Every product decision you make is a bet. You're betting that the feature you're building, the bug you're fixing, or the redesign you're shipping actually matters to the people using your product. Voice of Customer (VoC) is how you stop guessing and start making those bets with real evidence behind them.

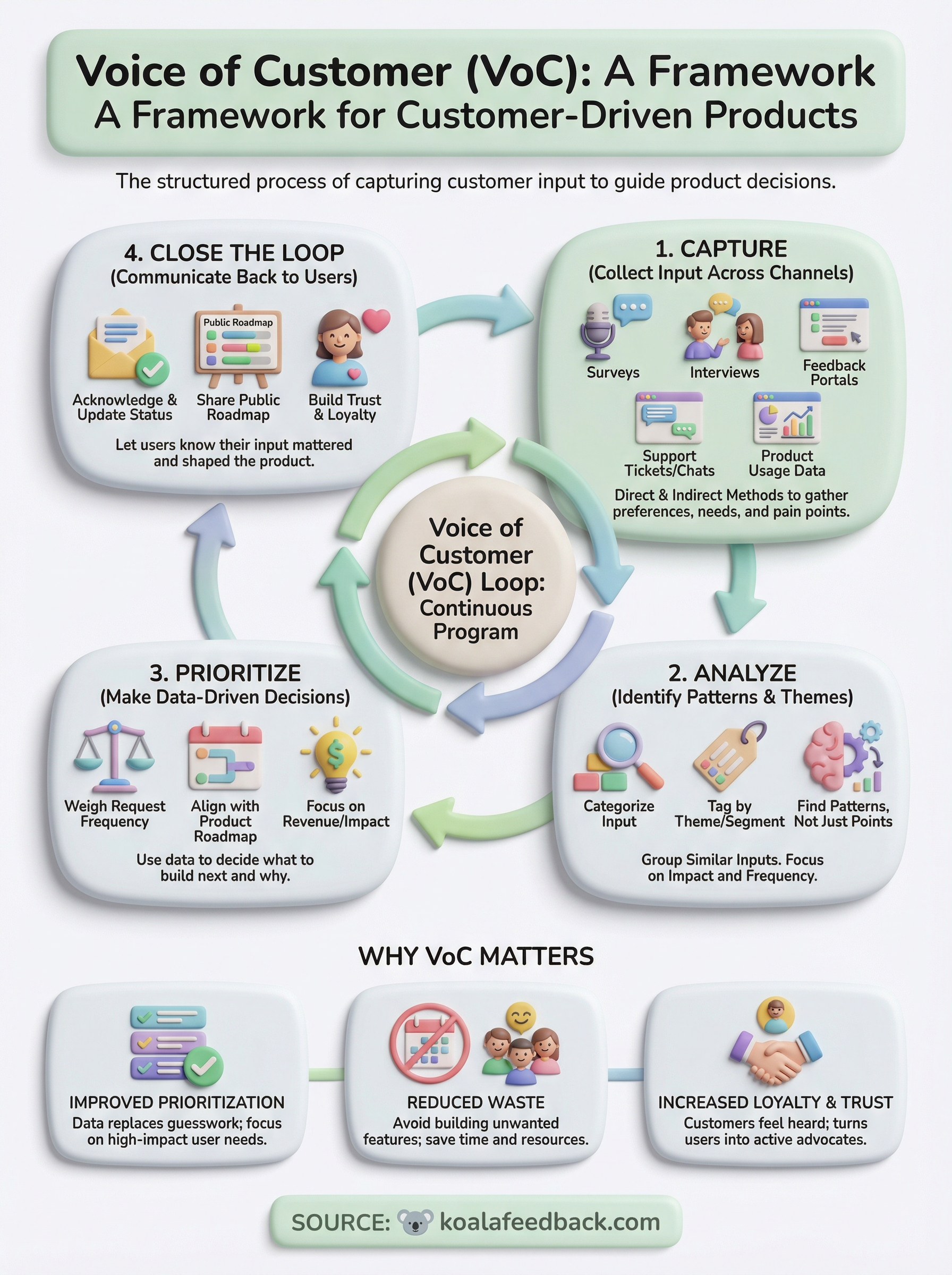

VoC is the structured process of capturing what your customers think, feel, need, and expect, then turning that raw input into actionable direction for your product and business. It covers everything from how you collect feedback to how you analyze it, prioritize it, and close the loop with users. Done well, a VoC program becomes the backbone of customer-driven product development. Done poorly (or not at all), you end up building features nobody asked for while ignoring the ones they're begging for.

At Koala Feedback, we build tools that help teams collect, organize, and act on user feedback, so VoC isn't just a concept we write about. It's the core of what we do every day. This article breaks down what Voice of Customer actually means, walks through the process of building a VoC program, and shares practical examples you can apply to your own product team. Whether you're starting from scratch or refining an existing feedback process, you'll walk away with a clear framework to work from.

Understanding what Voice of Customer is comes first. Knowing why it matters enough to build a real system around it is what separates product teams that grow from those that stagnate. Without a structured way to hear from users, you're making product decisions based on guesswork and internal assumptions, neither of which reflects what real customers experience day to day. VoC gives you a direct line to user reality, and that line pays off in ways that touch your revenue, retention, and product quality all at once.

When you skip a formal VoC process, decisions don't stop; they just get made with worse information. Product teams without structured customer input tend to over-prioritize features that look good in roadmap presentations but don't actually solve user problems. They ship things users didn't ask for, deprioritize things users desperately need, and then spend months trying to diagnose why retention is flat or why support tickets keep piling up.

The gap between what a team thinks users want and what users actually want is where most product failures begin.

This pattern also damages user trust in a less obvious but costly way. When customers submit feedback, request features, or report problems and hear nothing back, they don't quietly accept it. They churn, or they talk. Negative reviews, community complaints, and low NPS scores are often the downstream symptoms of a feedback process that never closes the loop. By the time those signals show up in your data, you've already lost ground.

One of the clearest advantages of a strong VoC program is what it does to your prioritization process. Instead of debating which feature to build next based on internal opinions, you have data. You know which requests come up most often, which user segments are making them, and how much those segments contribute to revenue. That changes the conversation from "what does the team think is important" to "what do the users who drive our business actually need," and those are very different conversations.

Structured feedback also reduces costly waste. When you collect and analyze VoC data before committing to a build, you catch misalignments early, when they're still cheap to fix. You avoid spending a full development sprint on a feature only to discover in user testing that nobody wants it the way you built it. Even a short feedback loop before scoping a feature can save weeks of rework later.

Customers who feel heard stay longer. When users see their feedback acknowledged, responded to, and eventually built into the product, they develop a sense of ownership over it. They stop being passive users and start becoming active advocates. That shift matters more than most teams realize, because advocates don't just stick around; they bring others with them.

Your VoC program also gives you something concrete to communicate back to your users. Sharing a public roadmap that reflects what users asked for signals that you take their input seriously. It turns what could be a one-way survey into an ongoing relationship. Users know their input shaped what you're building next, and that transparency builds the kind of trust that no amount of marketing spend can replicate. When users trust that their voice shapes your product, they invest in it, and in you.

Before building any system, you need a clear definition to anchor it. Voice of Customer refers to the structured discipline of capturing customer preferences, expectations, and pain points, then translating that raw data into decisions that shape your product, service, or business. It isn't just reading survey responses or collecting NPS scores. VoC is a continuous program that spans collection, analysis, prioritization, and response, and it operates across every touchpoint where customers interact with your company.

When people ask what Voice of Customer is, they often expect a one-sentence answer, but the full picture is broader. VoC encompasses every method your team uses to gather direct and indirect customer input, from formal research like interviews and surveys to passive signals like product usage data and support tickets. It also includes the internal processes you use to interpret that input, route it to the right teams, and act on it in a way customers can eventually see and recognize.

VoC is not a project you complete. It's an ongoing conversation between your product and the people who use it.

Scope matters here. A VoC program isn't limited to your support team or your product team in isolation. It cuts across sales, customer success, marketing, and engineering, because each function both generates and consumes customer insight. A sales rep who hears the same objection from ten prospects in a month is collecting VoC data. The product manager who reviews that data and adjusts the roadmap is acting on it, and both steps are equally part of the program.

Getting familiar with the vocabulary helps you communicate clearly across your team and set up your program with the right metrics from the start. Here are the terms that appear most frequently in VoC work:

Knowing these terms lets you design your program with precision and choose the right measurement approach for each stage of the customer journey rather than defaulting to whatever metric is easiest to track.

Once you understand Voice of Customer at a conceptual level, the next challenge is building the collection infrastructure that actually feeds your program with data. Most teams already have more collection opportunities than they realize; the problem is they're scattered, inconsistent, and rarely connected to a central place where they can be analyzed together. Choosing the right channels depends on where your users spend time, what kind of feedback you need, and how much friction you're willing to add to the customer experience.

The best VoC collection systems meet customers where they already are rather than asking them to jump through extra steps.

Direct channels are the ones where you explicitly ask customers for input. In-product surveys placed at key moments in the user journey, such as right after a user completes a core task or reaches a milestone, capture feedback when the experience is still fresh. Customer interviews go deeper: a 30-minute call with a segment of your users can surface problems and motivations that no survey ever would, because you can follow up, ask for examples, and watch how people respond when they explain something in their own words.

Feedback portals represent another high-value direct channel. When you give users a dedicated space to submit ideas, vote on existing requests, and comment on feature suggestions, you collect both qualitative input and quantitative signal in one place. The voting data alone tells you which pain points carry the most weight across your user base, without you having to interpret anything.

Not all VoC data comes from questions you ask. Support tickets and chat transcripts are among the richest passive sources available because customers write them when they're motivated by a real problem, not a survey invite. Reviewing these regularly reveals patterns you'd never catch otherwise. A spike in tickets around a specific workflow almost always signals a usability issue your team hasn't formally identified yet.
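One rough way to spot the kind of ticket spike described above is to compare the latest week's ticket count for each workflow against its recent average. The sketch below is illustrative only: the function name `spiking_workflows`, the `factor` threshold of 2.0, and the sample counts are all assumptions, not values from any particular support tool.

```python
from statistics import mean


def spiking_workflows(weekly_counts, factor=2.0):
    """Return workflows whose latest weekly ticket count exceeds
    `factor` times the average of the preceding weeks.

    `factor` is an arbitrary illustrative threshold; tune it to your data.
    """
    spikes = []
    for workflow, counts in weekly_counts.items():
        if len(counts) < 2:
            continue  # not enough history to compare against
        *history, latest = counts
        if latest > factor * mean(history):
            spikes.append(workflow)
    return spikes


# Made-up sample data: tickets per week, oldest first.
weekly_counts = {
    "checkout": [4, 5, 6, 15],
    "login": [3, 4, 3, 4],
}

spikes = spiking_workflows(weekly_counts)
print(spikes)
```

A simple ratio like this will miss slow-building trends and can overreact on low-volume workflows, so treat a flagged workflow as a prompt to read the tickets, not as a conclusion.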

Product usage data adds another layer. If a feature has low adoption despite high demand in feedback, that disconnect tells you something important about implementation, discoverability, or both. Pairing behavioral data with what customers say in reviews, community forums, and support conversations gives you a fuller picture than either source provides on its own. The goal is to triangulate across channels so no single data point carries more weight than it deserves.
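The demand-versus-adoption disconnect can be checked mechanically once request counts and adoption rates sit side by side. This is a minimal sketch under stated assumptions: the feature names, the numbers, and both thresholds (`min_requests`, `max_adoption`) are hypothetical and exist only to show the comparison.

```python
def find_adoption_gaps(features, min_requests=10, max_adoption=0.2):
    """Return features that were heavily requested but are rarely used.

    `features` maps a feature name to (request_count, adoption_rate),
    where adoption_rate is the share of active users using the feature.
    Both thresholds are illustrative assumptions.
    """
    return [
        name
        for name, (requests, adoption) in features.items()
        if requests >= min_requests and adoption < max_adoption
    ]


# Made-up sample data for illustration.
features = {
    "bulk edit": (32, 0.08),
    "saved filters": (18, 0.55),
    "keyboard shortcuts": (3, 0.04),
}

gaps = find_adoption_gaps(features)
print(gaps)
```

A feature that lands in this list is a candidate for a discoverability or implementation review, which is where interviews and support conversations help explain what the numbers cannot.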

Collecting feedback is only the first half of any serious Voice of Customer program. The second half, where most teams fall short, is turning that raw input into decisions you can act on and communicating those decisions back to the people who gave you the feedback in the first place. Skipping either step leaves your VoC program incomplete and, over time, trains your users to stop sharing input because they never see it go anywhere.

Raw feedback arrives in many forms: short survey responses, long support emails, brief vote totals, and one-line feature requests. Your first job is to group similar inputs into themes so you can see patterns rather than individual data points. Tagging feedback by product area, user segment, and request type lets you identify which problems affect the most users and which segments are raising the same issues repeatedly.
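To make the tagging step concrete, here is a small sketch of grouping tagged feedback into themes and counting how often each one appears. The `Feedback` fields, tag values, and sample entries are hypothetical; a real program would pull these from whatever tool stores your feedback.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Feedback:
    text: str
    product_area: str   # hypothetical tag, e.g. "reports"
    segment: str        # hypothetical tag, e.g. "enterprise"
    request_type: str   # hypothetical tag, e.g. "feature" or "bug"


def summarize_themes(items):
    """Group feedback by (product_area, request_type).

    Returns (theme, count, segments) tuples, most frequent theme first.
    """
    counts = Counter()
    segments = defaultdict(set)
    for item in items:
        theme = (item.product_area, item.request_type)
        counts[theme] += 1
        segments[theme].add(item.segment)
    return [(theme, n, sorted(segments[theme])) for theme, n in counts.most_common()]


# Made-up sample feedback for illustration.
feedback = [
    Feedback("Let me export reports to CSV", "reports", "enterprise", "feature"),
    Feedback("Need a PDF download for reports", "reports", "startup", "feature"),
    Feedback("Login page times out", "auth", "startup", "bug"),
    Feedback("Export button for dashboards please", "reports", "enterprise", "feature"),
]

themes = summarize_themes(feedback)
for theme, count, segs in themes:
    print(theme, count, segs)
```

Note that the three export requests use different wording but share the same tags, which is what lets them surface as one theme instead of three unrelated requests.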

The goal isn't to read every piece of feedback in isolation. It's to find the patterns that only become visible when you look at everything together.

Once you've categorized your data, prioritization comes down to impact and frequency. A request that appears across multiple user segments and ties directly to a core workflow deserves more weight than an edge case raised once. Pairing that qualitative signal with usage data, such as drop-off rates or feature adoption metrics, gives you a stronger rationale for what to build next and makes it easier to defend those decisions to your team and stakeholders.
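One possible way to turn "impact and frequency" into a ranking is a simple score that multiplies mention count by segment breadth and boosts requests tied to a core workflow. The formula, the `CORE_WORKFLOW_WEIGHT` value, and the sample themes below are assumptions chosen for illustration, not an established scoring method.

```python
from dataclasses import dataclass


@dataclass
class Theme:
    name: str
    mentions: int         # how often the request appears
    segments: int         # number of distinct user segments raising it
    core_workflow: bool   # whether it ties to a core workflow


CORE_WORKFLOW_WEIGHT = 2.0  # assumption: arbitrary illustrative boost


def priority_score(theme: Theme) -> float:
    """Frequency x segment breadth, boosted for core workflows."""
    weight = CORE_WORKFLOW_WEIGHT if theme.core_workflow else 1.0
    return theme.mentions * theme.segments * weight


# Made-up sample themes for illustration.
themes = [
    Theme("custom favicon", mentions=1, segments=1, core_workflow=False),
    Theme("report export", mentions=40, segments=3, core_workflow=True),
    Theme("dark mode", mentions=25, segments=2, core_workflow=False),
]

ranked = sorted(themes, key=priority_score, reverse=True)
for theme in ranked:
    print(theme.name, priority_score(theme))
```

A score like this is a conversation starter rather than a verdict. Its main value is making the weighting explicit so the team can argue about the inputs instead of about opinions.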

Understanding Voice of Customer at a deeper level means recognizing that the feedback cycle isn't complete until users know what happened with their input. Closing the loop doesn't require a personal response to every submission. It requires a reliable system for communicating progress, whether that's updating the status of a feature request, publishing a roadmap that reflects what's planned, or sending a notification when a requested feature ships.
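As a sketch of what a "reliable system" for closing the loop could look like, the example below ties notifications to status changes, so every requester hears back whenever a request moves. The `FeatureRequest` class, the status strings, and the message wording are hypothetical, and the function only builds messages; actually sending them is left to whatever email or in-app channel you use.

```python
from dataclasses import dataclass, field


@dataclass
class FeatureRequest:
    title: str
    status: str = "under review"                      # hypothetical status label
    requesters: list = field(default_factory=list)    # contact addresses


def update_status(request, new_status):
    """Change the request status and return one message per requester.

    Returns an empty list when the status did not actually change,
    so users are not notified about nothing.
    """
    if new_status == request.status:
        return []
    request.status = new_status
    return [
        f"To {email}: '{request.title}' is now {new_status}."
        for email in request.requesters
    ]


# Made-up sample request for illustration.
request = FeatureRequest(
    title="Export reports",
    requesters=["ana@example.com", "ben@example.com"],
)

messages = update_status(request, "shipped")
for message in messages:
    print(message)
```

Coupling the notification to the status change, rather than relying on someone remembering to write to users, is what makes the loop close consistently.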

Teams that close the loop consistently build something valuable over time: a reputation for actually listening. Users who see their requests acknowledged and eventually built into the product become invested in your success. They submit more thoughtful feedback, refer others, and give you the benefit of the doubt when something goes wrong. Closing the loop transforms a one-way data collection exercise into an ongoing relationship that pays off across retention, product quality, and growth.

Seeing Voice of Customer in practice makes the concept far easier to apply to your own situation. The examples below cover different business contexts so you can identify which patterns map most directly to your product and team, and the templates give you a ready-made starting point.

A VoC program doesn't need to be complex to be effective. Even a simple, consistent process for collecting and routing feedback outperforms a sophisticated system that nobody uses.

A SaaS company running a dedicated feedback portal noticed that roughly 40 percent of incoming feature requests centered on one workflow: exporting reports. Individual requests used different language, but the underlying need was identical. By tagging and grouping that input, the team identified a high-priority gap they had previously treated as a handful of one-off requests. After shipping the export feature, they notified every user who had requested it, and churn in that segment dropped measurably the following quarter.

Another strong example comes from structured customer interview programs. A product team scheduled monthly 30-minute calls with users across different plan tiers and discovered that enterprise customers were solving a core workflow problem with a manual workaround the team had no idea existed. That single finding redirected two sprint cycles toward an automation feature that became one of their highest-rated additions of the year.

Starting a VoC program is easier when you have concrete starting points rather than a blank document. Use the templates below to build your collection and response processes without starting from scratch.

In-product survey questions:

Feature request response template:

Closing the loop notification template:

Now you have a clear answer to what Voice of Customer is and a practical framework for building a program that actually works. The process comes down to three connected actions: collecting feedback across the right channels, analyzing it for patterns rather than individual data points, and closing the loop so users know their input shaped what you built. Each step reinforces the others, and skipping any one of them weakens the whole system.

Your next move is to pick one collection channel you're not using today and put a real process behind it. Start with a feedback portal where users can submit ideas, vote on requests, and track progress without needing to email your support team or dig through a community forum. Koala Feedback is built exactly for this. Start collecting user feedback and turn what your customers are already telling you into a product roadmap that earns their trust.

Start today and have your feedback portal up and running in minutes.