Most SaaS teams collect feedback. Few actually do something meaningful with it. The difference between the two almost always comes down to how well you've structured your voice of customer (VoC) best practices: the systems and habits that turn raw user input into product decisions that move the needle.

Without a clear VoC process, feedback piles up in spreadsheets, Slack threads, and support tickets. Features get built based on gut feeling or whoever talks the loudest. Users feel ignored, and churn follows. It's a pattern we've seen repeatedly at Koala Feedback, where we help SaaS teams centralize feedback collection, prioritize what matters, and close the loop with users through public roadmaps and voting boards.

This article breaks down six practices that separate high-performing VoC programs from the ones gathering dust. Each one is actionable, specific to SaaS, and designed to help you build what your users actually want, not what you assume they want. Let's get into it.

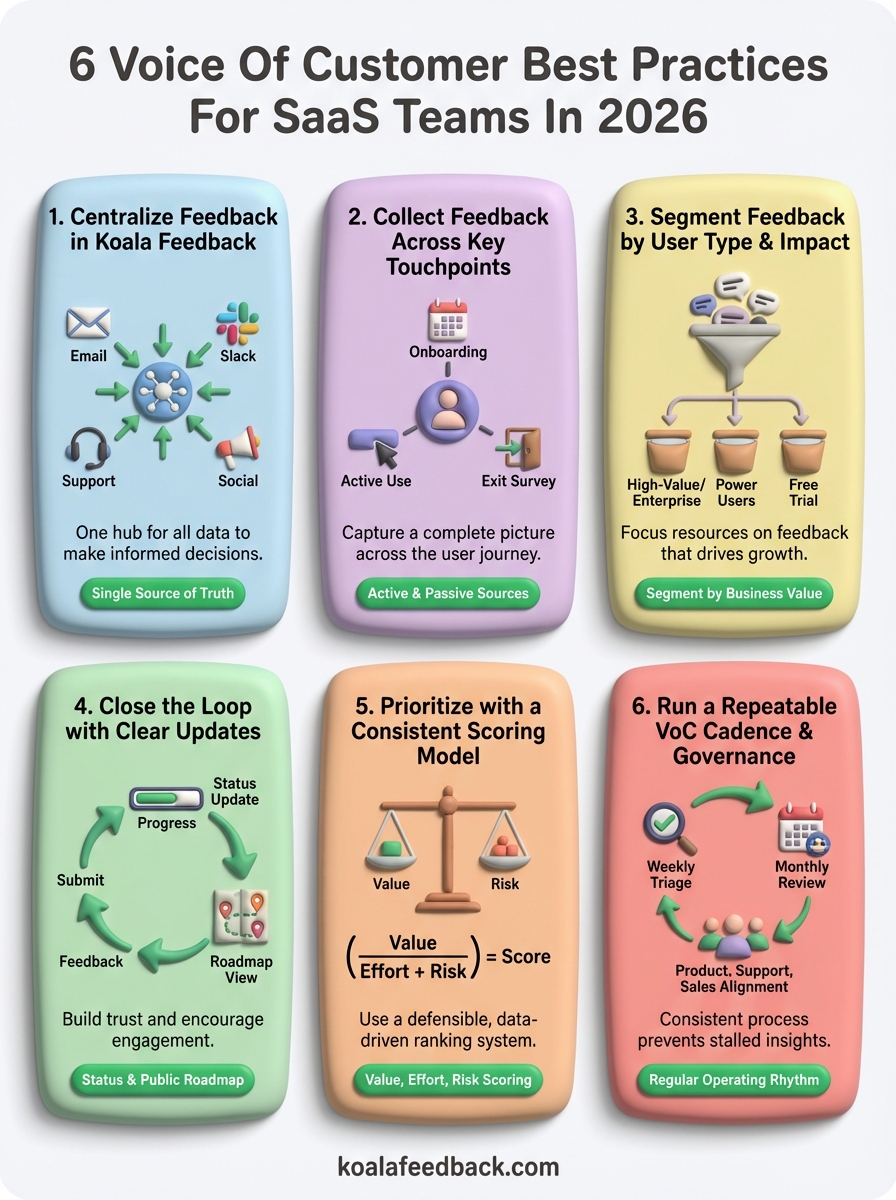

Scattered feedback is the root cause of most broken VoC programs. When your team pulls comments from email, Slack, support tickets, and sales calls separately, you lose context, miss patterns, and waste hours reconciling data that should already be in one place. Centralizing everything is the first and most critical of all voice of customer best practices.

When feedback lives in multiple places, product decisions rely on whoever shouts loudest or happens to be in the right meeting at the right time. Koala Feedback gives your team a single hub where every request, idea, and complaint lands, so decisions get made on actual data rather than noise.

A centralized feedback system is what separates teams that build with confidence from those that guess their way to the next release.

Start by mapping where feedback currently enters your organization: support tickets, in-app surveys, sales calls, social channels. Then route each source into Koala Feedback's feedback portal, either by sharing the link directly with users or embedding it in your product. Set up boards organized by product area so incoming requests fall into the right category from day one, rather than sitting in an unsorted pile until someone finds time to triage them.

Duplicate requests are unavoidable with a large user base. Koala Feedback automatically identifies overlapping submissions and groups them together, so you see the true volume behind each theme instead of counting the same idea five separate times. Use tags and categories to label feedback by feature area, user type, or urgency. This setup takes minutes but saves hours every sprint when your team needs to assess what is worth prioritizing next.
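To see why grouping changes the numbers, here is a minimal Python sketch. It is not Koala Feedback's matching logic, which this article does not describe; it assumes a deliberately naive rule (identical titles after lowercasing and stripping punctuation), and the function names `normalize` and `group_duplicates` are hypothetical.

```python
import re
from collections import defaultdict


def normalize(title: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace (a naive, assumed rule)."""
    cleaned = re.sub(r"[^a-z0-9\s]", "", title.lower())
    return " ".join(cleaned.split())


def group_duplicates(titles: list[str]) -> dict[str, list[str]]:
    """Group submissions whose normalized titles match exactly."""
    groups: dict[str, list[str]] = defaultdict(list)
    for title in titles:
        groups[normalize(title)].append(title)
    return dict(groups)


# Made-up example submissions.
submissions = ["Dark mode", "dark mode!", "Dark  Mode", "CSV export"]
grouped = group_duplicates(submissions)

# Volume per theme: three raw submissions collapse into one "dark mode" request.
volume = {theme: len(items) for theme, items in grouped.items()}
```

Exact matching misses paraphrases such as "night theme", so treat this as a way to reason about volume rather than a replacement for real duplicate detection.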

Once feedback is centralized, track these numbers weekly:

Centralizing feedback only works if you're actually capturing it from the right places. Most SaaS teams over-index on one or two channels and miss the broader signal hiding in other touchpoints. Broadening your collection approach is a core voice of customer best practice that directly improves the quality of the data you work with.

Users share feedback in different ways depending on where they are in their journey. A new user hits friction during onboarding in a very different context than a power user requesting an advanced feature. If you only survey one group or listen to one channel, you end up with a skewed picture of what your product actually needs.

Collecting feedback from one channel is like reading every other page of a book and wondering why the plot doesn't make sense.

Timing matters more than frequency. Trigger in-app prompts after specific actions, such as completing a workflow or canceling a subscription, rather than blasting users on login. Keep surveys short and contextual, ideally one to three questions, and always link to your Koala Feedback portal for users who want to say more.
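One way to make event-based timing concrete is a small lookup from product events to surveys. The sketch below is illustrative only: the event names, the questions, and the `survey_for` helper are assumptions, not part of any real SDK.

```python
MAX_QUESTIONS = 3  # upper end of the one-to-three question guideline

# Hypothetical event names mapped to short, contextual surveys.
SURVEYS = {
    "workflow_completed": ["How easy was it to finish this workflow?"],
    "subscription_canceled": [
        "What is the main reason you are canceling?",
        "What could we have done differently?",
    ],
}


def survey_for(event: str) -> list[str]:
    """Return the questions to show for an event, or an empty list for no prompt."""
    questions = SURVEYS.get(event, [])
    if len(questions) > MAX_QUESTIONS:
        raise ValueError(f"survey for {event!r} exceeds {MAX_QUESTIONS} questions")
    return questions
```

Because `login` has no entry, `survey_for("login")` returns an empty list and no prompt is shown, which matches the advice to avoid surveying users the moment they sign in.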

Active feedback means you asked for it: surveys, interviews, and feedback forms. Passive feedback arrives on its own: support tickets, reviews, and sales call notes. Pulling both into one system gives you a complete picture of user sentiment without gaps.

Not all feedback deserves equal weight. Treating every request the same wastes your team's capacity on low-value noise while high-impact needs sit waiting. Segmentation cuts through that problem by giving you a clear filter for deciding whose feedback shapes your roadmap and why.

A feature request from a free trial user carries different weight than the same request from your largest enterprise account. Segmenting by user type is one of the most underused voice of customer best practices, and it directly determines whether your roadmap reflects the users who actually drive your business growth.

Build for the users you want to keep, not just the ones who submit the most tickets.

Start with segments tied to business value: plan tier, account revenue, and user role. Then add behavioral segments like power users versus inactive accounts to separate engaged users from those already drifting toward churn. These two layers give your team context before the conversation even starts.

Tag every submission with at least two attributes: user segment and feature area. This pairing lets you filter quickly during sprint planning and spot patterns such as enterprise users requesting the same workflow fix repeatedly.

Keep your tag list tight, ideally under 15 options. A bloated taxonomy creates confusion and falls apart the moment your team disagrees on which label fits.
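The two-attribute rule and the tag limit are easy to enforce in code. This is a minimal sketch under stated assumptions: the segment and feature-area names are invented examples, and `Submission`, `validate`, `taxonomy_is_tight`, and `filter_by` are hypothetical helpers rather than a Koala Feedback API.

```python
from dataclasses import dataclass

# Hypothetical tag lists; replace with your own segments and feature areas.
SEGMENTS = {"free_trial", "pro", "enterprise"}
FEATURE_AREAS = {"billing", "onboarding", "reporting", "integrations"}
TAG_LIMIT = 15  # the combined list should stay under this number


@dataclass(frozen=True)
class Submission:
    title: str
    segment: str
    feature_area: str


def validate(sub: Submission) -> None:
    """Reject submissions that lack a known segment or feature area."""
    if sub.segment not in SEGMENTS:
        raise ValueError(f"unknown segment: {sub.segment!r}")
    if sub.feature_area not in FEATURE_AREAS:
        raise ValueError(f"unknown feature area: {sub.feature_area!r}")


def taxonomy_is_tight() -> bool:
    """Check that the combined tag list stays under the limit."""
    return len(SEGMENTS | FEATURE_AREAS) < TAG_LIMIT


def filter_by(subs: list[Submission], segment: str, feature_area: str) -> list[Submission]:
    """Return submissions matching both attributes, e.g. for sprint planning."""
    return [s for s in subs if s.segment == segment and s.feature_area == feature_area]
```

Filtering for `("enterprise", "reporting")` during planning then surfaces exactly the kind of repeated enterprise request described above.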

Check these numbers regularly to confirm segmentation is adding clarity rather than overhead.

Collecting and organizing feedback means nothing if users never hear back. Closing the loop is one of the most neglected voice of customer best practices, and whether you do it largely determines whether users bother submitting feedback in the future.

Users who submit feedback and receive silence assume you ignored them. That silence erodes trust faster than most product bugs will. When you respond consistently, you signal that feedback drives real decisions, which keeps your most engaged users contributing.

Users who feel heard submit more feedback and churn less. The loop is not a courtesy; it is a retention mechanism.

You don't need to reply to every request individually, but acknowledging submissions within 24 to 48 hours sets a clear standard your team can actually hold. Use automated status updates in Koala Feedback to notify users the moment their request moves from submitted to under review or planned, so your team avoids sending manual emails for every change.
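If you want to check whether your team is holding that standard, a small helper is enough. The sketch below assumes you can export a submission timestamp and an optional acknowledgement timestamp for each request; the `overdue` function and the 48-hour constant (the upper end of the range above) are illustrative choices.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

ACK_WINDOW = timedelta(hours=48)  # upper bound of the 24 to 48 hour target


def overdue(submitted_at: datetime, acknowledged_at: Optional[datetime], now: datetime) -> bool:
    """Return True if the submission missed, or is currently missing, the window."""
    deadline = submitted_at + ACK_WINDOW
    if acknowledged_at is not None:
        # Acknowledged: late only if the acknowledgement came after the deadline.
        return acknowledged_at > deadline
    # Not yet acknowledged: late once the deadline has passed.
    return now > deadline


# Example with made-up timestamps.
submitted = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
checked = submitted + timedelta(hours=60)
still_waiting = overdue(submitted, None, checked)
```

Here `still_waiting` is `True`, because 60 hours have passed without an acknowledgement.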

Customizable statuses in Koala Feedback let you map each request to a stage your users can understand: planned, in progress, or shipped. Pair this with a public roadmap that shows what your team is actively building. Users often check the roadmap before deciding whether to stay, and a visible one can reduce support tickets asking when a feature is coming.

Track these two numbers to confirm your loop is actually working.

Without a repeatable scoring system, prioritization becomes a negotiation rather than a decision. Your loudest stakeholder wins, and the features that would actually move retention get buried under low-priority requests. Adding a consistent model is one of the voice of customer best practices that turn raw demand into a ranked, defensible list your team can act on.

Arbitrary prioritization wastes engineering time and frustrates the users who submitted their most critical needs. A scoring model removes the subjective back-and-forth by giving every request a comparable number based on the same criteria, so sprint planning moves faster and the rationale behind each decision is easy to explain.

Rate each request on three dimensions: business value (revenue impact, retention, and strategic fit), implementation effort (engineering time and complexity), and risk (what could break when you ship it). Score each dimension from one to five, then divide value by the sum of effort and risk. Requests with high value and low friction rise to the top automatically.
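The formula above is simple enough to sketch in a few lines of Python. This version treats risk as a cost, as the division implies; the `Request` class and the example requests and ratings are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    title: str
    value: int   # business value, rated 1-5
    effort: int  # implementation effort, rated 1-5
    risk: int    # risk, rated 1-5

    def __post_init__(self) -> None:
        # Enforce the one-to-five scale on every dimension.
        for name in ("value", "effort", "risk"):
            rating = getattr(self, name)
            if not 1 <= rating <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {rating}")

    @property
    def score(self) -> float:
        """Value divided by the sum of effort and risk."""
        return self.value / (self.effort + self.risk)


# Made-up requests and ratings.
requests = [
    Request("SSO login", value=5, effort=3, risk=2),
    Request("Bulk CSV export", value=4, effort=1, risk=1),
    Request("Custom themes", value=2, effort=3, risk=1),
]

# Highest score first.
ranked = sorted(requests, key=lambda r: r.score, reverse=True)
```

With these ratings, "Bulk CSV export" scores 2.0, "SSO login" scores 1.0, and "Custom themes" scores 0.5, so the cheap, valuable export ranks first even though SSO has the higher raw value.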

A scoring model does not make the decision for you; it removes the bias from the conversation so the best options surface on their own.

Group high-scoring requests that share a common theme into a single roadmap bet. One bet covering three related requests delivers more user impact than three isolated fixes built without a connecting purpose.
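Grouping into bets can be sketched the same way. The example below assumes each request already carries a theme label and a score; the cutoff of 1.0, the `build_bets` helper, and the sample data are all assumptions you would tune for your own backlog.

```python
from collections import defaultdict

SCORE_THRESHOLD = 1.0  # assumed cutoff for "high-scoring"


def build_bets(scored, threshold=SCORE_THRESHOLD):
    """Group high-scoring requests by theme; keep themes with more than one request.

    `scored` is an iterable of (title, theme, score) tuples.
    """
    by_theme = defaultdict(list)
    for title, theme, score in scored:
        if score >= threshold:
            by_theme[theme].append(title)
    return {theme: titles for theme, titles in by_theme.items() if len(titles) > 1}


# Made-up scored requests.
scored_requests = [
    ("Bulk CSV export", "reporting", 2.0),
    ("Scheduled reports", "reporting", 1.5),
    ("Report sharing links", "reporting", 1.2),
    ("SSO login", "security", 1.0),
    ("Custom themes", "branding", 0.5),
]
bets = build_bets(scored_requests)
```

Only the reporting theme qualifies as a bet here: it has three requests above the cutoff, while SSO stands alone and custom themes falls below the threshold.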

A one-time feedback push does not build a strong VoC program. Without a regular operating rhythm, insights stall between reviews, stakeholders lose context, and the same conversations happen over and over. Governance turns voice of customer best practices from a project into a process your team runs consistently.

Ad hoc feedback reviews create gaps where critical signals go unnoticed for weeks. A regular cadence forces your team to engage with data consistently, which means problems surface earlier and roadmap decisions stay grounded in current user needs rather than memory.

Consistency is what separates teams that learn from their users every quarter from those that scramble to understand churn after it happens.

Run a weekly 30-minute feedback triage where one person reviews new submissions, applies tags, and flags anything urgent. Then hold a monthly cross-team review to assess trends, update roadmap statuses, and identify themes ready for scoring. That two-step cycle keeps the process manageable without letting insights pile up.

Shared access to your feedback boards removes the version-of-truth problem that derails alignment meetings. Assign each team a clear role: support tags incoming tickets, sales logs requests from calls, and product owns prioritization decisions. Everyone contributes to the same dataset so no team walks into planning with a different picture.

Track these two numbers to confirm your cadence is working.

The six voice of customer best practices in this article build on each other deliberately. Start by centralizing your feedback in one place, then expand your collection touchpoints, segment by user type, close the loop consistently, score with a repeatable model, and lock in a governance cadence.

You don't need to implement all six at once. Pick the practice that addresses your biggest current gap and build from there. Most teams see the fastest early wins by centralizing feedback first, since everything else depends on having clean, organized data in a single system before the other steps deliver value.

When you're ready to stop guessing and start building what your users actually need, try Koala Feedback to centralize your feedback, manage your public roadmap, and close the loop with users faster than your current setup allows.

Start today and have your feedback portal up and running in minutes.