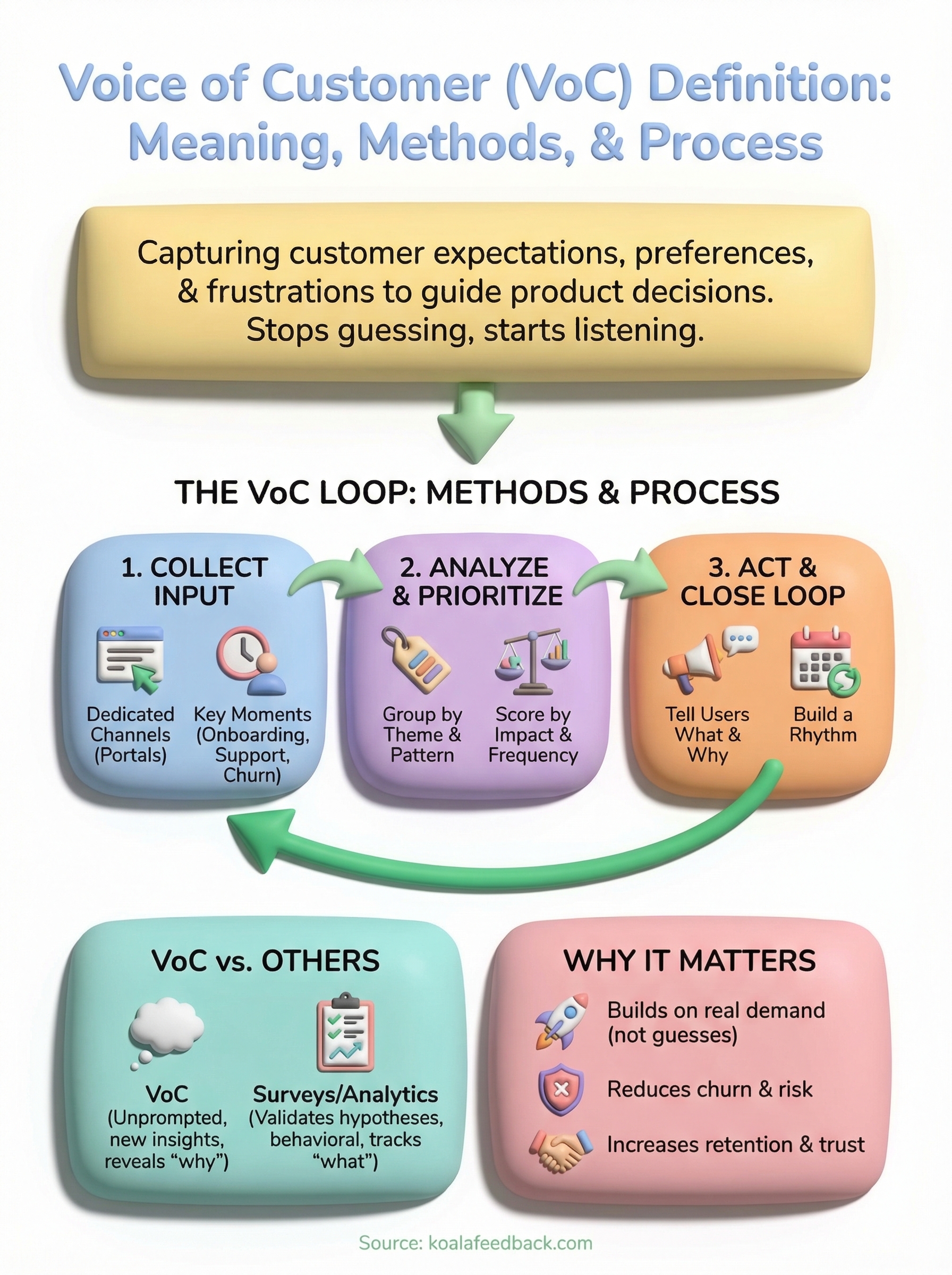

Every product decision you make is a bet. You're betting that users want what you're building. Voice of the Customer (VoC) is the process of capturing customers' expectations, preferences, and frustrations, then using that input to guide what you build, fix, or improve. It's not a buzzword. It's a structured way to stop guessing and start listening.

When done right, VoC programs turn scattered opinions into a clear signal. They tell you which features matter most, where your product falls short, and what keeps users coming back. Without that signal, teams default to gut feelings and internal politics, and ship things nobody asked for. With it, you prioritize based on real demand, not assumptions.

That's exactly why we built Koala Feedback, to give product teams a single place to collect, organize, and act on user feedback. So this topic is close to home for us. In this article, you'll get a clear definition of Voice of the Customer, the methods used to capture it, practical examples, and a framework you can apply to your own product. Whether you're a product manager at a startup or part of a larger dev team, this guide will give you what you need to put VoC into practice.

Product teams today face constant pressure to ship fast and get it right. Most feature ideas come from inside the building, from executives, roadmap reviews, and internal priorities. That's not wrong, but it creates a blind spot. You end up optimizing for what you think users want rather than what they've actually asked for. That's where VoC programs earn their value: they replace assumption with evidence and gut feel with a real user signal.

Every feature you build without user validation carries a hidden cost. Development time, design effort, and engineering cycles go into something that might miss the mark entirely. Research consistently shows that a significant portion of new product features goes largely unused after launch. When you skip the VoC stage, where you deliberately gather and interpret what users need, you're not just risking a bad feature. You're risking user churn, negative reviews, and a roadmap that drifts further from actual demand.

The earlier you surface what users actually need, the less expensive it becomes to build the right thing.

Users rarely complain loudly when a feature misses. Instead, they quietly stop using the product, downgrade their plan, or switch to a competitor. By the time churn shows up in your metrics, the damage is already done and reversing it takes far more effort than getting input upfront would have required.

When you have a structured way to collect and interpret user feedback, every product decision gets grounded in data. Feature prioritization becomes clearer because you can see which requests appear most often and from which user segments. You stop debating direction based on who speaks loudest in a planning meeting. Instead, you let the volume and pattern of real feedback guide where to invest next.

SaaS products face constantly shifting user needs, and a feature users ignored last year might be their top request today because of a market shift or a competitor move. Staying close to your users through consistent VoC practices means you catch those shifts early enough to act before they cost you accounts and revenue.

There's a human side to this that often gets overlooked. Users who submit feedback and see it acted on don't just stick around longer; they become advocates. They tell others about your product, leave stronger reviews, and engage more deeply with what you ship. That kind of loyalty is hard to manufacture through marketing alone. You build it by closing the loop: collecting input, making decisions based on it, and communicating what you did and why.

Teams that treat feedback as a one-way funnel, collecting it and then going quiet, miss that opportunity entirely. Transparency about your roadmap and decision-making signals to users that their voice actually matters. That signal differentiates your product in a crowded market where most companies collect feedback and do nothing visible with it.

Your VoC program isn't just a research method. It's an ongoing relationship between your product team and the people who use what you build. Invest in that relationship consistently, and the returns show up in retention, referrals, and a roadmap that maps to what your market actually needs.

People often treat surveys, analytics, and market research as interchangeable with VoC. They're not. Each tool gives you a different slice of reality, and understanding what each one does well, and where it falls short, helps you build a feedback system that actually works. VoC centers on capturing direct, unprompted input from the people already using your product. That's different from measuring clicks or sending a structured questionnaire where you've already decided what to ask.

Surveys are useful for confirming a hypothesis you already have. You ask a specific set of questions, users answer them, and you analyze the results. The problem is that surveys only surface what you thought to ask about. If users have a pain point that didn't occur to your team, it never shows up in the data because there was no field for it.

VoC programs are designed to surface what users think matters, not just validate what you already assumed.

This is where VoC stands apart. When users submit feedback through an open channel like a feedback portal or a feature request board, they tell you what's on their mind without being guided by your framing. That unprompted input often contains the most valuable signal: complaints you didn't expect, use cases you didn't anticipate, and requests that reveal product-market gaps you weren't tracking.

Product analytics tell you what users do inside your product. They show you where users drop off, which features get used, and how often users return. That behavioral data is valuable, but it doesn't explain motivation. A user who stops using a feature might be confused, might find it unreliable, or might have found a workaround elsewhere. Analytics alone won't tell you which one is true.

Market research takes a different angle. It focuses on the broader competitive landscape, buyer personas, and purchasing behavior, which helps you understand the market context your product sits in. What it doesn't give you is granular insight into what your current users need next or why specific parts of your product frustrate them.

Used together, these sources strengthen your VoC program. Surveys confirm or reject a hypothesis you already have. Analytics show you where to look. Market research tells you where the market is heading. And direct customer voice tells you exactly what your users need you to fix or build.

Collecting VoC data isn't about sending one survey at the end of the quarter. Your users interact with your product across multiple moments, and each touchpoint offers a different kind of signal. The goal is to create a system that captures input continuously, not just when you think to ask. Applying VoC in practice means setting up channels and triggers that pull feedback in automatically across the full customer experience.

The most direct way to collect VoC data is to give users a permanent place to submit feedback whenever they want. A public feedback portal lets users submit ideas, report frustrations, and vote on what others have suggested, without you having to prompt them at all. This kind of channel captures unprompted input, which tends to be more honest and specific than responses to structured surveys.

The best feedback arrives when users have a reason to share it, not when you've scheduled a research cycle.

Voting and threading on submitted ideas also help you see which requests resonate across your user base. When ten users independently submit the same pain point and thirty more upvote it, you have evidence, not just an opinion. That's the kind of signal that justifies reprioritizing a sprint.

Timing matters as much as the channel. Feedback collected right after a meaningful action, like completing onboarding, submitting a support ticket, or canceling a subscription, carries more context than feedback collected at random. Users can tell you exactly what worked, what confused them, or what drove them away because the experience is still fresh.

Here are four high-value moments to target for VoC collection:

Each of these moments reveals a different layer of the user experience. Onboarding feedback surfaces friction in your first impression. Churn feedback tells you what your product failed to deliver. Together, they give you a complete picture of where users struggle and where your product has room to improve.
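As a rough illustration of event-triggered collection, the sketch below maps the trigger moments mentioned above (completing onboarding, submitting a support ticket, canceling a subscription) to a follow-up question. The event names, the prompt wording, and the `prompt_for_event` helper are all hypothetical; a real product would use its own event names and delivery channel.

```python
from typing import Optional

# Hypothetical event names and prompt wording; replace with your product's own.
FEEDBACK_PROMPTS = {
    "onboarding_completed": "What, if anything, was confusing during setup?",
    "support_ticket_submitted": "What were you trying to do when you hit this issue?",
    "subscription_canceled": "What was the main reason you decided to cancel?",
}


def prompt_for_event(event_name: str) -> Optional[str]:
    """Return the feedback prompt for a trigger event, or None if the event is not a trigger."""
    return FEEDBACK_PROMPTS.get(event_name)
```

Keeping the mapping in one place makes it easy to review which moments you ask about and to add new triggers without touching the rest of the code.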

Collecting feedback is only half the work. Once data flows in from multiple channels, the real challenge is making sense of it and turning raw input into clear decisions. A solid VoC program includes not just gathering customer input but systematically analyzing and ranking it so your team knows exactly where to focus next. Without a structured process for this step, feedback piles up, patterns stay invisible, and nothing actually changes on your roadmap.

When feedback arrives in volume, your first job is to organize it into categories that reflect real patterns. Tag submissions by product area, user type, or request type so you can spot clusters quickly. When multiple users describe the same friction in different words, those variations all point to the same underlying problem, and grouping them reveals just how common that problem really is across your user base.
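To make the tagging step concrete, here is a minimal sketch that counts submissions per product area so clusters become visible. The `Submission` fields and the `count_by_area` helper are illustrative assumptions, not the data model of any particular feedback tool.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Submission:
    text: str
    product_area: str  # hypothetical tag, e.g. "billing" or "reporting"
    user_type: str     # hypothetical tag, e.g. "free" or "enterprise"


def count_by_area(submissions: list[Submission]) -> list[tuple[str, int]]:
    """Count submissions per product area, most common first."""
    return Counter(s.product_area for s in submissions).most_common()


sample = [
    Submission("Can't find the export button", "reporting", "free"),
    Submission("Export to CSV is hidden", "reporting", "enterprise"),
    Submission("Invoice PDF is missing our tax ID", "billing", "enterprise"),
]
print(count_by_area(sample))  # [('reporting', 2), ('billing', 1)]
```

The two reporting submissions use different words for the same friction, and the shared tag is what groups them. The same approach works for `user_type` or any other tag you apply.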

The goal of categorization isn't to file feedback away. It's to turn individual opinions into visible patterns you can act on.

A feedback portal with automatic deduplication saves significant time here. Instead of manually sorting through hundreds of similar requests, you can see aggregated themes immediately and focus your analysis on the signals that surface most often, rather than getting buried in noise.

Not all feedback deserves the same priority. Once you've grouped your data, evaluate each theme against two dimensions: how often the issue appears and how much impact resolving it would have on user experience or retention. A request that shows up from five enterprise users might outweigh one that appears fifty times from free-tier accounts, depending on your business goals and where your revenue comes from.

You can structure this evaluation using a simple scoring framework:

| Criteria | Weight |
|---|---|
| Frequency of requests | High |
| Segment value (revenue, churn risk) | High |
| Effort required to build | Medium |
| Alignment with product strategy | Medium |

Voting data from your feedback portal gives you a head start on frequency scoring because users have already ranked requests against each other organically. Pair that with segment data from your CRM or billing tool, and you get a priority list grounded in both user demand and business impact. That combination makes it far easier to defend roadmap decisions to stakeholders and to ship things that actually move your retention numbers in a meaningful direction.
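One way to turn the scoring framework into numbers is a simple weighted sum. The sketch below assumes "High" maps to a weight of 2.0 and "Medium" to 1.0, that each input is normalized to a 0 to 1 range, and that effort counts against a theme. These choices, along with the `Theme` and `rank_themes` names, are assumptions for illustration; tune them to your own business goals.

```python
from dataclasses import dataclass

# Assumed numeric weights: "High" = 2.0, "Medium" = 1.0.
WEIGHTS = {"frequency": 2.0, "segment_value": 2.0, "effort": 1.0, "strategy_fit": 1.0}


@dataclass
class Theme:
    name: str
    frequency: float      # 0..1, share of requests that mention this theme
    segment_value: float  # 0..1, revenue or churn risk of the requesting accounts
    effort: float         # 0..1, higher means more expensive to build
    strategy_fit: float   # 0..1, alignment with product strategy


def priority_score(theme: Theme) -> float:
    """Weighted sum in which effort is subtracted rather than added."""
    return (
        WEIGHTS["frequency"] * theme.frequency
        + WEIGHTS["segment_value"] * theme.segment_value
        - WEIGHTS["effort"] * theme.effort
        + WEIGHTS["strategy_fit"] * theme.strategy_fit
    )


def rank_themes(themes: list[Theme]) -> list[Theme]:
    """Return themes sorted from highest to lowest priority score."""
    return sorted(themes, key=priority_score, reverse=True)
```

With these weights, a theme requested by a few high-value accounts can outrank a more frequent request from low-value accounts, which matches the enterprise versus free-tier tradeoff described above.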

Collecting and analyzing feedback creates momentum, but that momentum dies if nothing visibly changes. Closing the loop means taking action on what you've learned and then communicating that action back to the users who gave you input in the first place. This final step is what separates a functional VoC program from a feedback inbox nobody checks. When users see that their input shaped your product, they trust your process and engage more deeply with what you ship next.

Most teams announce new features without connecting them to user feedback. That's a missed opportunity. When you explicitly tie a release to a feature request, you validate the users who asked for it and signal to everyone else that submitting feedback is worth their time. A simple status update on your public roadmap, a changelog note, or even a direct reply to a feedback thread can close that loop effectively.

You don't need a long explanation. Telling users "we built this because you asked for it" is enough to build lasting trust.

Transparency about decisions you didn't make matters just as much. If a frequently requested feature isn't on your roadmap, say so and explain the tradeoff. Users handle "we considered it and here's why it's not the right move now" far better than silence. That honesty keeps them engaged instead of frustrated and wondering whether anyone read their submission.

Closing the loop can't be a one-time event. Your product evolves continuously, so your process for reviewing, acting on, and communicating feedback needs to follow the same cadence. Build a regular review cycle into your sprint planning or quarterly roadmap process so feedback informs decisions on a schedule rather than when someone remembers to look.

Here's a simple rhythm you can follow:

Consistency in this process compounds over time. The longer you maintain it, the richer your feedback data becomes and the sharper your prioritization gets. Users who see your product improving in response to their input don't just renew their subscriptions. They refer others, write stronger reviews, and give you the kind of word-of-mouth growth that no marketing budget can replicate.

The voice of customer definition you've worked through in this article is a foundation, not a finish line. You now have a clear picture of what VoC means, why it matters, how to collect it across touchpoints, and how to turn raw feedback into decisions that improve retention and drive growth. The gap between teams that ship what users need and teams that guess is almost always a gap in how consistently they listen.

Your next move is to set up the infrastructure that makes listening automatic. Give your users a permanent channel to submit feedback, vote on what matters most, and see your product roadmap evolve in response to their input. That's exactly what Koala Feedback is built to do. You can centralize feedback, track themes, and close the loop with a public roadmap, all in one place. Start building that habit now, and your roadmap decisions will get sharper every quarter.

Start today and have your feedback portal up and running in minutes.