Every product team collects feedback in some form: support tickets, survey responses, Slack messages from sales, feature requests buried in email threads. But collecting feedback isn't the same as acting on it. A product feedback loop is the structured process that turns raw user input into actual product improvements, then circles back to users so they know they've been heard. Without that loop, feedback goes into a void, and users stop giving it.

The concept sounds straightforward, but execution is where most teams stumble. Feedback gets scattered across tools, priorities shift without clear data, and users never learn what happened to their suggestions. That's exactly the problem we built Koala Feedback to solve: a single place to collect, organize, prioritize, and communicate feedback so the loop actually closes.

This article breaks down how a product feedback loop works step by step, why it matters for retention and product decisions, and how to build one that doesn't fall apart after the first sprint. Whether you're setting up your first formal feedback process or tightening an existing one, you'll walk away with a clear framework to follow.

A product feedback loop isn't just a nice operational habit. It directly shapes whether users keep using your product, whether your team builds the right features, and whether you spend engineering time on work that actually moves the needle. Most product teams underestimate the compounding effect of running this process consistently, and they pay for it through churn, misaligned roadmaps, and wasted sprints.

When someone submits feedback and never hears back, they draw a clear conclusion: their input doesn't matter. That conclusion drives churn just as effectively as a broken feature does. Users who engage with your product enough to submit ideas are your most invested users, and losing them hurts more than losing passive ones.

The fastest way to kill your feedback channel is to let it go silent after users submit their input.

You don't have to act on every request. But you do need to close the loop, even if your answer is "we reviewed this and it's not on our roadmap right now." Users respect honesty. What they can't tolerate is silence. When you communicate your decisions, including the "no" ones, users trust your team more and stay engaged with your product direction over the long term.

Building based on gut instinct or the loudest voice in the room is expensive. A well-run feedback process gives your team real data on what users need, ranked by frequency and impact rather than by who happened to be in the last sales call. That shift changes how you plan work, write specs, and decide what to cut from the backlog.

Product teams that track feedback systematically also catch friction points earlier. When multiple users report the same workflow problem, that pattern surfaces before it turns into a support escalation or a churn spike. You stop reacting to fires and start identifying problems while they're still small and fixable.

Product managers, designers, engineers, and customer success teams often operate with different pictures of what users actually want. A centralized feedback process fixes that disconnect. When everyone pulls from the same organized pool of user input, debates about what to build next get shorter and more grounded in evidence rather than opinion.

Your sales team stops pushing for one-off custom features once they can see broader demand patterns and point prospects to a public roadmap instead. Your engineers spend less time in planning meetings defending why a feature got deprioritized, because the data explains it. Alignment stops being something you have to force and becomes a natural output of the process you already run.

Taken together, these benefits compound quickly. A team that closes the loop consistently builds a stronger product, retains more users, and makes faster decisions with less internal friction. A team that skips it keeps rebuilding trust it never fully earns.

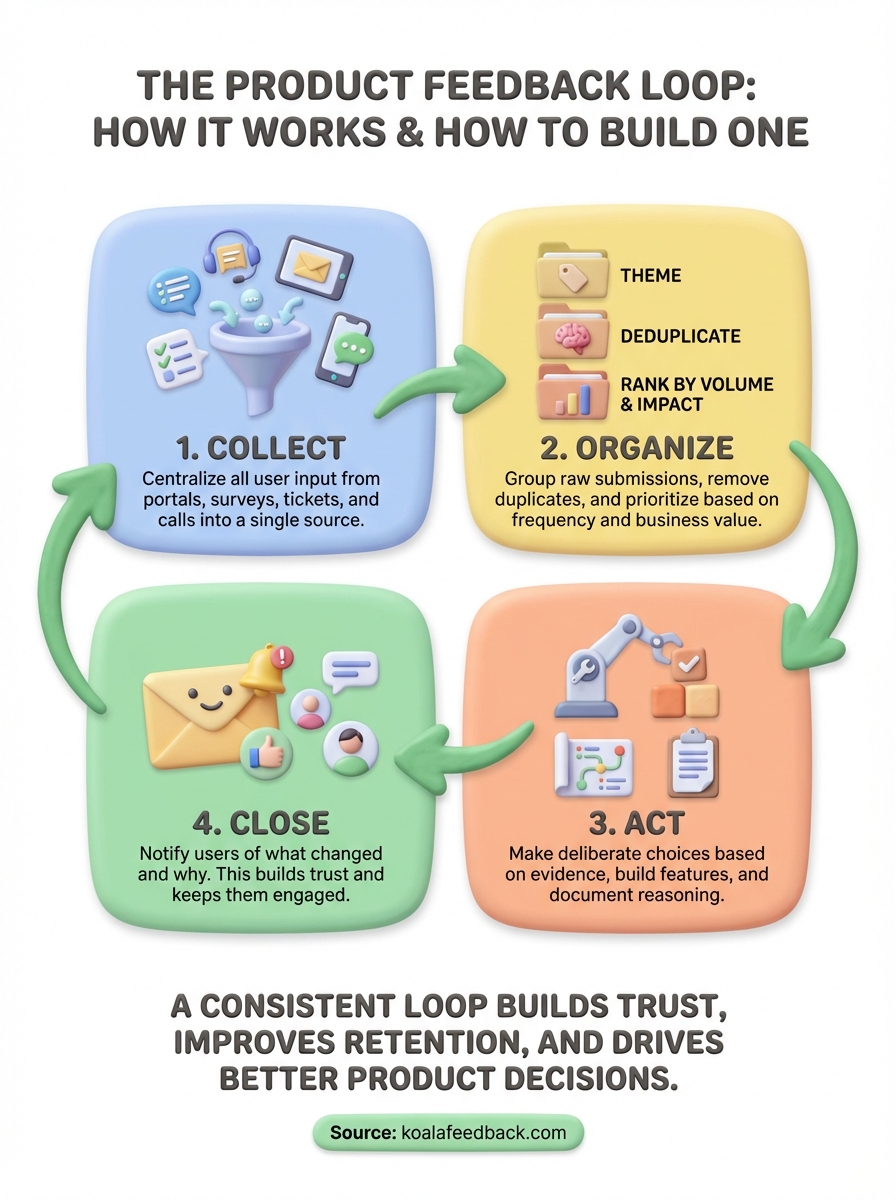

Every product feedback loop runs through four stages: collect, organize, act, and close. These stages work in sequence, and each one depends on the previous. Skip a stage or let one get sloppy, and the whole loop breaks down. Understanding what happens at each stage helps you spot where your current process leaks and where to focus your effort first.

| Stage | What happens |
|---|---|
| Collect | Users submit feedback through portals, surveys, support tickets, or sales calls |
| Organize | Feedback gets categorized, deduplicated, and ranked by volume and impact |
| Act | Your team builds, adjusts, or deprioritizes based on the organized input |
| Close | You notify users of what changed and why, then restart the cycle |

Collecting feedback means setting up consistent channels so users can submit input without friction. The channel matters less than the consistency: a single feedback portal works better than five scattered tools because all input lands in one place where your team can actually review it. Without a central collection point, feedback gets lost across inboxes and Slack threads before anyone acts on it.

Organizing that input is where most teams lose time. Raw submissions need to be grouped by theme, deduplicated, and ranked so your team isn't reviewing the same request fifteen times in different forms. When you organize well, patterns emerge fast: the top five requests by volume usually tell you more about product direction than any quarterly survey.

Once you've organized your input, your team makes prioritization decisions based on what users need most, what aligns with your product strategy, and what's technically feasible. Acting doesn't mean building every request. It means making deliberate choices with real evidence, then documenting why you chose what you chose.

Closing the loop is what separates a real feedback process from a suggestion box that nobody reads.

Closing means going back to the users who submitted feedback and telling them what happened. You ship a feature and notify the people who asked for it. You pass on a request and explain your reasoning. That final step is what builds the trust that keeps users engaged in the next cycle.

Building a product feedback loop that holds up over time doesn't require complex tooling or a large team. What it requires is a clear sequence of steps that everyone on your team follows consistently. Setting up the process deliberately from the start saves you from patching gaps later when feedback volume grows and things get harder to manage.

Your first step is to pick one primary place where feedback lands. That might be a dedicated feedback portal, an in-app prompt, or a lightweight form linked from your product. The specific tool matters less than the principle: all submissions go to the same location so nothing gets lost across email threads, Slack channels, or scattered spreadsheets.

Fragmented collection is the most common reason feedback loops break down before they start.

Once you have a single channel in place, make it easy to find. Link to it from your product dashboard, your onboarding emails, and your support pages. The lower the friction, the more input you receive from users who actually engage with your product day to day.

After you have submissions coming in, you need a consistent review schedule. That could be weekly for an early-stage team or every two weeks once volume stabilizes. During each review, group similar requests together, mark duplicates, and assign each item a rough category tied to your product areas.

This routine keeps your backlog from turning into a wall of unstructured text that nobody wants to sort through. When feedback is grouped and labeled, patterns surface quickly and prioritization decisions get faster and easier to defend.
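If your submissions live in a spreadsheet or an export, the review routine above can be roughed out in a few lines of Python. This is a minimal sketch, not a prescribed method: the `submissions` data, the category names, and the `group_requests` helper are all hypothetical, and it assumes that two requests count as duplicates when their category and whitespace-normalized, lowercased text match. Real duplicates are usually fuzzier than that and still need a human pass.

```python
from collections import defaultdict

# Hypothetical submissions: (user_id, category, request text).
submissions = [
    ("u1", "reporting", "Export to CSV"),
    ("u2", "reporting", "export to  csv "),
    ("u1", "reporting", "Export to CSV"),  # same user repeating a request
    ("u3", "billing", "Annual invoices"),
]

def group_requests(items):
    """Group submissions by (category, normalized text) and collect unique requesters."""
    groups = defaultdict(set)
    for user_id, category, text in items:
        normalized = " ".join(text.lower().split())  # assumed duplicate rule
        groups[(category, normalized)].add(user_id)
    return groups

grouped = group_requests(submissions)

# Rank groups by the number of unique users who raised them.
ranked = sorted(grouped.items(), key=lambda kv: len(kv[1]), reverse=True)
for (category, text), users in ranked:
    print(f"{category}: {text} ({len(users)} unique users)")
```

Counting unique users rather than raw submissions keeps one enthusiastic requester from inflating a theme.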

Every step in your process needs a named owner, not a team or a department. Someone owns collection setup, someone owns the weekly review, and someone owns communicating decisions back to users. When ownership is clear, steps don't get skipped because everyone assumed someone else handled it.

Documenting these assignments somewhere shared, even a simple table in your team wiki, removes ambiguity when someone is out or a new team member joins. A written process is a process that actually runs.

Once your collection and review routine is in place, the next challenge is deciding what to build first. A healthy product feedback loop requires a clear method for turning organized input into a ranked list of work, not just a pile of categorized requests with no clear order.

Frequency is a strong signal, but it's not the only one. A request that shows up fifty times from free users carries different weight than the same request from ten enterprise accounts that drive most of your revenue. When you rank requests, layer business impact on top of raw volume so the items that rise to the top reflect both user demand and strategic value.

A feature that ten high-value customers ask for often outranks one that fifty low-engagement users mention once.

Start with a simple scoring approach: assign each request a volume score based on the number of unique users who raised it, then add an impact score based on the user segment, revenue influence, or alignment with your current product goals. That combination gives your team a defensible ranking that's easy to explain in planning meetings.
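One way to sketch that scoring approach in Python is shown here. Everything specific in it is an assumption for illustration: the `Request` fields, the 0 to 5 impact rating, and the volume thresholds are made up, so tune them to your own user base before relying on the ranking.

```python
from dataclasses import dataclass

@dataclass
class Request:
    title: str
    unique_users: int  # number of unique users who raised the request
    impact: int        # assumed 0-5 rating for segment, revenue, or goal fit

# Assumed thresholds that bucket raw counts onto a 1-5 scale,
# so volume and impact carry comparable weight.
VOLUME_THRESHOLDS = [1, 5, 15, 40, 100]

def volume_score(unique_users: int) -> int:
    """Return how many thresholds the unique-user count meets or exceeds."""
    return sum(1 for t in VOLUME_THRESHOLDS if unique_users >= t)

def total_score(request: Request) -> int:
    """Volume score plus impact score."""
    return volume_score(request.unique_users) + request.impact

backlog = [
    Request("Bulk export", unique_users=50, impact=1),
    Request("Single sign-on", unique_users=10, impact=5),
]

for r in sorted(backlog, key=total_score, reverse=True):
    print(r.title, total_score(r))
```

With these assumed inputs, the request from ten high-impact accounts scores 7 and the one mentioned by fifty lower-impact users scores 5, which matches the ranking logic described above.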

After you ship a feature or fix, notify the users who asked for it. This step takes minutes but delivers outsized results. Users who receive a direct update that their request shipped are far more likely to stay engaged, test the new feature, and submit future feedback. Silence after a release wastes the goodwill you earned by building the thing.

Keep your release notes short and specific. Tell users what changed, link to where they can try it, and thank them for the input that shaped the decision. That message doesn't need to be long to be effective.

Shipping a feature doesn't close the loop permanently. You need to track whether the change actually solved the problem users raised. Monitor adoption rates for new features, watch support ticket volume for related issues, and check whether churn patterns shift among the segments that requested the work. If the numbers don't move, the feedback loop prompts you to dig deeper rather than assume the job is done.
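As a rough illustration of the adoption check, the sketch below measures what share of the users who requested a feature have actually used it. The user IDs and the `adoption_rate` helper are hypothetical, and it assumes you can export both lists from your own analytics.

```python
def adoption_rate(requesters: set[str], adopters: set[str]) -> float:
    """Share of requesting users who have used the shipped feature."""
    if not requesters:
        return 0.0
    return len(requesters & adopters) / len(requesters)

# Hypothetical data: who asked for the feature, and who has used it since release.
requesters = {"u1", "u2", "u3", "u4"}
adopters = {"u2", "u4", "u9"}

print(f"Adoption among requesters: {adoption_rate(requesters, adopters):.0%}")
```

A low rate among the very people who asked for the work is a signal to follow up with them before calling the request resolved.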

Seeing how a product feedback loop works in practice makes it easier to apply the concepts to your own process. The example below reflects a realistic scenario for a SaaS team, and the template that follows gives you a starting point you can adapt without building from scratch.

A small SaaS team with around 500 active users sets up a public feedback portal and links to it from their product dashboard and onboarding emails. Users submit feature requests throughout the month. At the end of each month, the product manager reviews all new submissions, groups similar requests by theme, and scores each group using a simple formula: number of unique requesters multiplied by a segment weight based on plan type.

Scoring by segment weight keeps enterprise requests from getting buried under a flood of free-tier submissions that don't reflect your revenue base.

The top three items by score move into the next sprint planning session for review. After each release, the product manager sends a direct update to every user who submitted or upvoted the relevant request. Users who never receive a response gradually stop submitting. Users who receive updates tend to submit again.
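The scoring step in this example can be sketched in Python as follows. The plan names, the weights in `SEGMENT_WEIGHTS`, and the request themes are invented for illustration. The sketch also makes one assumption the example leaves open: when a theme has requesters on several plans, each plan's unique-requester count is multiplied by that plan's weight and the results are summed.

```python
# Assumed segment weights by plan type; pick values that reflect your revenue mix.
SEGMENT_WEIGHTS = {"free": 1, "pro": 3, "enterprise": 10}

# Hypothetical themes with unique requesters counted per plan.
groups = {
    "Dark mode": {"free": 40},
    "Single sign-on": {"enterprise": 6},
    "CSV export": {"free": 10, "pro": 8},
    "API webhooks": {"pro": 5, "enterprise": 2},
}

def group_score(requesters_by_plan: dict[str, int]) -> int:
    """Sum of unique requesters times segment weight across plans."""
    return sum(
        count * SEGMENT_WEIGHTS[plan]
        for plan, count in requesters_by_plan.items()
    )

scores = {theme: group_score(plans) for theme, plans in groups.items()}

# The three highest-scoring themes go to sprint planning for review.
top_three = sorted(scores, key=scores.get, reverse=True)[:3]
print(top_three)
```

Here six enterprise requesters (score 60) outrank forty free-tier requesters (score 40), which is the effect the segment weight is meant to produce.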

When you ship a feature that came from user input, a short direct message does more than a generic changelog post. Here's a reusable template your team can adapt:

Subject: [Feature name] is live, and it started with your feedback

Hi [Name],

You submitted a request for [brief description of the feature]. We built it. [Feature name] is now live, and you can find it at [location in product].

Your input shaped this decision directly. If you run into questions or have follow-up thoughts, reply here or visit [feedback portal link].

Thanks for taking the time to share what you needed.

[Your name]

This message takes under two minutes to send and closes the loop in a way users remember. Keep it short and specific, and send it as close to the release date as possible.
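If you send these updates to more than a handful of users, you can fill in the template programmatically. The sketch below uses Python's standard `string.Template`. The recipient list, the feature details, and the `https://example.com/feedback` URL are placeholders, and actually sending the message is left to whatever email tool you already use.

```python
from string import Template

# The template from above, with $placeholders for the bracketed fields.
MESSAGE = Template(
    "Subject: $feature is live, and it started with your feedback\n\n"
    "Hi $name,\n\n"
    "You submitted a request for $description. We built it. "
    "$feature is now live, and you can find it at $location.\n\n"
    "Your input shaped this decision directly. If you run into questions "
    "or have follow-up thoughts, reply here or visit $portal_url.\n\n"
    "Thanks for taking the time to share what you needed.\n\n"
    "$sender"
)

# Hypothetical recipients: users who submitted or upvoted the request.
recipients = ["Dana", "Luis"]

messages = [
    MESSAGE.substitute(
        name=name,
        feature="CSV export",
        description="exporting reports as CSV files",
        location="Reports > Export",
        portal_url="https://example.com/feedback",  # placeholder URL
        sender="Sam",
    )
    for name in recipients
]

print(messages[0])
```

`substitute` raises a `KeyError` if any placeholder is left unfilled, so a half-finished message fails loudly instead of reaching a user.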

A product feedback loop works when all four stages run in sequence: collect, organize, act, and close. Skip any one of them and the process breaks down. Users stop submitting input, your team loses signal, and product decisions drift back toward guesswork instead of data.

The steps in this article give you a complete framework to follow. Set up a single collection point, build a consistent review routine, assign clear ownership, rank requests by volume and impact, ship updates, and tell users what changed. Each step reinforces the next, and the whole system grows stronger the longer you run it consistently.

Starting this process doesn't require months of setup or a large team. You can get the basics running this week. Start your product feedback loop with Koala Feedback and give your users a clear place to submit input while giving your team the structure to act on it every sprint.

Start today and have your feedback portal up and running in minutes.