Your users are telling you exactly what they want. The question is: are you listening in a way that actually moves the needle? A solid feedback management process transforms scattered opinions into a structured system that drives real product decisions. Without it, you're guessing, and guessing rarely builds products people love.

This guide breaks down the complete framework for collecting, organizing, and acting on customer feedback. You'll learn how to capture feedback from multiple channels, prioritize what matters most, and close the loop with users so they know their voice counts. Each step builds on the last, giving you a repeatable system instead of ad-hoc chaos.

At Koala Feedback, we've built our entire platform around these principles, helping product teams centralize feedback, prioritize features based on real demand, and share transparent roadmaps. Whether you're using a dedicated tool or starting from scratch, this framework will show you exactly how to turn user input into your competitive advantage.

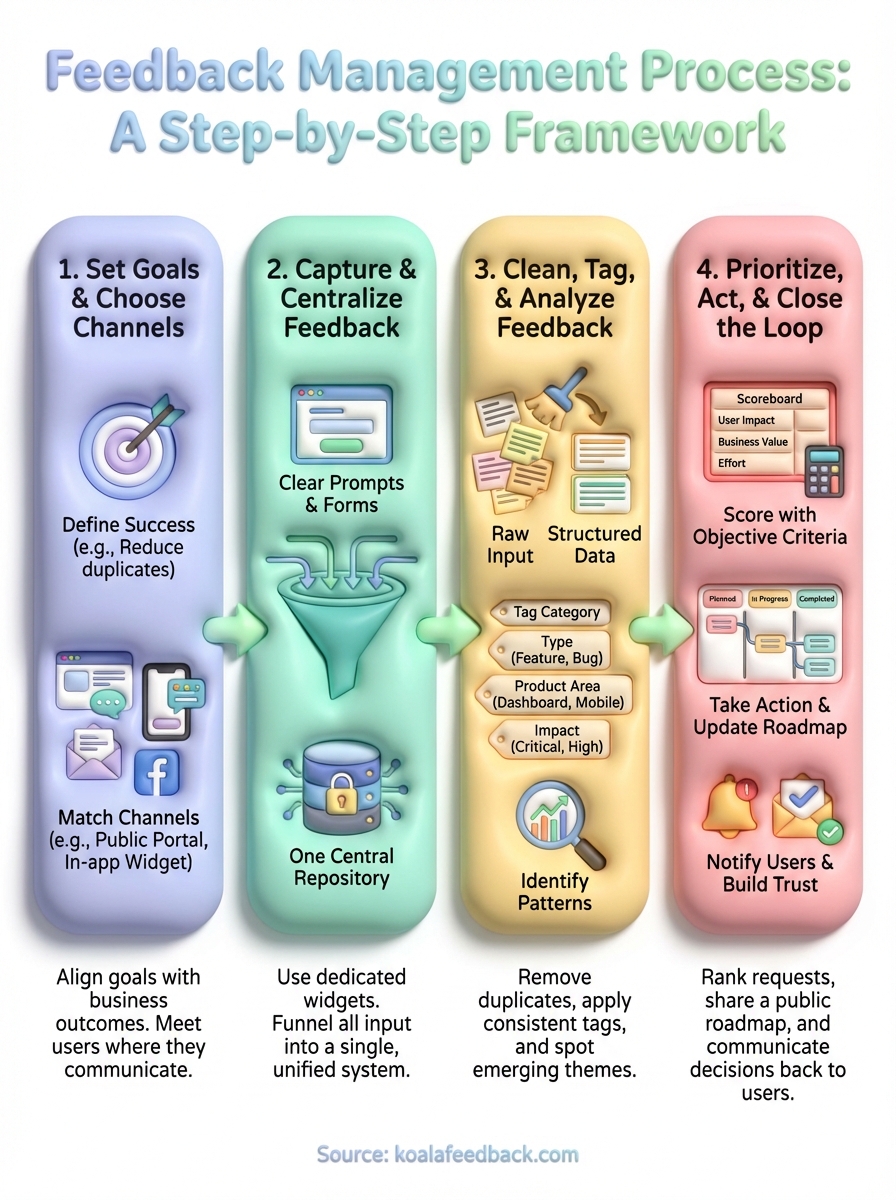

A complete feedback management process covers four connected stages: collection, organization, action, and communication. You need a system that handles each stage deliberately, not just a scattered approach where feedback sits in different tools with no clear path forward. Think of it as a pipeline that transforms raw input into product improvements your users can see and feel.

Your process starts with collection across multiple touchpoints. This means capturing feedback from support tickets, in-app widgets, surveys, social media, sales calls, and anywhere else users share their thoughts. Each channel gives you different types of insights, from feature requests to bug reports to usability friction points.

Next comes organization and analysis. You consolidate feedback into a central location, remove duplicates, tag submissions by theme or feature area, and identify patterns. This stage answers questions like: What are users asking for most? Which problems impact the largest segment? Where's the biggest gap between expectations and reality?

The third stage is prioritization and action. You decide which feedback to address based on business impact, effort required, strategic alignment, and user demand. Then you actually build, fix, or improve something. Without this stage, you're just collecting data that goes nowhere.

Finally, you close the loop by communicating back to users. This means updating them on what you built, explaining decisions, and showing that their input created real change. Closing the loop transforms feedback from a one-way street into a conversation that builds trust.

A feedback management process only works when every stage connects to the next, turning user input into visible product evolution.

You need dedicated channels where users can easily submit feedback. These might include a public feedback portal, email addresses, in-app widgets, or embedded forms. The key is making submission frictionless and obvious, not buried three clicks deep in your help documentation.

Centralized storage comes next. Whether you use a specialized feedback tool or adapt existing systems, you need one place where all feedback lives. Spreadsheets can work for early-stage companies, but they break down fast as volume grows. Your storage system should let you tag, search, and filter submissions without manual gymnastics.

You also need clear ownership and workflows. Someone on your team must be responsible for reviewing feedback regularly, routing items to the right people, and keeping the pipeline moving. Define workflows for common scenarios: What happens when someone submits a feature request? Who reviews it? How does it get from "submitted" to "prioritized" to "built"?
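One way to make that workflow explicit is to write down which status changes are allowed. The sketch below is illustrative only: the "submitted", "prioritized", and "built" stages come from the questions above, while the "declined" status and the `advance` helper are assumptions added for the example.

```python
# Allowed moves between workflow stages. "declined" is an assumed extra
# status for requests the team decides not to pursue.
ALLOWED_TRANSITIONS = {
    "submitted": {"prioritized", "declined"},
    "prioritized": {"built", "declined"},
    "built": set(),
    "declined": set(),
}


def advance(current: str, target: str) -> str:
    """Return the new status, or raise if the workflow does not allow the move."""
    if target not in ALLOWED_TRANSITIONS.get(current, set()):
        raise ValueError(f"cannot move from {current!r} to {target!r}")
    return target


status = advance("submitted", "prioritized")
status = advance(status, "built")
```

Writing the transitions down this way forces the team to answer who moves an item forward and when, instead of leaving it implicit.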

A feedback management process runs continuously, not as a quarterly exercise you dust off when convenient. You're constantly collecting new input, analyzing emerging patterns, and adjusting priorities based on what you learn. This ongoing rhythm ensures you stay aligned with evolving user needs instead of building from outdated assumptions.

The process itself should improve over time. You'll discover which channels generate the most actionable feedback, which questions surface the best insights, and how to communicate decisions more effectively. Treat your feedback system like a product within your product, iterating based on what works and what creates friction. When users see their feedback consistently leading to real changes, they'll engage more deeply, giving you even better signal to work with.

Your feedback management process starts with clarity about what you're trying to achieve and where you'll gather input. Without defined goals, you'll collect feedback aimlessly, chasing every suggestion without strategic direction. Channel selection matters just as much because your users live in different spaces, and you need to meet them where they already communicate.

You need specific, measurable goals that guide your entire feedback management process. These goals should tie directly to business outcomes and product improvements you want to drive. For example, you might aim to reduce feature request duplicates by 40%, increase user engagement in feedback submissions by 25%, or cut the average time from feedback collection to implementation decision from two weeks to three days.

Write down your goals using clear metrics. Ask yourself: What percentage of users should submit feedback monthly? How many feature requests should you implement per quarter? What response time makes users feel heard? Goals like "improve product quality" are too vague. Goals like "implement the top 3 user-requested features each quarter based on voting data" give you something concrete to work toward.

Clear goals transform feedback collection from a feel-good exercise into a strategic advantage that drives measurable product improvement.

Choose feedback channels based on where your users already spend time and how they prefer to communicate. B2B SaaS users might expect a dedicated feedback portal or in-app widget, while consumer app users might respond better to quick surveys or social media listening. Technical audiences often appreciate public roadmaps where they can vote on features, while less technical users might prefer simple email forms.

Start with three to five channels maximum, combining sources that complement each other.

Each channel serves a different purpose. Surveys capture structured data at scale. Support tickets surface urgent problems. User interviews reveal the "why" behind requests. Social listening catches sentiment you wouldn't see in formal channels. The key is balancing coverage with your team's capacity to actually monitor and process submissions from each source.

You need capture mechanisms in place across every touchpoint where users interact with your product, and you need one central location where all that feedback lands. This step transforms scattered input from emails, support tickets, social mentions, and direct conversations into a unified system you can actually manage. Without centralization, your feedback management process falls apart because your team wastes time hunting through different tools instead of analyzing patterns.

Install feedback widgets directly in your product where users experience friction or complete key actions. Place a feedback button in your navigation menu, on error pages, and at the end of important workflows. Each capture point should ask specific questions that drive actionable responses, not vague prompts like "How are we doing?"

Structure your feedback forms with these elements:

Keep forms short. You'll get higher submission rates with three fields than with ten. Users who want to elaborate will expand on their idea naturally, while those with quick suggestions can submit in seconds. Test your forms by submitting feedback yourself and timing how long it takes.

The easier you make feedback submission, the more signal you'll collect from users who would otherwise stay silent.

Create a centralized repository where feedback from all channels automatically flows together. This might be a dedicated feedback management tool, a customized database, or even a structured spreadsheet for early-stage products. The critical requirement is that support tickets, email submissions, widget responses, and manually logged conversations all end up in the same place with consistent formatting.

Set up integrations or workflows that route feedback automatically. When someone emails your dedicated feedback address, that message should create an entry in your central system. Support ticket systems should tag feedback-related tickets and sync them to your repository. Social media mentions often require manual logging, but you can batch-process these weekly instead of trying to capture every tweet in real time.
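As a rough illustration of "consistent formatting," the sketch below converts two kinds of input into one shared record type. Everything here is hypothetical: the `FeedbackEntry` schema, the shape of the email and ticket dictionaries, and the in-memory `repository` list stand in for whatever tool or database you actually use.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class FeedbackEntry:
    """One row in the central repository (hypothetical schema)."""
    source: str
    author: str
    text: str
    received_at: datetime
    tags: list[str] = field(default_factory=list)


def from_email(message: dict) -> FeedbackEntry:
    # Assumes a parsed email shaped like {"from": ..., "subject": ..., "body": ...}.
    text = f"{message['subject']}: {message['body']}".strip()
    return FeedbackEntry("email", message["from"], text, datetime.now(timezone.utc))


def from_support_ticket(ticket: dict) -> FeedbackEntry:
    # Assumes a ticket export shaped like
    # {"requester": ..., "description": ..., "labels": [...]}.
    return FeedbackEntry(
        "support",
        ticket["requester"],
        ticket["description"].strip(),
        datetime.now(timezone.utc),
        tags=list(ticket.get("labels", [])),
    )


# A plain list stands in for the central system.
repository: list[FeedbackEntry] = []
repository.append(from_email(
    {"from": "ana@example.com", "subject": "Export", "body": "Please add CSV export"}
))
repository.append(from_support_ticket(
    {"requester": "li@example.com", "description": " Dashboard loads slowly ", "labels": ["performance"]}
))
```

Each new channel then needs only one small adapter function, and everything downstream works against a single record shape.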

Assign one person on your team to own this consolidation process. They verify that new submissions appear correctly, catch duplicates early, and ensure nothing slips through the cracks between systems. Centralization only works when someone monitors the pipeline.

Raw feedback arrives messy. Users submit duplicate requests with different wording, include unclear descriptions, and mix multiple issues into single submissions. This step transforms that chaos into structured data you can actually work with. You'll deduplicate entries, apply consistent tags, and surface the patterns that reveal what your users truly need from your product.

Start by identifying feedback that describes the same request with different words. Five users might ask for "dark mode," "night theme," "dark color scheme," "black background option," and "low-light interface" when they all want the same feature. Search your centralized system for similar keywords, read through recent submissions, and merge duplicates into a single master entry that tracks all related requests.

Create standardized titles for common requests. Instead of keeping user-submitted variations, rename entries to match your internal terminology. This makes searching faster and helps you spot related feedback instantly. Track the original submission count so you know how many users requested each feature even after consolidation.
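The merge step can be sketched in a few lines. This is a minimal example under stated assumptions: the `CANONICAL_TITLES` mapping is maintained by hand and is hypothetical, and real submissions would need fuzzier matching than an exact lowercase lookup.

```python
from collections import defaultdict

# Hypothetical, hand-maintained mapping from user wording to the
# team's standardized title.
CANONICAL_TITLES = {
    "dark mode": "Dark mode",
    "night theme": "Dark mode",
    "dark color scheme": "Dark mode",
    "black background option": "Dark mode",
    "low-light interface": "Dark mode",
}


def merge_duplicates(submissions: list[str]) -> dict[str, list[str]]:
    """Group raw submissions under a standardized title, keeping every original."""
    merged: dict[str, list[str]] = defaultdict(list)
    for raw in submissions:
        key = raw.strip().lower()
        # Unknown wording stays as its own entry until someone reviews it.
        title = CANONICAL_TITLES.get(key, raw.strip())
        merged[title].append(raw)
    return dict(merged)


merged = merge_duplicates(["Dark mode", "night theme", "Low-light interface", "CSV export"])
# The request count survives consolidation because the originals are kept.
request_counts = {title: len(originals) for title, originals in merged.items()}
```

Keeping the original wording alongside the master entry preserves both the submission count and the language users actually use.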

Apply tags that categorize feedback by type, feature area, and priority level. Your tagging system should grow with your product but start simple with categories users and team members both understand. Build your taxonomy around how your product is organized, not abstract concepts that mean different things to different people.

Use this tagging framework as a starting point:

| Tag Category | Example Tags |
|---|---|
| Type | Feature request, Bug, Usability, Performance, Documentation |
| Product Area | Dashboard, Reports, Integrations, Mobile app, Settings |
| User Segment | Free tier, Paid, Enterprise, Trial |
| Impact | Critical, High, Medium, Low |

Tag each submission with at least one label from the Type and Product Area categories. Additional tags help you filter and segment feedback later when prioritizing what to build next.
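The "at least one Type and one Product Area tag" rule is easy to check automatically. The sketch below uses the example tags from the table; the `TAXONOMY` structure and the `missing_required_tags` helper are assumptions for illustration, not part of any particular tool.

```python
# Example taxonomy, taken from the tagging framework table.
TAXONOMY = {
    "Type": {"Feature request", "Bug", "Usability", "Performance", "Documentation"},
    "Product Area": {"Dashboard", "Reports", "Integrations", "Mobile app", "Settings"},
    "User Segment": {"Free tier", "Paid", "Enterprise", "Trial"},
    "Impact": {"Critical", "High", "Medium", "Low"},
}
REQUIRED_CATEGORIES = ("Type", "Product Area")


def missing_required_tags(tags: set[str]) -> list[str]:
    """Return the required categories that have no tag on this submission."""
    return [c for c in REQUIRED_CATEGORIES if not (tags & TAXONOMY[c])]


complete = missing_required_tags({"Bug", "Dashboard", "Enterprise"})
incomplete = missing_required_tags({"Bug", "Enterprise"})
```

Here `complete` is an empty list, while `incomplete` names "Product Area" as the category still to be filled in.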

A well-tagged feedback management process turns thousands of submissions into queryable data you can slice by any dimension that matters.

Run weekly reviews where you filter feedback by tag combinations and look for emerging themes. Sort by submission date to catch new pain points before they escalate. Sort by vote count or request frequency to identify what users want most. Look for feedback that appears across multiple user segments since these requests typically signal broader impact.

Calculate metrics like requests per week by category, average time between duplicate submissions, and percentage of feedback addressing bugs versus features. These numbers reveal whether you're collecting mostly complaints or forward-looking suggestions, and they help you spot quality shifts that indicate changing user needs.
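Two of these metrics can be computed from nothing more than a submission date and a Type tag. The records below are made up for illustration, and the function names are hypothetical.

```python
from collections import Counter
from datetime import date

# Made-up sample records: (submission date, Type tag).
feedback = [
    (date(2024, 1, 1), "Bug"),
    (date(2024, 1, 3), "Feature request"),
    (date(2024, 1, 9), "Feature request"),
    (date(2024, 1, 10), "Bug"),
    (date(2024, 1, 11), "Feature request"),
]


def requests_per_week(records):
    """Count submissions per (ISO year, ISO week, type)."""
    counts = Counter()
    for submitted, kind in records:
        iso = submitted.isocalendar()
        counts[(iso[0], iso[1], kind)] += 1
    return counts


def type_share(records, kind):
    """Percentage of all records carrying the given Type tag."""
    if not records:
        return 0.0
    return 100 * sum(1 for _, k in records if k == kind) / len(records)


weekly = requests_per_week(feedback)
bug_share = type_share(feedback, "Bug")
```

With this sample, feature requests rise from one in the first ISO week of 2024 to two in the second, and bugs make up 40% of all submissions.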

You've collected and analyzed feedback, but none of that matters if you don't prioritize what to build and tell users what you decided. This step separates an effective feedback management process from one that gathers dust. You'll rank requests based on objective criteria, take action on what matters most, and communicate decisions back to everyone who contributed input.

Create a scoring framework that removes gut feelings from prioritization decisions. Assign numerical values to each feedback item based on factors like impact, effort, strategic alignment, and user demand. This approach turns subjective discussions into data-driven choices your team can defend.

Use this scoring template to evaluate each request:

| Criteria | Score (1-5) | Weight | Weighted Score |
|---|---|---|---|
| User Impact (How many users benefit?) | | 3x | |
| Business Value (Revenue/retention impact) | | 2x | |
| Strategic Fit (Aligns with roadmap?) | | 2x | |
| Implementation Effort (5=easy, 1=hard) | | 1x | |
| User Votes (Relative to average) | | 2x | |

Calculate the total weighted score for each item, then rank your backlog accordingly. Items scoring above 30 typically warrant immediate consideration, while those below 15 might wait for future releases.
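To make the arithmetic concrete, here is a small sketch of the calculation using the weights from the template. With scores of 1 to 5 and weights that sum to 10, totals range from 10 to 50. The function names, the example scores, and the middle "discuss" bucket for totals between 15 and 30 are assumptions added for illustration.

```python
# Weights from the scoring template.
WEIGHTS = {
    "user_impact": 3,
    "business_value": 2,
    "strategic_fit": 2,
    "implementation_effort": 1,  # 5 = easy, 1 = hard
    "user_votes": 2,
}


def weighted_score(scores: dict[str, int]) -> int:
    """Sum of score x weight across all criteria; each score must be 1-5."""
    for name in WEIGHTS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def bucket(total: int) -> str:
    """Apply the thresholds: above 30 acts now, below 15 waits."""
    if total > 30:
        return "consider now"
    if total < 15:
        return "defer"
    return "discuss"  # assumed label for the range the thresholds leave open


# Hypothetical scores for a single request.
dark_mode = {
    "user_impact": 4,
    "business_value": 3,
    "strategic_fit": 3,
    "implementation_effort": 2,
    "user_votes": 5,
}
total = weighted_score(dark_mode)  # 12 + 6 + 6 + 2 + 10 = 36
decision = bucket(total)
```

In this example the request scores 36 and lands in the "consider now" group, even though it is relatively hard to build, because impact and votes carry more weight than effort.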

Objective scoring frameworks counter the loudest-voice-in-the-room problem, helping you build what truly matters to your user base.

Move high-priority items into your development pipeline with clear status labels like "Planned," "In Progress," or "Completed." Assign each item to a team member and set realistic timelines. Your feedback management process breaks down when submissions sit in "under review" limbo for months without updates.

Reflect these decisions on a public roadmap that shows users what you're building and when. Link roadmap items back to the original feedback submissions so users see their specific requests moving through your pipeline. Update statuses weekly so your roadmap stays accurate.

Send direct notifications to everyone who requested or voted for features you decide to build. Tell them why you prioritized their suggestion and when they can expect it. For requests you decline, explain your reasoning honestly instead of leaving users wondering why you ignored their input.

Automate these notifications when possible. When you move an item to "Completed" status, trigger emails to all users who engaged with that request. Include release notes, screenshots, or video demos showing the finished implementation. This closes the feedback loop and proves that user input drives real product evolution.
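A status-change trigger of this kind might look like the sketch below. It is an assumption-heavy illustration: the `FeedbackItem` type, the `set_status` helper, and the `send` callback are hypothetical, and `send` stands in for whatever email service you use.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class FeedbackItem:
    title: str
    status: str = "Planned"
    followers: set[str] = field(default_factory=set)  # requesters and voters


def set_status(
    item: FeedbackItem,
    new_status: str,
    send: Callable[[str, str, str], None],
) -> int:
    """Update the status and notify followers once the item is completed.

    Returns the number of notifications sent.
    """
    item.status = new_status
    if new_status != "Completed":
        return 0
    for address in sorted(item.followers):
        send(address, f"Shipped: {item.title}", "Release notes and screenshots go here.")
    return len(item.followers)


# A list stands in for a real mail service.
outbox: list[tuple[str, str, str]] = []
item = FeedbackItem("Dark mode", followers={"ana@example.com", "li@example.com"})
set_status(item, "In Progress", lambda *msg: outbox.append(msg))
notified = set_status(item, "Completed", lambda *msg: outbox.append(msg))
```

Passing the sender in as a callback keeps the trigger logic testable without sending real email.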

Your feedback management process only delivers results when all four steps work as a connected system. You collect feedback from multiple channels, centralize it in one location, clean and analyze submissions to spot patterns, then prioritize what to build and close the loop with users. Each stage feeds the next, creating a cycle that continuously improves your product based on real user needs.

The framework works whether you're managing ten feedback submissions per month or ten thousand. Start with clear goals, choose channels where your users already communicate, and commit to regular review cadences. Tools like Koala Feedback help you automate collection, voting, and roadmap sharing so you spend less time organizing and more time building what matters.

Your users are already telling you what they need. The question is whether you're ready to listen systematically and act decisively on what they share.

Start today and have your feedback portal up and running in minutes.