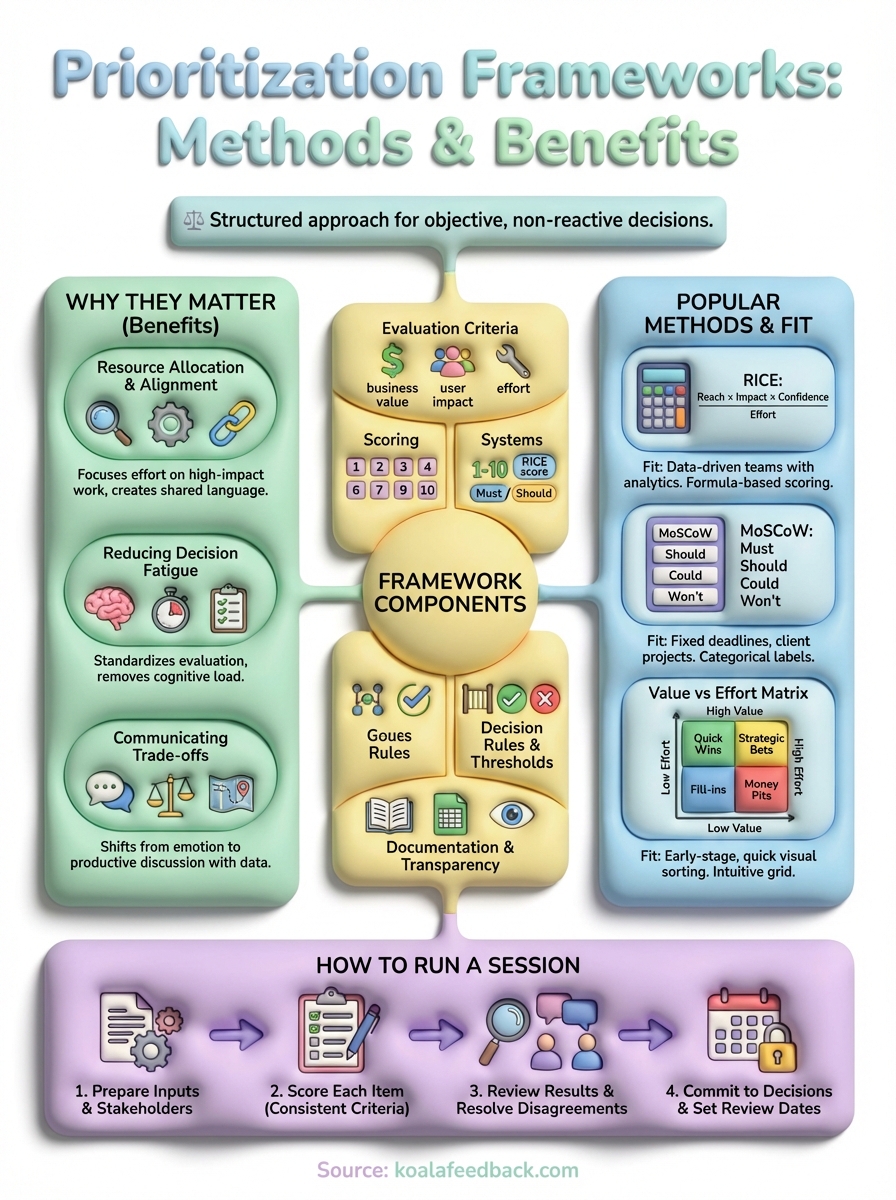

Every product team faces the same challenge: a backlog full of feature requests, bug fixes, and improvement ideas, but limited time and resources to tackle them all. Without a clear system to decide what gets built first, teams often default to whoever shouts loudest or whatever feels urgent in the moment. Understanding what a prioritization framework is gives you a structured approach to make these decisions objectively rather than reactively.

A prioritization framework helps you evaluate competing demands against consistent criteria, whether that's business impact, customer value, effort required, or strategic alignment. The result? Fewer wasted resources on low-impact work and a clearer path toward features that actually move the needle. For product managers and SaaS teams, this isn't just about organization; it's about building what matters most to your users and your business.

This article breaks down the most widely used prioritization methods, including RICE, MoSCoW, and the Value vs Effort matrix, with practical examples to help you choose the right one. At Koala Feedback, we've built our platform around this exact principle: collecting user feedback and turning it into prioritized action. When you combine a solid framework with real input from your users, you stop guessing and start building with confidence.

Without a structured approach, product decisions often become a tug-of-war between competing interests. Your sales team wants features that close deals, your support team needs bug fixes that reduce tickets, and your engineering team has technical debt they've been begging to address. Meanwhile, your CEO heard about a trendy new feature at a conference and wants it yesterday. When you lack a consistent system for evaluating these demands, you end up making reactive choices that satisfy the loudest voice rather than the most valuable opportunity.

Your development capacity is your most expensive and constrained resource. Every feature you build represents an opportunity cost: the features you didn't build. A prioritization framework forces you to quantify the value of each option against your goals, whether that's revenue growth, user retention, or market differentiation. Instead of spreading your team thin across dozens of half-baked initiatives, you concentrate effort on the work that delivers measurable impact.

Frameworks also create alignment across departments because everyone evaluates opportunities using the same criteria. Your marketing team can't argue that their feature request is more important simply because they asked first. Product managers can defend their roadmap decisions with objective data rather than gut feeling. This shared language reduces internal conflict and helps your entire organization move in one direction instead of pulling against each other.

When everyone uses the same scoring system to evaluate feature requests, stakeholders understand why certain work gets prioritized over their pet projects.

Product managers face dozens of prioritization decisions every week. Which bug fix gets addressed first? Should you invest in a new feature or improve an existing one? Does this customer request align with your product vision? Without a framework, each decision requires fresh analysis and drains your mental energy. You start relying on shortcuts like recency bias (prioritizing whatever was requested most recently) or authority bias (building whatever the highest-paid person suggests).

A well-designed framework removes this cognitive load by standardizing your evaluation process. You score each item once using defined criteria, then your prioritization largely takes care of itself. This doesn't mean you stop thinking critically; it means you reserve your judgment for edge cases and strategic questions rather than reinventing the wheel for every backlog item. A framework is not about removing human judgment but about applying that judgment consistently across all opportunities.

Perhaps the most underrated benefit of using a framework is how it transforms your stakeholder conversations. Instead of saying "we don't have time for that right now," you can show exactly where someone's request falls in your prioritization matrix. You demonstrate that their feature scored a 12 out of 100 while the top three items scored above 80. This shifts the conversation from emotional appeals to productive discussions about how to increase the score or what they're willing to trade for faster delivery.

Frameworks also help you explain why you're not building obvious features. Maybe a feature has massive potential reach but requires six months of engineering work, making it score lower than three smaller features that collectively deliver more value. When stakeholders see the math, they understand the logic. You spend less time defending your roadmap and more time executing against it. This transparency builds trust because people recognize you're making thoughtful decisions based on consistent principles, not personal preference or political pressure.

At its core, a prioritization framework consists of defined criteria that you apply consistently to every item in your backlog. These criteria vary based on the framework you choose, but they all serve the same purpose: transforming subjective opinions into measurable scores. When someone asks what a prioritization framework is made of, the answer includes both the evaluation structure and the process for applying it.

Your framework needs specific factors to assess each opportunity. Common criteria include business value (revenue impact, strategic alignment, competitive advantage), user impact (number of users affected, severity of the problem solved), implementation cost (engineering time, technical complexity, dependencies), and confidence level (how certain you are about your estimates). The key is selecting criteria that align with your goals rather than copying someone else's scorecard.

Most frameworks assign numerical scores to each criterion. You might rate business value from 1 to 10, estimate effort in story points or weeks, and calculate a final priority score using a formula. Some frameworks like MoSCoW use categorical labels (Must Have, Should Have, Could Have, Won't Have) instead of numbers. Either approach works as long as you apply the same logic to every item you evaluate.
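To make the numeric approach concrete, here is a minimal sketch of a weighted score. The criteria names, the weights, and the choice to divide by effort are all assumptions made for illustration, not a standard formula; swap in whatever criteria match your goals.

```python
# Hypothetical weights; they should sum to 1 and reflect your own goals.
WEIGHTS = {"business_value": 0.5, "user_impact": 0.3, "confidence": 0.2}


def priority_score(ratings: dict[str, float], effort_weeks: float) -> float:
    """Weighted sum of 1-10 ratings, divided by estimated effort in weeks."""
    if effort_weeks <= 0:
        raise ValueError("effort_weeks must be positive")
    weighted = sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)
    return weighted / effort_weeks


# Hypothetical item: strong business value, moderate user impact, 2 weeks of work.
example = priority_score(
    {"business_value": 8, "user_impact": 6, "confidence": 5},
    effort_weeks=2,
)
print(round(example, 2))
```

Whatever formula you settle on, the point is that the same function scores every item, so two people rating the same request arrive at the same number.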

The best frameworks strike a balance between being simple enough for quick decisions and detailed enough to capture what actually matters to your business.

Beyond scoring, your framework needs explicit rules for how you act on those scores. If you're using RICE and a feature scores above 50, does it automatically get added to your next sprint? Do you review all items quarterly and re-prioritize based on changing business conditions? These decision rules prevent your framework from becoming just another spreadsheet that nobody follows.

Thresholds create accountability because everyone knows when something qualifies for immediate attention versus the long-term backlog. You might establish that critical bugs bypass the normal prioritization process, or that features requiring more than three months of development need executive approval. These guardrails ensure your framework guides daily decisions without requiring constant recalculation.
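Decision rules like these are easy to write down explicitly. The sketch below is one possible encoding, assuming the example thresholds mentioned above (a score cutoff of 50, a three-month approval limit, and a bypass for critical bugs); the function name and queue labels are hypothetical.

```python
# Hypothetical thresholds that mirror the examples in the text;
# they are illustrations, not recommendations.
SPRINT_SCORE_THRESHOLD = 50   # scores above this go to the next sprint
EXEC_APPROVAL_MONTHS = 3      # longer efforts need executive sign-off


def route(score: float, effort_months: float, critical_bug: bool = False) -> str:
    """Return the queue an item lands in under the rules above."""
    if critical_bug:
        return "fix now"  # critical bugs bypass normal prioritization
    if effort_months > EXEC_APPROVAL_MONTHS:
        return "needs executive approval"
    if score > SPRINT_SCORE_THRESHOLD:
        return "next sprint"
    return "backlog"


print(route(score=62, effort_months=1))
print(route(score=88, effort_months=4))
print(route(score=5, effort_months=0.1, critical_bug=True))
```

The order of the checks is itself a policy decision: here a large effort triggers approval even when the score is high, which may or may not match how your organization works.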

Your framework only works if people can reference and understand it. This means documenting your scoring criteria, sharing the rationale behind your chosen weights, and making scores visible to stakeholders. Many teams maintain a prioritization matrix or spreadsheet that anyone can access to see where their feature request ranks and why.

Transparency also includes communicating how often you revisit priorities. If you lock your roadmap for six months, stakeholders know when they'll get another chance to make their case. Documentation prevents the framework from becoming a black box where features disappear without explanation.

You need a prioritization framework when the number of opportunities exceeds your capacity to execute them all. This happens in nearly every product organization, but the urgency becomes critical at specific moments in your development cycle. Recognizing these trigger points helps you implement the right framework before decision-making becomes chaotic rather than strategic.

Roadmap planning sessions are the classic moment to apply a prioritization framework. You gather input from sales, marketing, customer success, and engineering, then face dozens of competing requests that all seem important. A framework transforms this overwhelming list into a ranked backlog where your top priorities become obvious. You score each potential feature, calculate your team's capacity, and commit to what actually fits in the quarter.

These planning cycles also give you a natural checkpoint to revisit your criteria. What mattered most last quarter might shift based on market changes, new competitor moves, or evolving business goals. You can adjust your framework's weights to reflect these strategic shifts before committing resources.

Without a framework during planning, you risk filling your roadmap with projects that feel urgent but deliver minimal impact.

If your backlog has grown beyond 50 items, you've hit the point where manual prioritization breaks down. You can't keep everything in your head, and your team wastes time debating which bug to fix next or whether this customer request matters more than that internal tool improvement. This is when a prioritization framework becomes essential: it gives you a repeatable system for evaluating new items as they arrive and maintaining order in your backlog.

Many teams implement frameworks after a failed sprint or release where they realize they built the wrong things. The post-mortem reveals that nobody actually understood why certain features were prioritized over others. A framework prevents this by documenting the logic behind every decision.

You notice prioritization frameworks become necessary when stakeholders start bypassing the product team to lobby executives directly. Someone's feature request got declined, so they escalate to the CEO who adds it to the roadmap without understanding the trade-offs. A transparent framework gives you objective evidence to share with leadership. You can show exactly how that executive request scores compared to your current commitments and let them decide what gets bumped, not whether their pet project gets built.

Frameworks also help during acquisition integrations, new executive hires, or organizational restructures when your decision-making authority gets challenged. You prove your roadmap isn't based on personal preference but on consistent evaluation against business goals.

Different frameworks suit different team sizes, project types, and decision-making cultures. Some methods work best when you have quantitative data to support your scoring, while others shine in fast-moving environments where you need quick categorical decisions. The framework you choose should match your team's maturity level with data analysis and the complexity of trade-offs you're evaluating.

RICE gives you a formula-based approach where you multiply Reach (number of users affected), Impact (degree of effect on each user), and Confidence (certainty in your estimates), then divide by Effort (time to build). This produces a numerical score that lets you rank features objectively. You might score a feature as reaching 5,000 users with high impact (3 out of 3), medium confidence (70%), requiring 4 weeks of effort, giving you a RICE score of (5,000 × 3 × 0.7) ÷ 4 = 2,625.
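The formula above translates directly into a few lines of code. This is a minimal sketch: the function name and argument conventions (confidence as a fraction, effort in weeks) are assumptions chosen for the example.

```python
def rice_score(reach: float, impact: float, confidence: float, effort: float) -> float:
    """RICE = (Reach * Impact * Confidence) / Effort.

    confidence is a fraction (0.7 for 70%); effort can use any time unit,
    as long as it is the same unit for every item you compare.
    """
    if effort <= 0:
        raise ValueError("effort must be positive")
    return reach * impact * confidence / effort


# Inputs from the example above: 5,000 users, impact 3, 70% confidence, 4 weeks.
score = rice_score(reach=5000, impact=3, confidence=0.7, effort=4)
print(score)
```

The absolute number means little on its own. It only becomes useful when every item is scored with the same units, so the scores can be ranked against each other.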

This framework fits product teams with usage analytics and the discipline to estimate effort accurately. You need historical data to predict reach and the bandwidth to score every backlog item consistently. RICE works particularly well for SaaS products where you can measure user behavior and validate your impact assumptions after launch.

MoSCoW sorts your backlog into four categories based on criticality rather than calculating scores. Must Have items are non-negotiable for launch, Should Have features are important but not deal-breakers, Could Have items are nice additions if time permits, and Won't Have explicitly documents what you're excluding. This approach forces hard conversations about what's truly essential versus what's merely desired.

MoSCoW excels in fixed-deadline scenarios like contract deliverables or regulatory compliance projects where you need absolute clarity on minimum viable scope.

Teams working on client projects or enterprise implementations benefit most from MoSCoW because stakeholders understand categorical labels more easily than numerical rankings. It also works when your team lacks the data maturity to estimate reach and impact with confidence.
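Because MoSCoW is categorical, the bookkeeping is just grouping. The sketch below shows one way to keep the four buckets in a fixed Must-to-Won't order; the backlog items and the function name are hypothetical.

```python
from collections import defaultdict
from enum import Enum


class MoSCoW(Enum):
    MUST = "Must Have"
    SHOULD = "Should Have"
    COULD = "Could Have"
    WONT = "Won't Have"


def group_by_category(items: list[tuple[str, MoSCoW]]) -> dict[str, list[str]]:
    """Group item titles under their MoSCoW label, in Must-to-Won't order."""
    buckets: dict[MoSCoW, list[str]] = defaultdict(list)
    for title, category in items:
        buckets[category].append(title)
    # Iterating the Enum preserves definition order and keeps empty buckets visible.
    return {category.value: buckets[category] for category in MoSCoW}


# Hypothetical backlog for a fixed-deadline release.
scope = group_by_category([
    ("Audit log export", MoSCoW.MUST),
    ("Dark mode", MoSCoW.COULD),
    ("SSO login", MoSCoW.MUST),
    ("Custom themes", MoSCoW.WONT),
])
for label, titles in scope.items():
    print(f"{label}: {titles}")
```

Keeping the empty "Should Have" bucket in the output is deliberate: an empty category is often a sign that the team has pushed too much into Must Have.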

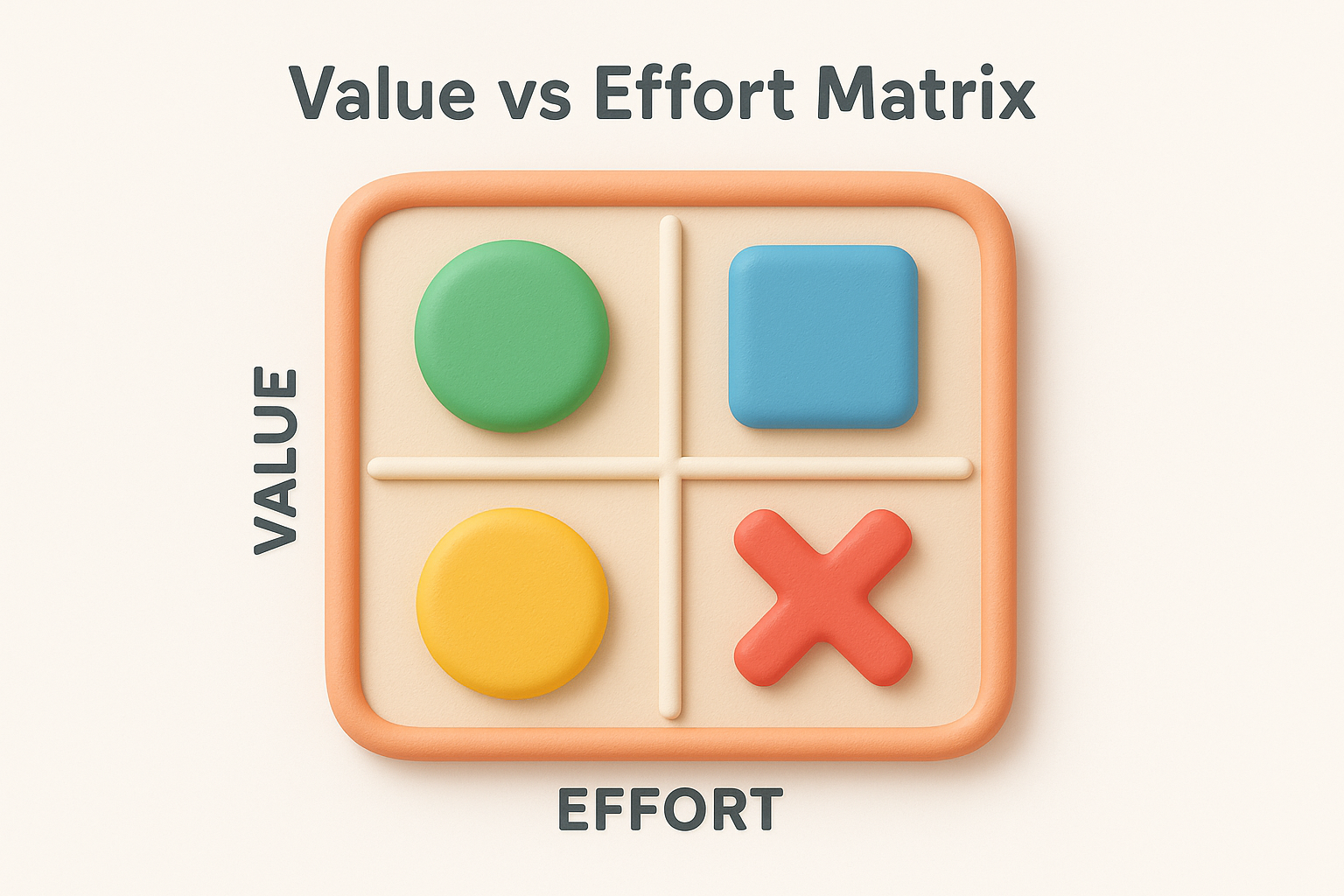

The Value vs Effort matrix is a simple two-by-two grid that plots features based on their business value (high or low) and implementation effort (high or low). Quick wins land in the high-value, low-effort quadrant and get built first. Strategic bets offer high value but require significant effort. Fill-ins are easy but low-impact. Money pits consume resources without delivering meaningful value.

The matrix fits early-stage startups and teams new to structured prioritization because it's immediately intuitive. You don't need sophisticated estimation processes, just enough judgment to place items into the right quadrant. Many teams use this for initial sorting before applying more detailed frameworks like RICE to items within each quadrant.
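The four quadrants described above reduce to two yes/no judgments per item. Here is a small sketch of that mapping; the backlog entries are made up for illustration.

```python
def quadrant(high_value: bool, high_effort: bool) -> str:
    """Map a value/effort judgment to the quadrant names used above."""
    if high_value:
        return "strategic bet" if high_effort else "quick win"
    return "money pit" if high_effort else "fill-in"


# Hypothetical backlog: (title, high_value, high_effort).
backlog = [
    ("Bulk CSV import", True, False),
    ("Rebuild billing engine", True, True),
    ("Rename settings tab", False, False),
    ("Custom report builder nobody requested", False, True),
]

placed = {title: quadrant(value, effort) for title, value, effort in backlog}
for title, name in placed.items():
    print(f"{name:>13}: {title}")
```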

Choosing the right prioritization method depends less on which framework sounds most sophisticated and more on your team's actual working conditions. You need to match the framework's requirements to your organization's data maturity, decision-making culture, and the complexity of trade-offs you face. A framework that works brilliantly for a 200-person product team with robust analytics will overwhelm a five-person startup still figuring out product-market fit.

Start by assessing what information you can reliably measure and estimate. RICE requires you to quantify reach, score impact on a scale, and estimate effort in time units. If you lack usage analytics or your team consistently misestimates engineering time, RICE will produce misleading scores that hurt more than they help. You need at least six months of historical data to estimate with confidence.

Teams without mature analytics should begin with simpler approaches like the Value vs Effort matrix or MoSCoW. These frameworks rely on qualitative judgment rather than precise measurements. You can implement them immediately and upgrade to more data-driven methods once you've built the instrumentation and estimation discipline.

Pick a framework you can apply consistently with your current capabilities rather than one that forces you to guess at numbers you don't understand.

Larger teams benefit from numerical scoring systems because they need objective criteria to resolve debates across departments. When you have ten stakeholders arguing about priorities, a RICE score gives you defensible logic that prevents endless discussion loops. Small teams that trust each other's judgment can move faster with categorical methods like MoSCoW that skip the calculation overhead.

Your release cadence also matters. If you ship weekly, you need a lightweight framework you can apply to dozens of small items without analysis paralysis. Monthly or quarterly releases justify more detailed scoring because each decision has bigger consequences. A prioritization framework is a tool that should accelerate your decisions, not slow them down with bureaucratic scoring exercises.

Choose frameworks based on how you need to communicate trade-offs externally. Client-facing teams working on contract deliverables find MoSCoW effective because clients immediately grasp the difference between Must Have and Should Have. Internal product teams dealing with executive stakeholders often need RICE's numerical rankings to justify why a CEO's pet feature scored lower than customer-facing improvements.

Some organizations demand transparent formulas they can audit, while others trust your product judgment and just want clear categories. Match your framework's complexity to your stakeholders' appetite for detail. You'll spend less time defending your roadmap when your prioritization method aligns with how your organization makes decisions.

Running an effective prioritization session transforms your backlog from an overwhelming list into a clear action plan. The process requires preparation, structured discussion, and decisive commitment to the results. When you understand what a prioritization framework is and how to apply it consistently, your team spends less time debating priorities and more time shipping valuable features.

Start by collecting all the items you need to prioritize before the meeting. Pull feature requests from your feedback portal, bug reports from your support queue, technical debt items from engineering, and strategic initiatives from leadership. You need everything in one place, deduplicated and briefly described, so participants understand what they're evaluating.

Identify who needs to attend based on your framework's criteria. If you're scoring business impact, invite someone from sales or marketing who understands revenue implications. For effort estimation, include technical leads who can accurately assess complexity. Keep the group small enough to make decisions quickly, typically five to eight people, but ensure you represent all necessary perspectives.

The worst prioritization sessions happen when participants see the backlog items for the first time during the meeting and waste time asking clarifying questions.

Walk through your backlog systematically, scoring each item against your framework's criteria. If you're using RICE, estimate reach based on usage data or customer segmentation, rate impact from 0.25 to 3, assign a confidence percentage, and calculate effort in person-weeks or story points. For MoSCoW, discuss which category each item belongs in based on business criticality and launch requirements.

Document your scores in a shared spreadsheet or prioritization tool where everyone can see the running calculations. This transparency prevents confusion about how final rankings emerge and lets you spot obvious errors immediately. When someone questions a score, you can revisit the specific criterion rather than rehashing the entire evaluation.

Once you've scored everything, sort your list by priority ranking and review the top items as a group. Look for results that contradict your intuition because those moments often reveal flawed assumptions in your scoring. Maybe you underestimated the effort required for a feature, or your impact rating didn't account for downstream maintenance costs.

When stakeholders disagree with rankings, ask them which specific score they want to change and why. This forces the conversation toward objective criteria rather than subjective preferences. You might decide that your initial effort estimate was too optimistic, adjust the score, and see how that affects the item's ranking.

End your session by confirming which items make your current roadmap and which stay in the backlog. Be explicit about the cutoff point based on your team's capacity. Everything above the line gets resourced; everything below waits for the next prioritization cycle.
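Drawing the cutoff line can be sketched as a walk down the ranked list until capacity runs out. The tuple layout, the item names, and the 10-week capacity below are assumptions for the example.

```python
def split_at_capacity(
    items: list[tuple[str, float, float]], capacity_weeks: float
) -> tuple[list[str], list[str]]:
    """Split (title, score, effort_weeks) items into committed and deferred.

    Items are ranked by score, highest first. The line is drawn at the first
    item that no longer fits, and everything below it is deferred.
    """
    ranked = sorted(items, key=lambda item: item[1], reverse=True)
    committed: list[str] = []
    remaining = capacity_weeks
    for index, (title, _score, effort) in enumerate(ranked):
        if effort > remaining:
            return committed, [t for t, _, _ in ranked[index:]]
        committed.append(title)
        remaining -= effort
    return committed, []


# Hypothetical scored backlog and a 10-week capacity for the cycle.
scored = [("A", 90, 4), ("C", 60, 2), ("B", 70, 5), ("D", 30, 1)]
roadmap, deferred = split_at_capacity(scored, capacity_weeks=10)
print("Roadmap:", roadmap)
print("Deferred:", deferred)
```

This sketch uses a strict line: once an item does not fit, nothing below it is pulled up, even if a smaller item would squeeze in. Some teams prefer to backfill leftover capacity with small items; if you do, make that an explicit rule so the ranking stays predictable.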

Schedule when you'll revisit these priorities, whether that's monthly sprints or quarterly planning sessions. This prevents stakeholders from constantly lobbying to change decisions because they know exactly when they'll get another opportunity to make their case.

SaaS product teams face recurring scenarios where prioritization frameworks make the difference between strategic execution and reactive chaos. These real-world examples show how different frameworks apply to situations you encounter every sprint, from feature selection to bug triage. When you see what a prioritization framework accomplishes in concrete situations, you gain confidence to adapt these methods to your own backlog challenges.

Your marketing team wants to revamp your free trial to improve conversion rates. They've identified eight potential improvements: simplified onboarding, guided product tours, email sequences, in-app notifications, template libraries, integration wizards, usage analytics dashboards, and collaborative features. You need to decide what makes the initial release versus what waits for iteration.

Apply MoSCoW to this scenario by categorizing based on conversion impact. Your Must Have items include simplified onboarding and email sequences because data shows 70% of trial users abandon during setup. Guided tours and templates become Should Have features that improve experience but don't prevent conversion. Integration wizards land in Could Have since only 30% of users need them immediately. This categorical approach gives your marketing team clear expectations about launch scope without getting lost in numerical calculations.

When you use MoSCoW for launch planning, stakeholders understand exactly which features are negotiable and which are locked in based on business criticality.

Your feedback portal contains 47 feature requests with varying levels of user demand. Some requests have 200 votes from free users, while others have 15 votes from enterprise customers paying $50,000 annually. You need to balance user volume against revenue impact when planning your next quarter.

RICE handles this complexity by weighting different factors. A feature requested by 200 free users might score 2,000 reach (total users) with medium impact (2), high confidence (80%), requiring 3 weeks effort, yielding a RICE score of roughly 1,067. Meanwhile, an enterprise request reaching only 50 users but generating $500,000 in potential upsell might score 4,000 reach (weighted by revenue) with massive impact (3), medium confidence (60%), requiring 6 weeks effort, producing a score of 1,200. The framework reveals that the enterprise feature scores higher despite fewer raw votes.

Your engineering team estimates you're carrying six months of accumulated technical debt that slows velocity by 30%. Meanwhile, sales committed to features that close three major deals worth $300,000 combined. Leadership wants both resolved immediately with your team of four developers.

Use the Value vs Effort matrix to plot these options. Refactoring your authentication system offers high value (improves velocity permanently) but requires 8 weeks (high effort), landing in the strategic bet quadrant. Two of the sales features are quick wins offering high deal value with 1-week builds. The remaining sales feature and most technical debt items cluster in lower-value quadrants. This visual mapping helps you commit to the quick wins first, schedule the authentication refactor for next quarter, and defer the remaining items until capacity opens.

Even teams that understand what a prioritization framework is often stumble during implementation. The most common failures happen when you either make the framework too complex to use consistently or apply it so rigidly that it ignores critical context. These mistakes transform helpful structure into bureaucratic overhead that slows your team instead of accelerating decisions.

Your framework fails when scoring an item takes longer than building it. Teams often create ten-criterion matrices with weighted multipliers, confidence intervals, and risk adjustments that require spreadsheet formulas to calculate. You spend three hours in meetings debating whether a feature deserves a 7 or 8 on strategic alignment, then wonder why nobody uses the framework consistently.

Start with three to four criteria maximum and keep your scoring scales simple. RICE works because it uses four straightforward factors that you can estimate quickly. If you need more than 15 minutes to score a backlog item, your framework has become the problem rather than the solution. Test your system by having someone unfamiliar with it score five items. If they struggle or produce wildly inconsistent results, simplify your criteria until the process becomes intuitive.

The best frameworks balance precision with practicality, giving you enough structure to make consistent decisions without requiring a PhD to apply them.

Numerical scores create an illusion of objectivity that makes teams abandon their judgment entirely. You calculate that Feature A scores 85 while Feature B scores 82, then automatically prioritize A without considering that Feature B directly addresses your biggest competitor's advantage or fixes a problem causing enterprise deals to stall. The framework becomes a shield against thinking critically about strategic context.

Use your scores as input to decisions, not as the decisions themselves. Reserve space in your process to override rankings when compelling strategic reasons emerge. Document these exceptions so you understand patterns over time, but don't let your framework prevent smart trade-offs that the scoring system can't capture. Numbers inform judgment; they don't replace it.

Markets shift, business priorities change, and your framework needs to evolve accordingly. Teams often implement RICE during a growth phase where reach matters most, then continue using the same weighting when they pivot to retention and monetization. Your scoring system produces reasonable-looking numbers that drive you toward the wrong outcomes because your criteria no longer match reality.

Schedule quarterly reviews of your framework itself, not just the items you're scoring. Ask whether your criteria still align with current business goals and whether your estimates proved accurate. Maybe your effort predictions consistently underestimate by 40%, or your impact ratings don't correlate with actual usage after launch. Adjust your approach based on this feedback loop so your framework improves rather than calcifies into ritual.
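One simple check for that feedback loop is to compare estimated and actual effort for shipped items. The sketch below computes the average relative overrun; the numbers are invented for illustration.

```python
from statistics import mean


def effort_overrun(estimated: list[float], actual: list[float]) -> float:
    """Average relative overrun; 0.4 means work took 40% longer than estimated."""
    if len(estimated) != len(actual) or not estimated:
        raise ValueError("need two non-empty lists of equal length")
    return mean((a - e) / e for e, a in zip(estimated, actual))


# Hypothetical estimates vs. actuals (in weeks) for three shipped features.
overrun = effort_overrun(estimated=[2, 5, 10], actual=[3, 7, 13])
print(f"Average overrun: {overrun:.0%}")
```

If the overrun is consistently large, you can either improve the estimates or apply a correction factor to new effort scores until they catch up with reality.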

You've learned what a prioritization framework is, explored different methods, and discovered how to choose one that matches your team's capabilities. The next move is implementing your chosen framework with your actual backlog rather than waiting for perfect conditions. Start with a simple method like the Value vs Effort matrix if you're new to structured prioritization, or adopt RICE if you have the data maturity to support numerical scoring.

Your framework only delivers value when you combine it with real user feedback from your product. The most effective product teams don't guess at reach and impact; they listen to their users and let that input drive their scoring decisions. Koala Feedback helps you capture feature requests, organize them by voting and comments, and turn that feedback into prioritized roadmaps your users can actually see. When you connect user voice directly to your decision-making framework, you build what actually matters instead of what sounds strategically important in planning meetings.

Run your first prioritization session this week with your current backlog. Document your scores, commit to the top three items, and schedule when you'll revisit priorities next.

Start today and have your feedback portal up and running in minutes.