Your backlog is overflowing with feature requests, bug reports, and "wouldn't it be cool if..." ideas. Your team has limited time and resources. So how do you decide what to build next? That's exactly where value vs effort prioritization comes in: a straightforward framework that plots every potential task on two axes, how much value it delivers and how much effort it requires.

The concept is simple, but applying it well takes a bit more thought. You need reliable input (actual user feedback, not gut feelings) and a consistent way to score each item. At Koala Feedback, we help product teams collect, organize, and prioritize that input so decisions like these are grounded in real user demand rather than the loudest voice in the room.

This guide breaks down the value vs effort matrix step by step. You'll learn how to set up the 2x2 grid, score features accurately, avoid common mistakes, and walk away with practical examples you can apply immediately. Whether you're a product manager at a startup or leading development at a larger org, this framework gives you a repeatable process for making smarter prioritization calls.

Most product teams don't fail because they lack ideas. They fail because they can't agree on which ideas matter most and in what order to tackle them. Without a structured approach, prioritization becomes a negotiation won by whoever speaks loudest or has the most seniority. That's how backlogs stall and shipping cycles slow down. Value vs effort prioritization solves this by giving your team a shared vocabulary and a visual tool to evaluate every item on the same terms.

When everyone uses the same framework to score work, prioritization stops being a debate and starts being a decision.

Skipping a structured framework means you default to what feels urgent rather than what is actually important. Teams often prioritize based on the most recent customer complaint rather than the most common one, or they chase technically interesting work because it's more engaging to build. Both patterns produce the same result: you ship things that don't move the needle, and users who need core improvements wait longer.

The hidden cost isn't just wasted sprint cycles. It's the trust you erode with users when you keep shipping features that miss their real pain points. A clear framework gives you a defensible, repeatable reason for every prioritization call you make, which matters both internally when rallying your team and externally when communicating your roadmap to users.

One of the biggest practical benefits of this approach is that it gets cross-functional teams speaking the same language. Developers think in effort, product managers think in user impact, and executives think in business outcomes. The matrix bridges that gap by giving each stakeholder a clear role: each group contributes its perspective on the axis it knows best, and the team combines those inputs into a shared, visible view of the work.

This alignment also speeds up decision-making. Instead of running long meetings to debate subjective opinions, you map items to the grid and let the visual do the heavy lifting. High-value, low-effort items rise to the top immediately. Low-value, high-effort items drop just as fast. The conversation shifts from "should we build this?" to "how do we build this well?", which is a much more productive starting point for any sprint planning session.

The framework only works as well as the inputs you feed it. If your value scores come from one person's opinion, the matrix is just one person's backlog with extra steps. You need actual user feedback to anchor your value estimates in reality. When you know that 40 users requested a specific feature and another 10 mentioned it in support tickets, you're no longer guessing at value; you're measuring demand for it.

Real user demand also makes it easier to get stakeholder buy-in. When a developer asks why a feature ranked high, you can point to specific user votes and comments rather than a gut feeling. That's the difference between prioritization that sticks and prioritization that gets relitigated every sprint.

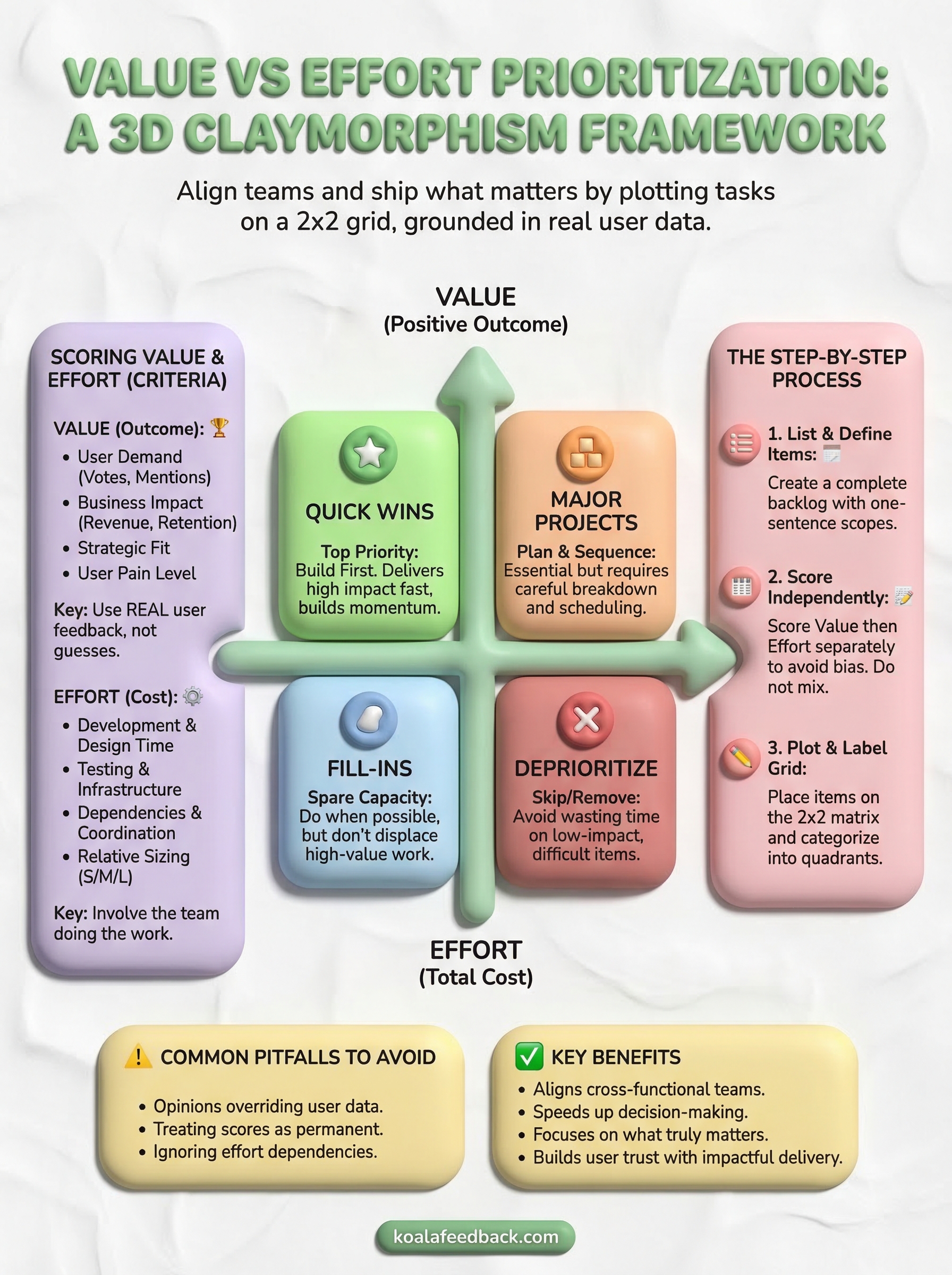

Before you can plot anything on a matrix, you need to define what "value" and "effort" actually mean for your product. Many teams skip this step and assign scores based on different assumptions, which makes the entire matrix unreliable. In value vs effort prioritization, both axes need clear, agreed-upon criteria before you score any work.

Value measures the positive outcome a feature delivers, either to your users, your business, or both. The most reliable signals come from user feedback: how many people requested this feature, how often it appeared in support conversations, and whether users described it as a blocker or a minor convenience. You can also factor in business impact measures like expected revenue increase, churn reduction, or competitive positioning.

Value scores backed by real user data are far more defensible than scores based on internal assumptions alone.

A simple way to structure your value scoring is to rate each factor on a consistent scale, then combine the ratings into a single value score.
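As a rough sketch of what that could look like in Python: the factor names and weights below are illustrative assumptions, not a prescribed formula, and each factor is assumed to be rated from 1 to 5. Swap in whatever factors and weights your team agrees on.

```python
# Hypothetical factors and weights (assumptions for illustration only).
# The weights sum to 1.0 so the result stays on the same 1-5 scale.
WEIGHTS = {
    "request_volume": 0.4,    # how many users asked for it
    "support_mentions": 0.2,  # how often it appears in support conversations
    "severity": 0.2,          # 5 = blocker, 1 = minor convenience
    "business_impact": 0.2,   # revenue, churn, or competitive positioning
}


def value_score(ratings: dict[str, int]) -> float:
    """Combine per-factor ratings (each 1-5) into one weighted value score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise KeyError(f"missing ratings for: {sorted(missing)}")
    for factor in WEIGHTS:
        if not 1 <= ratings[factor] <= 5:
            raise ValueError(f"{factor} must be rated 1-5, got {ratings[factor]}")
    return round(sum(WEIGHTS[f] * ratings[f] for f in WEIGHTS), 2)


example = {
    "request_volume": 5,
    "support_mentions": 3,
    "severity": 4,
    "business_impact": 2,
}
print(value_score(example))
```

Weighting request volume most heavily is one way to keep the score anchored in user demand, but the right balance depends on your product.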

Effort captures everything your team needs to spend to ship a feature: development time, design work, testing, infrastructure changes, and dependencies on other tasks. Underestimating effort is one of the most common mistakes teams make, so you want to involve the people actually doing the work when you assign these scores.

Rather than estimating exact hours, use a relative sizing approach. Rate each item as small, medium, or large compared to a reference task your team has already completed. This keeps estimates grounded in real experience and avoids false precision. If a feature requires coordinating multiple teams or touches core architecture, factor that coordination overhead into your score as well.
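Here's a minimal sketch of relative sizing with a coordination bump. The size-to-point mapping, the one-point-per-extra-team rule, and the cap at 5 are all assumptions made for illustration; your team's conversion may look quite different.

```python
# Hypothetical mapping from relative size to a 1-5 effort score.
SIZE_POINTS = {"small": 1, "medium": 3, "large": 5}


def effort_score(size: str, extra_teams: int = 0, touches_core: bool = False) -> int:
    """Turn a relative size into an effort score, adding coordination overhead.

    Assumed rules: +1 per additional team involved, +1 if core architecture
    is affected, capped at 5.
    """
    if size not in SIZE_POINTS:
        raise ValueError(f"size must be one of {sorted(SIZE_POINTS)}")
    overhead = extra_teams + (1 if touches_core else 0)
    return min(5, SIZE_POINTS[size] + overhead)


print(effort_score("small"))                                     # 1
print(effort_score("medium", extra_teams=1, touches_core=True))  # 5
```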

Building the matrix doesn't require special software or a lengthy setup process. You need a clear list of items to evaluate, agreed-upon scoring criteria, and a simple grid. The steps below walk you through the value vs effort prioritization process in a practical, repeatable way so anyone on your team can follow the same method.

Start by pulling every candidate feature, task, or improvement into a single list. Don't filter anything out at this stage. Your goal is a complete picture of your backlog before you apply any judgment. If you use a feedback tool, this is the moment to pull in user-submitted requests alongside internal ideas so nothing gets left out by accident.

Write a one-sentence description for each item so everyone on your team evaluates the same scope when they assign scores. Ambiguity at this stage produces inconsistent scores later.

Score each item on both axes using a simple 1-to-5 scale, where 1 is low and 5 is high. Assign value scores first, drawing on your user feedback data, support ticket patterns, and business impact estimates. Then score effort separately, using direct input from the people who will actually do the work.

Score each axis independently before you plot anything. Scoring both at once introduces bias and undermines the whole process.

Keep the two scoring rounds separate. When teams score value and effort simultaneously, attractive features tend to receive lower effort scores than they deserve because the team unconsciously wants to justify building them.

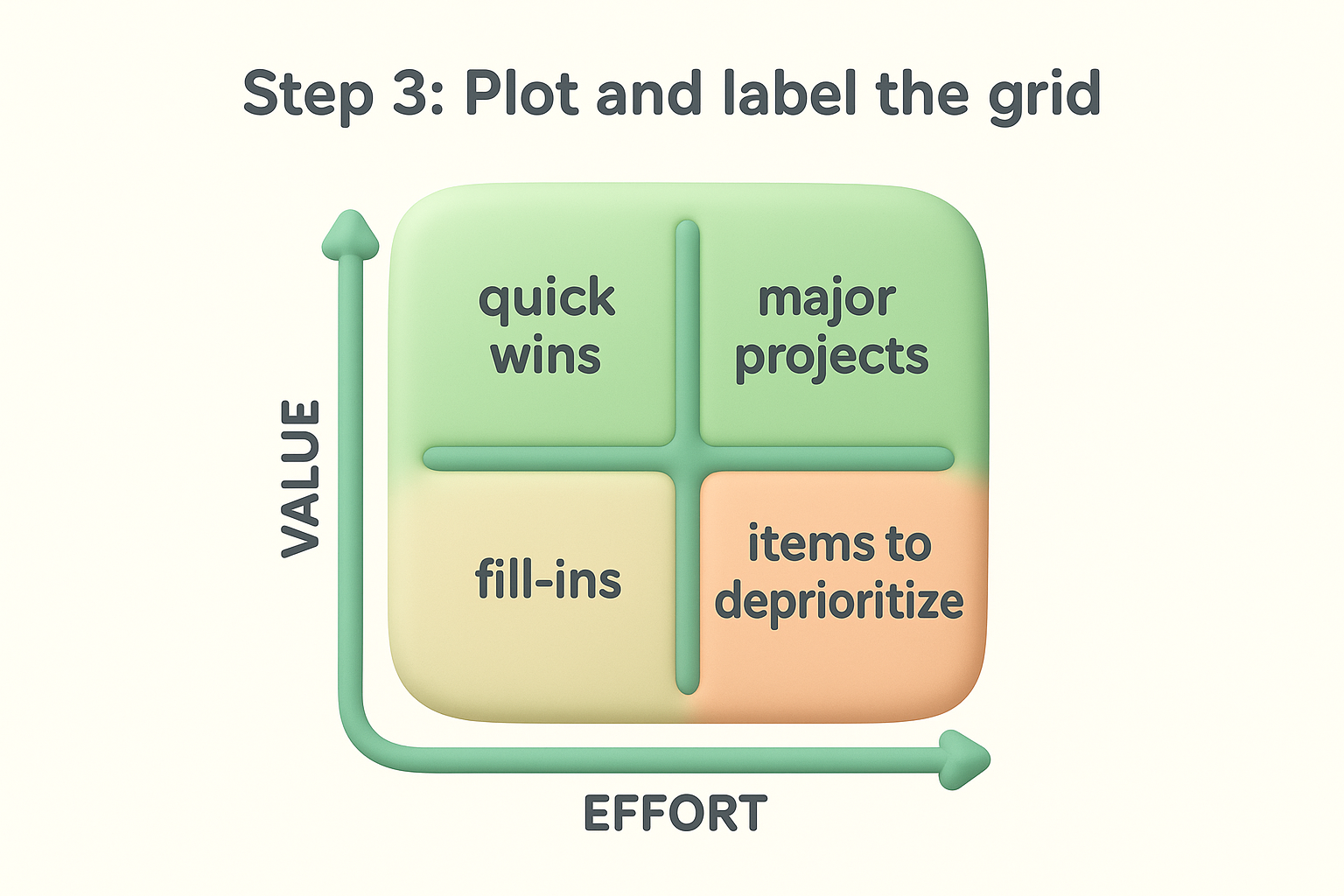

Draw a two-by-two grid with value on the vertical axis and effort on the horizontal axis. Place each item according to its value and effort scores. Label each quadrant clearly: quick wins (high value, low effort), major projects (high value, high effort), fill-ins (low value, low effort), and items to deprioritize (low value, high effort). This visual immediately shows where to focus your team's time.
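If you keep your scores in a spreadsheet or script, the quadrant assignment can be sketched in a few lines of Python. The cutoff is an assumption: this version treats a score of 3 or above on the 1-to-5 scale as "high", and you may prefer a different midpoint.

```python
def quadrant(value: float, effort: float, midpoint: float = 3.0) -> str:
    """Map a (value, effort) pair to one of the four quadrant labels.

    Assumption: a score at or above `midpoint` counts as "high".
    """
    high_value = value >= midpoint
    high_effort = effort >= midpoint
    if high_value and not high_effort:
        return "quick win"
    if high_value and high_effort:
        return "major project"
    if not high_value and not high_effort:
        return "fill-in"
    return "deprioritize"


print(quadrant(value=5, effort=2))  # quick win
print(quadrant(value=2, effort=4))  # deprioritize
```

Items that land right on the midpoint are worth a second look, since a one-point change in either score moves them to a different quadrant.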

Once every item is plotted on the grid, the hard debate about priorities is largely over. The visual layout does the reasoning for you, pushing the most important decisions to the surface without requiring another round of meetings. Your job at this stage is to read the quadrants correctly and translate them into a clear sequencing plan your team can act on.

Items landing in the high-value, low-effort quadrant should move to the top of your next sprint without much debate. These are the items that deliver meaningful user impact while consuming relatively little of your team's time. In value vs effort prioritization, quick wins are your most reliable source of early momentum because they show users progress fast and keep your team confident in the process.

Prioritizing quick wins early builds trust with your users and proves the framework is producing real results, not just a tidy-looking grid.

Don't let quick wins sit unactioned while you debate the bigger items. Ship them, document the outcome, and use the results to calibrate your future value scores.

High-value, high-effort items belong in your roadmap, but they need more preparation before they enter a sprint. Break them into smaller deliverable pieces, identify dependencies, and assign clear ownership before committing to a timeline. These items matter too much to rush and too much to ignore, so the right move is deliberate sequencing rather than either building them immediately or burying them in the backlog.

Low-value, high-effort items deserve a hard look before anyone spends time on them. Most of the time, the right call is to remove them entirely from active consideration. Low-value, low-effort items can fill spare capacity between larger priorities, but they should never displace work from the two higher-value quadrants. Protecting your team's time from low-impact work is just as important as picking the right items to build.
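The sequencing described above can be sketched as a simple sort. This is one possible ordering under stated assumptions: scores of 3 or above count as "high", low-value high-effort items are dropped from the plan, and ties within a quadrant go to higher value and then lower effort. The example backlog items are made up.

```python
from typing import NamedTuple, Optional


class Item(NamedTuple):
    name: str
    value: int   # 1-5
    effort: int  # 1-5


def quadrant_rank(item: Item, midpoint: int = 3) -> Optional[int]:
    """Return 0 for quick wins, 1 for major projects, 2 for fill-ins,
    and None for low-value, high-effort items (dropped from the plan)."""
    high_value = item.value >= midpoint
    high_effort = item.effort >= midpoint
    if high_value and not high_effort:
        return 0
    if high_value:
        return 1
    if not high_effort:
        return 2
    return None


def sequence(items: list[Item]) -> list[str]:
    """Order items by quadrant, then by higher value, then by lower effort."""
    kept = [(quadrant_rank(i), i) for i in items if quadrant_rank(i) is not None]
    kept.sort(key=lambda pair: (pair[0], -pair[1].value, pair[1].effort))
    return [item.name for _, item in kept]


backlog = [
    Item("CSV export", value=4, effort=2),
    Item("SSO", value=5, effort=5),
    Item("Dark mode", value=2, effort=1),
    Item("Custom theme engine", value=2, effort=5),
    Item("Saved filters", value=5, effort=2),
]
print(sequence(backlog))
# ['Saved filters', 'CSV export', 'SSO', 'Dark mode']
```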

Even a well-built matrix produces bad outcomes if you feed it bad inputs or misread the results. The most common failures in value vs effort prioritization aren't structural problems with the framework itself; they're process problems that creep in when teams skip steps or rely on assumptions instead of actual user data.

The most damaging mistake teams make is assigning value scores based on who speaks loudest in the room rather than what users have explicitly requested. When a senior stakeholder's preference replaces a genuine signal from your user base, the matrix reflects internal politics rather than real product needs.

Ground your value scores in quantifiable signals like user votes, feedback submissions, and support ticket frequency rather than seniority or opinion.

To avoid this, separate the scoring session from any prioritization discussion. Have team members assign scores independently before sharing results. This removes social pressure and produces scores that reflect each person's honest read of the data rather than group consensus shaped by whoever spoke first.
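One way to combine independently submitted scores is to take the median and flag items where people disagree widely. The guide doesn't prescribe an aggregation method, so treat the median and the spread threshold of 2 points below as assumptions.

```python
from statistics import median


def aggregate(scores: list[int], max_spread: int = 2) -> tuple[float, bool]:
    """Return (median score, needs_discussion) for one item's independent scores.

    An item is flagged when the gap between the highest and lowest
    score is at least `max_spread` (an assumed threshold).
    """
    if not scores:
        raise ValueError("at least one score is required")
    spread = max(scores) - min(scores)
    return median(scores), spread >= max_spread


print(aggregate([4, 4, 5]))  # (4, False)
print(aggregate([1, 3, 5]))  # (3, True)
```

The median keeps a single outlier from dragging the score, and the flag tells you which items are worth talking through before you plot them.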

Many teams score their backlog once and then treat those scores as fixed for months. Markets shift, users evolve, and what looked high-value six months ago may no longer align with your current direction. Stale scores produce a stale roadmap, and you end up defending outdated priorities without realizing the underlying assumptions have changed.

Schedule a regular review, quarterly at minimum, to revisit scores on any item that hasn't shipped. Check whether the user feedback supporting that value score is still current, and re-estimate effort if your team's codebase or capacity has changed since the last assessment.
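If you record the date each item was last scored, finding candidates for that review is straightforward. A minimal sketch, assuming a 90-day window to approximate "quarterly" and a hypothetical mapping of item names to last-scored dates:

```python
from datetime import date, timedelta


def stale_items(last_scored: dict[str, date], today: date,
                max_age_days: int = 90) -> list[str]:
    """Return names of unshipped items whose scores are older than the window."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(name for name, scored_on in last_scored.items()
                  if scored_on < cutoff)


# Hypothetical data: item name -> date its scores were last reviewed.
scores_reviewed = {
    "Bulk import": date(2024, 1, 15),
    "Slack integration": date(2024, 6, 1),
}
print(stale_items(scores_reviewed, today=date(2024, 7, 1)))
# ['Bulk import']
```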

A feature might score low on effort in isolation, but if it depends on completing another large task first, the true effort is much higher than the score suggests. Always map dependencies before finalizing your grid. If an item's completion requires prior work from another team or a platform change, factor that sequencing cost into the effort score so your matrix reflects reality rather than a simplified version of it.
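To see how much a dependency chain changes the picture, you can add up an item's own effort and the effort of every unfinished prerequisite. This is a sketch under assumptions: the item names, the effort numbers, and the choice to simply sum efforts are all illustrative, and the total is a raw figure rather than a 1-to-5 score.

```python
from typing import Optional


def true_effort(item: str, own_effort: dict[str, int],
                depends_on: dict[str, list[str]],
                done: Optional[set[str]] = None) -> int:
    """Sum an item's effort with all unfinished prerequisites, each counted once."""
    done = done or set()
    seen: set[str] = set()

    def visit(name: str) -> int:
        if name in seen or name in done:
            return 0  # already counted, or already shipped
        seen.add(name)
        return own_effort[name] + sum(visit(d) for d in depends_on.get(name, []))

    return visit(item)


# Hypothetical backlog: the button looks tiny until you count what it needs.
own_effort = {"export button": 1, "reporting API": 4, "auth refactor": 3}
depends_on = {
    "export button": ["reporting API"],
    "reporting API": ["auth refactor"],
}
print(true_effort("export button", own_effort, depends_on))  # 8
print(true_effort("export button", own_effort, depends_on,
                  done={"auth refactor"}))                   # 5
```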

Value vs effort prioritization gives your team a structured, repeatable way to decide what to build next without turning every backlog review into a debate. The framework works when you ground value scores in real user feedback, get effort estimates from the people who will do the work, keep value and effort scoring separate, and revisit your matrix regularly so stale assumptions don't drive your roadmap.

Your quick wins deliver the fastest return, but your major projects define your long-term product direction. Both deserve a place on your roadmap, just at different stages. The items you choose to skip matter just as much as the ones you choose to build because protecting your team's time from low-impact work is part of what makes this process effective.

If you want your value scores grounded in actual user demand rather than guesswork, start collecting and organizing feedback before your next prioritization session. Koala Feedback helps you centralize user requests, track votes, and build a roadmap your whole team can stand behind.

Start today and have your feedback portal up and running in minutes.