Your feedback portal is overflowing with feature requests. Users want integrations, redesigns, new workflows, and that one highly specific function only three people asked for. Without a clear system to evaluate what deserves your team's time, you're stuck guessing, or worse, building features that don't move the needle. That's where roadmap prioritization techniques come in.

These frameworks give product teams a structured way to weigh competing demands, allocate limited resources, and make decisions they can defend to stakeholders. Whether you're drowning in requests or just want to stop relying on gut feelings, the right prioritization method helps you focus on what actually matters to your users and your business.

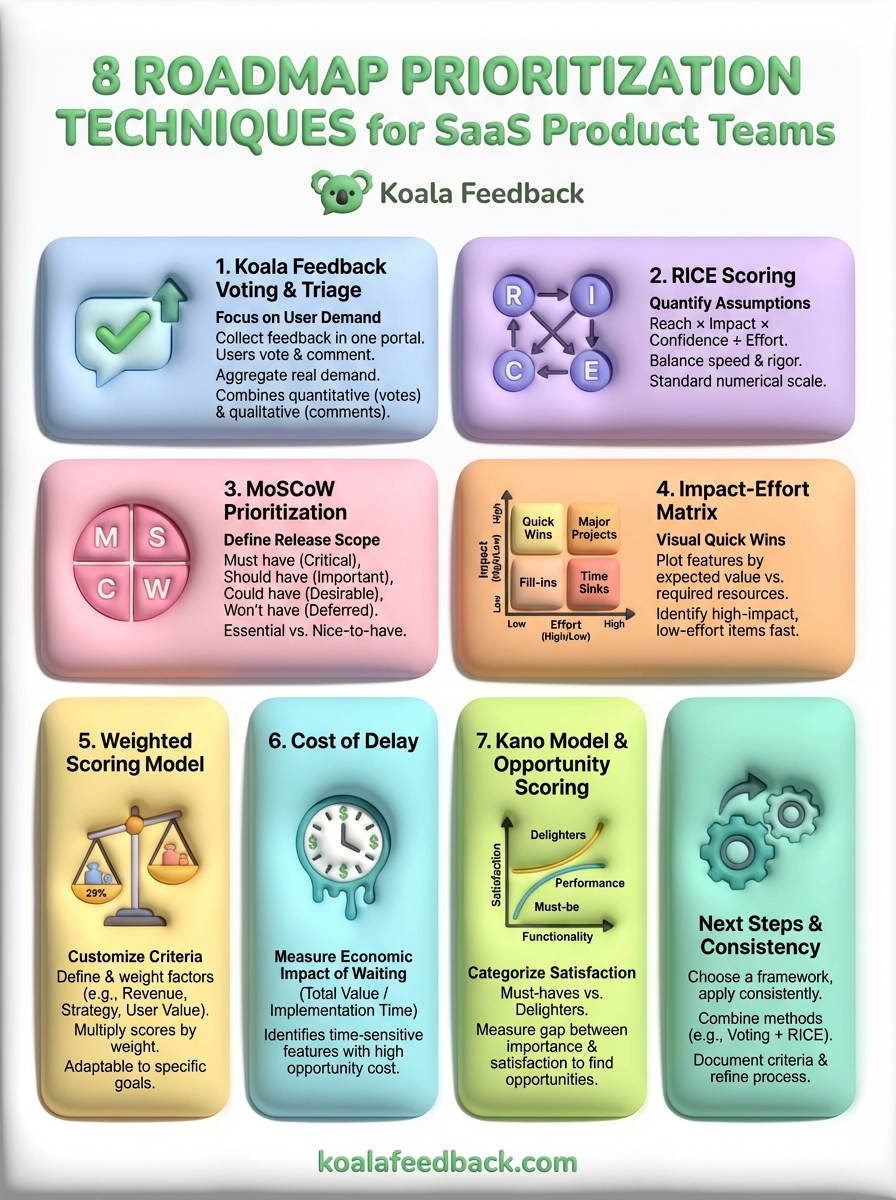

At Koala Feedback, we help SaaS teams collect, organize, and prioritize user feedback in one place. But gathering feedback is only half the battle; knowing what to build next requires a repeatable process. This article breaks down eight proven prioritization techniques, from RICE scoring to MoSCoW, so you can pick the framework that fits your team's workflow and start shipping features with confidence.

Before you dive into complex scoring models, start with the most direct signal available: what your users actually want. Koala Feedback voting and feedback triage lets you prioritize roadmap items based on real demand from your user base. This approach collects feedback in one central portal, lets users vote on feature requests, and surfaces the highest-priority items through simple aggregation. Instead of guessing what matters, you let your customers tell you.

Users submit feature requests through your feedback portal, which automatically deduplicates similar ideas and organizes them into categories. Each request includes a voting mechanism where other users can upvote the features they need most. Your product team reviews the feedback regularly, categorizes it by product area or theme, and uses vote counts as one factor in deciding what to build next. You can also track comments to understand the context behind each request, which often reveals nuances that voting alone misses.

The beauty of this method is that it combines quantitative data (votes) with qualitative insights (comments) to give you a fuller picture of user needs.

You need a functioning feedback portal where users can submit and vote on ideas. Your team also needs the discipline to review submissions regularly and tag or categorize them for easier analysis. If you're using Koala Feedback, this process is built in. Beyond the portal itself, you need clarity on which customer segments matter most, since a feature with 50 votes from enterprise customers might outweigh one with 200 votes from free users.
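If you want segment importance to show up in your vote counts, one option is to weight each vote by the voter's plan. The sketch below is only an illustration: the segment names, the weights, and the `weighted_vote_totals` and `rank_features` helpers are hypothetical assumptions, not Koala Feedback settings or APIs.

```python
from collections import defaultdict

# Hypothetical segment weights: illustrative assumptions, not product settings.
SEGMENT_WEIGHTS = {"enterprise": 5.0, "pro": 2.0, "free": 1.0}


def weighted_vote_totals(votes, weights=SEGMENT_WEIGHTS):
    """Sum votes per feature, scaling each vote by the voter's segment weight.

    `votes` is an iterable of (feature, segment) pairs, one per upvote.
    Segments missing from `weights` count as 1.0.
    """
    totals = defaultdict(float)
    for feature, segment in votes:
        totals[feature] += weights.get(segment, 1.0)
    return dict(totals)


def rank_features(votes, weights=SEGMENT_WEIGHTS):
    """Return feature names sorted by weighted vote total, highest first."""
    totals = weighted_vote_totals(votes, weights)
    return sorted(totals, key=totals.get, reverse=True)


# 50 enterprise votes versus 200 free-tier votes.
votes = [("sso", "enterprise")] * 50 + [("dark_mode", "free")] * 200
print(weighted_vote_totals(votes))
print(rank_features(votes))
```

With these assumed weights, the 50 enterprise votes outrank the 200 free-tier votes. Pick weights that reflect your own revenue mix and strategy, and treat the result as one input rather than a verdict.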

This technique works best for SaaS teams building for a defined user base with active engagement. If your customers regularly share feedback and you want to involve them in the product development process, voting creates transparency and builds trust. It's particularly effective when combined with other roadmap prioritization techniques, since you can use vote counts as one input in more complex scoring models.

Don't treat votes as the only deciding factor. A feature with 500 votes might still be a bad fit for your product strategy or too expensive to build. You also need to watch for voting bias, where power users or vocal minorities dominate the conversation while quieter segments go unheard. Balance voting data with business goals, technical feasibility, and strategic alignment. Finally, close the loop by updating users on feature status so they know their feedback matters.

RICE scoring gives you a structured framework to evaluate features using four quantifiable factors: Reach, Impact, Confidence, and Effort. Developed by Intercom, this method lets you rank competing initiatives on the same numerical scale, making it easier to compare wildly different feature requests. Instead of arguing about subjective priorities, your team can plug in the numbers and let the formula surface what deserves attention first.

You calculate a RICE score by multiplying Reach (how many users will this affect per time period), Impact (how much it will improve their experience on a scale of 0.25 to 3), and Confidence (how sure you are about your estimates, expressed as a percentage). Then you divide that total by Effort (person-months required to build it). The resulting formula is: (Reach × Impact × Confidence) ÷ Effort. Features with higher RICE scores rank higher on your roadmap.
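The formula is simple enough to run in a spreadsheet, but a short script makes the arithmetic explicit. This is a minimal sketch: the `Feature` class, the `rice_score` function, and the sample numbers are made up for illustration, and confidence is written as a fraction (0.8 for 80%).

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: float       # users affected per time period
    impact: float      # 0.25 (minimal) up to 3 (massive)
    confidence: float  # fraction between 0 and 1, e.g. 0.8 for 80%
    effort: float      # person-months


def rice_score(feature):
    """Compute (Reach x Impact x Confidence) / Effort."""
    if feature.effort <= 0:
        raise ValueError("effort must be positive")
    return feature.reach * feature.impact * feature.confidence / feature.effort


# Sample backlog with invented estimates.
backlog = [
    Feature("CSV export", reach=2000, impact=1, confidence=0.8, effort=2),
    Feature("SSO", reach=500, impact=3, confidence=0.5, effort=3),
]

# Highest RICE score first.
for feature in sorted(backlog, key=rice_score, reverse=True):
    print(f"{feature.name}: {rice_score(feature):.0f}")
```

Here the CSV export scores 800 and SSO scores 250, so the export ranks first even though SSO has the higher impact rating.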

The beauty of RICE is that it forces you to quantify assumptions instead of relying on vague claims like "this feature is really important."

You need usage data to estimate how many users a feature will reach, which means access to analytics or customer segmentation information. Your team also needs to estimate impact based on user research or historical performance of similar features. Confidence requires honest self-assessment about how much uncertainty exists in your estimates, and effort demands input from engineering on realistic build times.

RICE works well for product teams that want a balance between speed and rigor. If you're comparing features across different product areas and need a single metric to sort your backlog, this method delivers clarity without requiring extensive analysis for each item. It's especially useful when stakeholders demand transparency about why certain features made the cut.

Don't let the precision of RICE scoring fool you into thinking it's perfectly objective. Your estimates for reach and impact are still subjective, and small changes in those numbers can dramatically shift priorities. Avoid gaming the system by inflating scores for pet projects, and revisit your assumptions regularly as you learn more about user behavior. Finally, remember that RICE is one of many roadmap prioritization techniques, not the only factor in your decision.

MoSCoW prioritization divides your backlog into four distinct categories based on relative importance: Must have, Should have, Could have, and Won't have (this time). This technique forces you to draw clear lines between essential features and nice-to-haves, which is especially valuable when you're working with fixed deadlines or limited resources. Instead of trying to build everything at once, you identify the minimum viable set of features needed to ship a successful release.

You categorize each feature request into one of four buckets. Must haves are non-negotiable features that make or break your release. Without them, your product fails to deliver core value. Should haves are important but not critical; you can delay them to the next cycle if needed. Could haves are desirable improvements that enhance the experience but aren't essential. Won't haves are explicitly out of scope for this planning period, though they might resurface later.

The power of MoSCoW lies in forcing honest conversations about what's truly essential versus what's just nice to have.

Your team needs consensus on release goals and what success looks like for your current planning cycle. You also need input from stakeholders across product, engineering, and business teams to ensure the categories reflect both technical constraints and strategic priorities. Customer feedback helps inform which features belong in each bucket, especially when deciding between should haves and could haves.

This method works well for teams planning releases with hard deadlines or preparing for major launches where scope needs clear boundaries. If you're building the first version of a feature set or planning a seasonal release, MoSCoW gives you a simple framework to communicate what's in and what's out. It complements other roadmap prioritization techniques by adding clarity to your commitment level for each item.

Don't let your must have category balloon into a wish list. If more than 60% of your backlog lands in must haves, you haven't made real choices. Revisit your categorization regularly as priorities shift, and be prepared to move items between buckets when reality changes your assumptions. Finally, communicate won't haves clearly to stakeholders so they understand what's deferred rather than forgotten.
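A quick sanity check can flag a bloated must-have bucket before planning starts. The sketch below assumes a backlog stored as a plain dictionary of feature name to category. The category labels, the helper names, and the sample backlog are hypothetical, and the share is counted per item, not per unit of effort.

```python
from collections import Counter

CATEGORIES = ("must", "should", "could", "wont")


def moscow_shares(backlog):
    """Return the fraction of backlog items in each MoSCoW category."""
    counts = Counter(backlog.values())
    unknown = set(counts) - set(CATEGORIES)
    if unknown:
        raise ValueError(f"unknown categories: {sorted(unknown)}")
    total = len(backlog)
    if total == 0:
        return {category: 0.0 for category in CATEGORIES}
    return {category: counts[category] / total for category in CATEGORIES}


def must_have_overloaded(backlog, threshold=0.6):
    """True when must haves exceed the threshold share of the backlog."""
    return moscow_shares(backlog)["must"] > threshold


# Invented backlog: four of five items are tagged as must haves.
backlog = {
    "login": "must",
    "billing": "must",
    "audit log": "must",
    "sso": "must",
    "dark mode": "should",
}
print(moscow_shares(backlog))
print(must_have_overloaded(backlog))
```

In this sample, 80% of items are must haves, so the check returns `True` and the team should revisit the categorization.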

The impact-effort matrix maps features onto a two-by-two grid based on expected value versus required resources. This visual framework lets your team quickly identify quick wins (high impact, low effort), major projects (high impact, high effort), fill-ins (low impact, low effort), and time sinks (low impact, high effort). By plotting initiatives on this grid, you can prioritize features that deliver maximum value with minimum investment.

You evaluate each feature on two dimensions: the business impact it will create and the engineering effort required to ship it. Impact considers factors like revenue potential, user satisfaction, and strategic alignment. Effort accounts for development time, technical complexity, and resource availability. You plot each feature on the grid and focus first on high-impact, low-effort items before tackling more resource-intensive work.
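If your team scores impact and effort numerically, the quadrant assignment can be sketched in a few lines. The 1-10 scale, the midpoint threshold of 5, and the `quadrant` function are assumptions made for illustration. Many teams place items on a whiteboard by relative position instead.

```python
def quadrant(impact, effort, threshold=5):
    """Classify a feature into one of the four impact-effort quadrants.

    Scores above `threshold` count as high; the 1-10 scale and the
    midpoint of 5 are illustrative assumptions.
    """
    high_impact = impact > threshold
    high_effort = effort > threshold
    if high_impact and not high_effort:
        return "quick win"
    if high_impact and high_effort:
        return "major project"
    if not high_impact and not high_effort:
        return "fill-in"
    return "time sink"


# Invented (impact, effort) estimates on a 1-10 scale.
features = {
    "saved filters": (8, 3),
    "mobile app": (9, 9),
    "tooltip copy": (2, 1),
    "custom theming": (3, 8),
}
for name, (impact, effort) in features.items():
    print(f"{name}: {quadrant(impact, effort)}")
```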

This matrix transforms abstract prioritization discussions into a visual tool that everyone on your team can understand at a glance.

Your team needs rough estimates of effort from engineering and clear criteria for measuring impact from product and business stakeholders. Unlike RICE scoring, you don't need precise numbers since the matrix uses relative positioning. However, you do need enough context about each feature to place it accurately relative to other initiatives on your roadmap.

This approach works best for teams that need fast decision-making without extensive analysis. If you're sorting through a large backlog and want to identify obvious priorities quickly, the impact-effort matrix delivers clarity. It's particularly effective during planning sessions, when you need to compare candidates surfaced by other roadmap prioritization techniques on a single grid.

Avoid placing too many features in the high-impact, low-effort quadrant since that typically signals wishful thinking. Challenge your assumptions about what qualifies as low effort, and revisit your matrix regularly as you learn more about technical complexity. Don't ignore high-impact, high-effort features entirely since they often represent your most valuable long-term bets.

A weighted scoring model lets you customize prioritization around the criteria that matter most to your specific product strategy. Instead of using a preset formula like RICE, you define your own evaluation factors (such as revenue potential, strategic alignment, and user satisfaction) and assign each one a weight based on business importance. This flexibility makes weighted scoring one of the most adaptable roadmap prioritization techniques since you control exactly what drives your decisions.

You start by identifying the criteria that matter for your roadmap, typically three to seven factors like customer value, alignment with company goals, technical feasibility, and revenue impact. Next, you assign each criterion a percentage weight that reflects its relative importance, ensuring all weights add up to 100%. Then you score each feature on a scale (often 1-10) for every criterion, multiply each score by its weight, and sum the results to get a total weighted score for that feature.
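The multiply-and-sum step looks like this in code. Treat it as a sketch under stated assumptions: the criteria names, the weights, the scores, and the `weighted_score` function are all invented for illustration, and weights are written as fractions that sum to 1 instead of percentages.

```python
import math


def weighted_score(scores, weights):
    """Multiply each criterion score by its weight and sum the results."""
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError("weights must add up to 1.0 (100%)")
    if scores.keys() != weights.keys():
        raise ValueError("scores and weights must cover the same criteria")
    return sum(scores[criterion] * weights[criterion] for criterion in weights)


# Hypothetical criteria and weights.
weights = {
    "customer_value": 0.4,
    "strategic_alignment": 0.3,
    "feasibility": 0.1,
    "revenue_impact": 0.2,
}

# Invented scores on a 1-10 scale for a single feature.
api_webhooks = {
    "customer_value": 8,
    "strategic_alignment": 6,
    "feasibility": 9,
    "revenue_impact": 5,
}
print(round(weighted_score(api_webhooks, weights), 2))
```

For this feature the total works out to 3.2 + 1.8 + 0.9 + 1.0 = 6.9. Scoring every backlog item against the same weights gives you a single number to sort by.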

Your team needs agreement on which criteria matter and how much weight each one deserves. This requires alignment between product, engineering, and business stakeholders since different teams often prioritize different factors. You also need a consistent scoring scale and clear definitions for what each score means so everyone evaluates features using the same standards.

Weighted scoring works well for teams with complex decision-making processes involving multiple stakeholders and competing priorities. If your product serves diverse customer segments or balances conflicting goals like short-term revenue and long-term strategic positioning, this method accommodates those nuances better than simpler frameworks.

The flexibility of weighted scoring means you can adjust your criteria as your strategy evolves without abandoning the entire framework.

Don't overcomplicate your model with too many criteria since that dilutes the impact of each factor and makes scoring tedious. Revisit your weights periodically to ensure they still reflect current business priorities, and resist the temptation to adjust weights retroactively to favor specific features.

Cost of delay measures the economic impact of postponing a feature by calculating how much value you lose with each passing week or month. This approach shifts your focus from simply building what's important to understanding when timing matters most. By quantifying the financial or strategic cost of waiting, you can identify features where delays create the biggest opportunity cost and prioritize accordingly.

You estimate the cost of delay, meaning the value a feature would deliver for each week or month it is live (revenue increase, cost savings, or strategic benefit), and divide it by the implementation time required to build it. This calculation produces a cost-of-delay divided by duration (CD3) score that tells you which features deliver the most value per unit of time invested. Features with higher CD3 scores move to the top of your roadmap since they generate returns faster.
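A small script shows how CD3 can reorder a backlog. This sketch assumes cost of delay is expressed as value lost per week and duration as weeks of implementation. The `cd3` function, the feature names, and the figures are invented for illustration.

```python
def cd3(cost_of_delay_per_week, duration_weeks):
    """Cost of delay divided by duration: higher means schedule sooner."""
    if duration_weeks <= 0:
        raise ValueError("duration must be positive")
    return cost_of_delay_per_week / duration_weeks


# Invented estimates: (value lost per week of delay, weeks to build).
candidates = {
    "billing revamp": (10_000, 8),
    "onboarding checklist": (4_000, 2),
}

# Highest CD3 first.
ranked = sorted(candidates, key=lambda name: cd3(*candidates[name]), reverse=True)
for name in ranked:
    print(f"{name}: {cd3(*candidates[name]):.0f}")
```

The billing revamp loses more value per week, but the onboarding checklist scores 2000 against 1250 because it ships in a quarter of the time, so it goes first.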

This method forces you to think beyond whether a feature is valuable and consider whether delaying it creates a meaningful business risk.

Your team needs financial projections or strategic value estimates for each feature, which typically requires input from sales, customer success, or business analytics. You also need realistic delivery timelines from engineering to calculate how long implementation will take. Without both pieces of data, you can't accurately compare the opportunity cost of different features.

Cost of delay works well for teams making trade-offs between revenue-generating initiatives or when timing directly impacts business outcomes. If you're choosing between features that all matter but need to sequence them optimally, this method reveals which ones lose value fastest if you wait. It complements other roadmap prioritization techniques by adding a time-sensitive dimension to your decision-making.

Don't rely entirely on projected revenue since your estimates often prove optimistic once features ship. Consider non-financial costs like competitive positioning or customer churn risk when calculating delay impact. Revisit your calculations regularly as market conditions change and certain features become more or less time-sensitive based on external factors.

The Kano model categorizes features based on how they affect customer satisfaction, separating must-haves from delighters. Instead of treating all features as equally important, this framework recognizes that some capabilities create disproportionate satisfaction when present while others cause frustration when missing. Opportunity scoring extends the Kano model by measuring the gap between importance and satisfaction to identify where improvements deliver the biggest impact.

The two methods rely on different survey questions. A Kano survey asks about each feature twice: once asking how users would feel if the feature were present and again asking how they would feel if it were absent. The paired answers classify features into categories like basic expectations (must-haves that users assume exist), performance features (more is better), and delighters (unexpected capabilities that create excitement). An opportunity-scoring survey asks how important each feature is and how satisfied users are with the current implementation, then calculates the difference between the importance and satisfaction ratings to reveal where you're underdelivering relative to user expectations.
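The opportunity gap described above can be computed directly from survey responses. This is a minimal sketch: it assumes importance and satisfaction are rated on the same 1-10 scale, and the `opportunity_gap` function and the sample responses are invented for illustration.

```python
from statistics import mean


def opportunity_gap(responses):
    """Average importance minus average satisfaction for one feature.

    `responses` is a list of (importance, satisfaction) ratings on the
    same scale. A larger positive gap suggests a bigger opportunity.
    """
    importance = mean(i for i, _ in responses)
    satisfaction = mean(s for _, s in responses)
    return importance - satisfaction


# Invented survey results on a 1-10 scale.
survey = {
    "bulk edit": [(9, 3), (8, 4), (10, 2)],
    "themes": [(4, 4), (5, 6), (3, 5)],
}

# Largest gap first.
ranked = sorted(survey, key=lambda name: opportunity_gap(survey[name]), reverse=True)
for name in ranked:
    print(f"{name}: {opportunity_gap(survey[name])}")
```

Bulk edit is rated important but poorly served, so it shows a gap of 6. Themes come out at -1, which suggests users are already more satisfied than the feature's importance requires.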

This dual-lens approach helps you avoid building features that sound important but won't actually move the satisfaction needle.

Your team needs direct user feedback through surveys asking about importance and satisfaction for each feature under consideration. You need a representative sample of your user base to ensure the results reflect actual customer priorities rather than vocal minorities. The survey design matters, since poorly worded questions produce unreliable data that leads your prioritization astray.

This method works well for established products where you're deciding between enhancements rather than building from scratch. If you want to understand which features create competitive differentiation versus table stakes, Kano analysis reveals those distinctions clearly.

Don't skip the survey step since guessing at user satisfaction rarely matches reality. Remember that Kano categories shift over time as table stakes evolve, so resurvey periodically to catch changing expectations.

You now have eight roadmap prioritization techniques to choose from, each with distinct strengths depending on your team's workflow and decision-making style. Start by picking one framework that matches your current needs rather than trying to implement all of them at once. Most teams combine multiple methods, using voting data from users alongside scoring models like RICE or weighted scoring to balance quantitative metrics with strategic judgment.

The key to effective prioritization is consistency. Choose your approach, document your criteria clearly, and apply the same framework across your entire backlog so stakeholders understand why certain features made the cut. Track how your decisions perform after launch so you can refine your process over time.

If you're ready to implement user voting and feedback triage in your prioritization process, start collecting feedback with Koala Feedback. You'll centralize feature requests, let users vote on priorities, and surface the insights that inform smarter roadmap decisions.

Start today and have your feedback portal up and running in minutes.