Feature requests pile up fast. Your users want better reporting, a mobile app, integrations with their favorite tools, and that one obscure feature they swear will change everything. Without a clear system, deciding what to build next becomes a guessing game, or worse, a popularity contest driven by whoever speaks loudest.

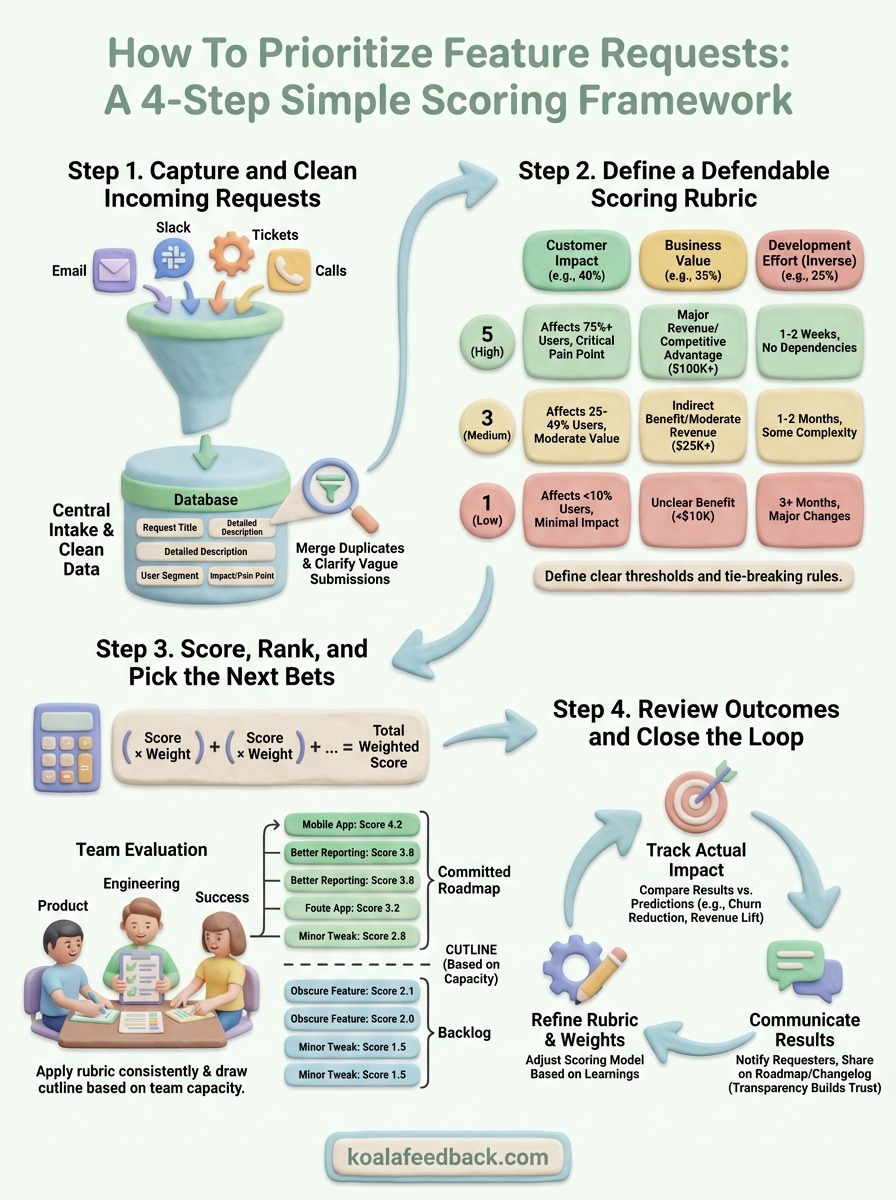

Learning how to prioritize feature requests using a structured scoring approach removes the guesswork. Instead of debating opinions in meetings, you evaluate each request against consistent criteria: business impact, customer value, and development effort.

This guide walks you through practical scoring methods you can implement today. At Koala Feedback, we've built our platform around helping teams collect, organize, and prioritize user feedback, so we've seen firsthand what separates teams that ship the right features from those stuck in endless backlogs. By the end, you'll have a repeatable framework to rank requests objectively and build what actually matters.

A simple scoring system doesn't mean simplistic. Your framework needs to be straightforward enough that your entire team understands it, yet robust enough to handle real tradeoffs. The goal is to create a repeatable process that replaces gut feelings with defendable logic when you need to decide how to prioritize feature requests.

Your scoring model should balance three core dimensions: what the customer gains, what the business wins, and what the team must invest. Too many criteria create analysis paralysis. Too few leave you guessing about critical factors. The sweet spot is typically three to five weighted factors that reflect your company's current priorities.

Simple scoring works because it forces you to articulate what actually matters before emotions and politics enter the room.

Start with customer impact: how many users will benefit, and how significantly will their experience improve? A feature that solves a critical problem for 80% of your user base scores higher than a nice-to-have that affects 5%. You're measuring both reach and depth of value.

Next, evaluate business value: does this request support revenue growth, reduce churn, unlock a new market segment, or strengthen your competitive position? Quantify this wherever possible. A feature that could prevent $50,000 in annual churn deserves different consideration than one that might attract a handful of new signups.

Finally, assess development effort: what's the realistic cost in engineering time, design resources, and technical complexity? Break this into t-shirt sizes (small, medium, large) or story points if your team uses them. Implementation risk matters too. A feature requiring major infrastructure changes carries hidden costs beyond the initial build.

Your scoring criteria need different weights based on your company's stage and goals. A startup chasing product-market fit might weight customer impact at 50%, business value at 30%, and effort at 20%. An established company focused on efficiency might flip those ratios.

Create a scoring rubric where each criterion uses the same scale, typically 1 to 5 or 1 to 10. Multiply each score by its weight, then sum the results. Here's a practical example:

| Criterion | Weight | Score (1-5) | Weighted Score |
|---|---|---|---|
| Customer Impact | 40% | 4 | 1.6 |
| Business Value | 35% | 5 | 1.75 |
| Development Effort (inverse) | 25% | 2 | 0.5 |
| Total | 100% | | 3.85 |
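If you'd rather compute this than do it by hand, the multiply-and-sum step can be sketched in a few lines of Python. The weights and scores come from the example table; the dictionary keys and the `weighted_score` name are illustrative, not part of any particular tool.

```python
# Weights from the example table. The key names are illustrative.
WEIGHTS = {
    "customer_impact": 0.40,
    "business_value": 0.35,
    "effort_inverse": 0.25,  # higher score = less effort
}


def weighted_score(scores: dict[str, int], weights: dict[str, float] = WEIGHTS) -> float:
    """Multiply each 1-5 score by its weight and sum the results."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return round(sum(weights[name] * scores[name] for name in weights), 2)


example = {"customer_impact": 4, "business_value": 5, "effort_inverse": 2}
print(weighted_score(example))  # 3.85
```

Checking that the weights sum to 1.0 catches the most common spreadsheet mistake: changing one weight and forgetting to rebalance the others.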

Test your weights on five known requests where you already have strong opinions about priority. If the scores don't match your instincts, adjust the weights until they do.
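One rough way to run that sanity check is to compare the order your scores produce with the order your team already expects. The request names and scores below are made up for illustration, and `ranking_mismatches` is a hypothetical helper, not a standard function.

```python
# Hypothetical calibration set: request name -> weighted score from your rubric.
scores = {
    "sso_login": 4.2,
    "csv_export": 3.9,
    "api_webhooks": 3.4,
    "dark_mode": 2.1,
    "custom_fonts": 1.8,
}

# The order the team already believes is right, highest priority first.
expected = ["sso_login", "api_webhooks", "csv_export", "dark_mode", "custom_fonts"]


def ranking_mismatches(scores: dict[str, float], expected: list[str]) -> list[tuple[int, str, str]]:
    """Return (position, expected_name, scored_name) wherever the two orders differ."""
    by_score = sorted(scores, key=scores.get, reverse=True)
    return [
        (position + 1, want, got)
        for position, (want, got) in enumerate(zip(expected, by_score))
        if want != got
    ]


for position, want, got in ranking_mismatches(scores, expected):
    print(f"Rank {position}: expected {want}, scoring put {got}")
```

An empty result means the weights already reproduce your instincts on the calibration set. Any mismatch is a prompt to revisit either the weights or the instinct.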

Before you can prioritize feature requests, you need clean, organized data to work with. Messy inputs create messy outputs. When requests arrive scattered across support tickets, Slack messages, sales calls, and random emails, you spend more time hunting for context than actually evaluating merit.

Create one dedicated place where all feature requests land. This could be a feedback portal, a shared form, or a designated tool that your team checks daily. The key is making submission easy for users while capturing the information you need to evaluate each request.

Your intake form should collect these essential fields at minimum:

A standardized intake process eliminates the need to chase down basic context later when you're trying to score requests.

Raw requests arrive with varying levels of clarity. Someone writes "better analytics" while another describes the exact dashboard widget they need. Your job is to merge duplicates and clarify ambiguous submissions before they enter your scoring process.

Group similar requests together even if the wording differs. Five users asking for "email notifications," "alerts," and "push updates" are probably describing the same underlying need. Combine them into one consolidated request with a note about demand volume.
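As a minimal sketch of that consolidation step, assume you keep a small, hand-maintained alias map that points different wordings at one canonical request. The `ALIASES` entries and the `consolidate` helper are hypothetical. In practice, a person still decides which wordings really mean the same thing.

```python
from collections import defaultdict

# Hypothetical alias map maintained by whoever triages requests.
ALIASES = {
    "email notifications": "notifications",
    "alerts": "notifications",
    "push updates": "notifications",
}


def consolidate(titles: list[str]) -> dict[str, dict]:
    """Group raw request titles under a canonical name and record demand volume."""
    groups = defaultdict(list)
    for title in titles:
        key = title.strip().lower()
        groups[ALIASES.get(key, key)].append(title)
    return {
        name: {"demand": len(originals), "originals": originals}
        for name, originals in groups.items()
    }


raw = ["Email notifications", "alerts", "Push updates", "Alerts",
       "email notifications", "Better analytics"]
merged = consolidate(raw)
print(merged["notifications"]["demand"])  # 5
```

Keeping the original wording alongside the count preserves the context you'll want when you reach out to clarify.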

Reach out to clarify requests that lack critical details. A two-minute conversation with the requester saves hours of guesswork during evaluation. Ask: "Can you walk me through exactly when you'd use this?" and "What's the specific outcome you need?"

Your scoring system only works if everyone understands how you arrived at the numbers. A rubric you can't explain becomes a black box that kills trust. When someone challenges why their request scored lower than another, you need objective criteria to point to, not vague hunches about what feels right.

Create a simple table that defines exactly what each score means for every criterion. Your team should be able to look at a request and assign scores without second-guessing the definitions. Here's a practical template showing how to prioritize feature requests using clear scoring levels:

| Score | Customer Impact | Business Value | Effort (inverse) |
|---|---|---|---|
| 5 | Affects 75%+ users, critical pain point | $100K+ revenue impact or major competitive advantage | 1-2 weeks, no dependencies |
| 4 | Affects 50-74% users, significant improvement | $50K-$99K revenue impact or clear market differentiation | 3-4 weeks, minor dependencies |
| 3 | Affects 25-49% users, moderate value | $25K-$49K revenue impact or indirect business benefit | 1-2 months, some technical complexity |
| 2 | Affects 10-24% users, nice to have | $10K-$24K revenue impact or speculative benefit | 2-3 months, significant complexity |
| 1 | Affects <10% users, minimal impact | <$10K revenue impact or unclear benefit | 3+ months, major architectural changes |
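The numeric half of a rubric like this translates directly into a lookup. Here is a sketch for the Customer Impact column only, using the percentage bands from the table. It covers reach, not the qualitative depth ("critical pain point" versus "nice to have"), which still needs human judgment. The function name is illustrative.

```python
# Lower bounds from the Customer Impact column, highest band first:
# (minimum % of users affected, score)
IMPACT_BANDS = [(75, 5), (50, 4), (25, 3), (10, 2)]


def customer_impact_score(percent_users_affected: float) -> int:
    """Map the share of users affected to a 1-5 score using the rubric bands."""
    if not 0 <= percent_users_affected <= 100:
        raise ValueError("percentage must be between 0 and 100")
    for minimum, score in IMPACT_BANDS:
        if percent_users_affected >= minimum:
            return score
    return 1  # fewer than 10% of users


print(customer_impact_score(80))  # 5
print(customer_impact_score(30))  # 3
print(customer_impact_score(5))   # 1
```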

Define what specific evidence justifies each score. For customer impact, do you count paying customers differently than trial users? For business value, what data sources validate your estimates? For effort, which team members provide the final assessment?

The best rubrics include real examples from past features that scored at each level, giving your team reference points.

Document edge cases and tie-breaking rules upfront. When two requests score identically, does the one with more customer votes win? Does strategic alignment override pure scores? Writing these decisions down prevents endless debates when you're trying to finalize your roadmap.
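Once a tie-breaking rule is written down, it can live in the sort itself. This sketch assumes the rule is "higher score first, then more customer votes". The `Request` fields and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Request:
    name: str
    score: float  # final weighted score
    votes: int    # customer votes, used only to break ties


def rank(requests: list[Request]) -> list[Request]:
    """Sort by score (descending), then votes (descending), then name for stability."""
    return sorted(requests, key=lambda r: (-r.score, -r.votes, r.name))


backlog = [
    Request("bulk_edit", 3.85, 12),
    Request("sso_login", 3.85, 40),
    Request("dark_mode", 4.10, 3),
]
print([r.name for r in rank(backlog)])  # ['dark_mode', 'sso_login', 'bulk_edit']
```

If strategic alignment should override pure scores, add it as an explicit field ahead of `score` in the sort key so the override is visible rather than applied by hand.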

Now you apply your scoring rubric to every cleaned request in your backlog. This step transforms subjective opinions into comparable numbers that reveal which features deserve immediate attention versus which can wait. The key is maintaining consistency across all evaluations so your final rankings actually mean something.

Gather your core evaluation team (typically a product manager, an engineering lead, and someone from customer success) to score requests together. Working as a group keeps any one person's bias from skewing results. Have each person score independently first, then discuss any criterion where the scores differ by more than one point.
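The "differ by more than one point" rule is easy to automate so the meeting focuses only on real disagreements. A minimal sketch, with made-up reviewer roles and scores for a single request:

```python
def criteria_to_discuss(independent_scores: dict[str, dict[str, int]], max_gap: int = 1) -> dict[str, int]:
    """Return criteria where reviewers' scores differ by more than max_gap points."""
    flagged = {}
    for criterion, by_reviewer in independent_scores.items():
        gap = max(by_reviewer.values()) - min(by_reviewer.values())
        if gap > max_gap:
            flagged[criterion] = gap
    return flagged


# Hypothetical independent scores for one request.
scores = {
    "customer_impact": {"product": 4, "engineering": 4, "customer_success": 5},
    "business_value": {"product": 5, "engineering": 2, "customer_success": 4},
    "effort_inverse": {"product": 3, "engineering": 1, "customer_success": 3},
}
print(criteria_to_discuss(scores))  # {'business_value': 3, 'effort_inverse': 2}
```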

Walk through each request and assign scores for every criterion using your defined rubric:

Scoring as a team surfaces hidden assumptions and ensures everyone understands why certain features rank higher than others.

Sort your entire backlog by final weighted score in descending order. Your highest-scoring requests rise to the top. Now comes the practical part: deciding where to draw the line based on your team's actual capacity for the next quarter or sprint cycle.

Look at your available resources and mark the cutline where cumulative effort estimates match your capacity. Features above the line become your committed roadmap. Those below remain in the backlog for future consideration. This is how to prioritize feature requests in a way that's both data-driven and realistic about what you can actually deliver.
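Here is one way to sketch the cutline, assuming effort is estimated in person-weeks and capacity is a single number for the planning period. Both are simplifications, and the sample backlog is invented.

```python
def draw_cutline(backlog: list[tuple[str, float, int]], capacity_weeks: int):
    """Split a backlog of (name, score, effort_weeks) into committed and deferred items.

    Items are taken in descending score order until the next one would
    exceed capacity. Everything from that point down stays in the backlog.
    """
    ranked = sorted(backlog, key=lambda item: item[1], reverse=True)
    used = 0
    for index, (_, _, effort) in enumerate(ranked):
        if used + effort > capacity_weeks:
            return ranked[:index], ranked[index:]
        used += effort
    return ranked, []


backlog = [
    ("sso_login", 4.5, 3),
    ("api_webhooks", 4.0, 4),
    ("csv_export", 3.2, 5),
    ("dark_mode", 2.0, 1),
]
committed, deferred = draw_cutline(backlog, capacity_weeks=8)
print([name for name, _, _ in committed])  # ['sso_login', 'api_webhooks']
print([name for name, _, _ in deferred])   # ['csv_export', 'dark_mode']
```

This version draws a strict line: `dark_mode` would fit in the remaining week, but it stays below the cut because a higher-ranked item did not fit. Whether to backfill leftover capacity with smaller, lower-ranked items is a judgment call worth writing into your tie-breaking rules.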

Scoring and shipping features is only half the equation. The real value comes from tracking whether your high-scoring bets actually delivered the predicted impact. Without measuring results, you'll repeat the same prioritization mistakes quarter after quarter, building features that look good on paper but fall flat in practice.

Set specific success metrics for each feature before you start building. If you scored a feature high for customer impact because it would reduce churn, define what measurable reduction would prove you were right. If business value drove the priority, identify the revenue or conversion lift you expect to see within 30, 60, or 90 days after launch.

Compare your actual results to your scoring predictions three months post-launch. Did that feature you rated 5 for customer impact really move the needle? If your top-scoring requests consistently underperform while lower-ranked features surprise you with outsized value, your scoring weights need adjustment.
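A lightweight way to run this review is to re-rate each shipped feature on the same 1 to 5 scale once the results are in, then look at the average gap. The sketch below assumes you record a predicted and an observed customer impact score per feature. The feature names and numbers are invented.

```python
from statistics import mean

# Hypothetical post-launch review: (feature, predicted score, observed score), both 1-5.
reviews = [
    ("sso_login", 5, 3),
    ("csv_export", 3, 4),
    ("api_webhooks", 4, 2),
]


def prediction_bias(reviews: list[tuple[str, int, int]]) -> float:
    """Average of predicted minus observed. Positive means you tend to overestimate."""
    return mean(predicted - observed for _, predicted, observed in reviews)


def big_misses(reviews: list[tuple[str, int, int]], min_gap: int = 2) -> list[str]:
    """Features whose observed score is at least min_gap points away from the prediction."""
    return [name for name, predicted, observed in reviews if abs(predicted - observed) >= min_gap]


print(prediction_bias(reviews))
print(big_misses(reviews))  # ['sso_login', 'api_webhooks']
```

A consistently positive bias on one criterion suggests its rubric definitions are too generous, or that its weight deserves a second look.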

Treating your prioritization framework as a hypothesis you test with each release turns roadmap planning from guesswork into learning.

Close the loop with every user who submitted or voted on a shipped feature. Send them a direct message explaining that you built their request and invite them to try it. This simple act transforms feature releases from announcements into conversations that strengthen customer relationships.

Share what you learned from outcomes publicly on your roadmap or changelog. When a feature performs differently than expected, explain why and what you'll adjust next time. Transparency about both wins and misses builds trust and encourages better quality feedback in future submissions.

You now have a complete framework for how to prioritize feature requests using defendable scoring methods. The system works because it replaces opinion battles with objective criteria everyone can understand and reference when making roadmap decisions.

Start implementing this scoring approach with your next batch of incoming requests. Build your rubric, assign weights that match your current business priorities, and score your top 20 backlog items as a team exercise. The first round feels awkward, but by the third or fourth session, evaluation conversations become faster and more focused.

Centralized feedback collection makes this entire process smoother. Instead of chasing down scattered requests and missing valuable context, you need everything flowing into one organized system. Koala Feedback gives you the tools to capture user requests, apply your scoring methodology, and communicate roadmap decisions back to the people who submitted them. Start your free trial today and turn feature request chaos into strategic clarity.
