Every product decision you make without talking to your users is essentially a guess. Some guesses work out. Most don't. A solid user research plan is what separates teams that build features people actually want from teams that ship into the void and hope for the best.

But here's the thing: research without structure leads to messy data, conflicting takeaways, and wasted time. You need a clear framework that defines who you're talking to, what you're trying to learn, and how you'll act on what you find. That's exactly what a user research plan gives you: a repeatable process for turning curiosity into product clarity.

At Koala Feedback, we help teams collect, organize, and prioritize user feedback every day. We've seen firsthand how the best product teams pair ongoing feedback collection with intentional research efforts, and how that combination drives better decisions. This guide walks you through how to create a user research plan step by step, complete with practical examples, key questions to ask, and a template you can start using right away.

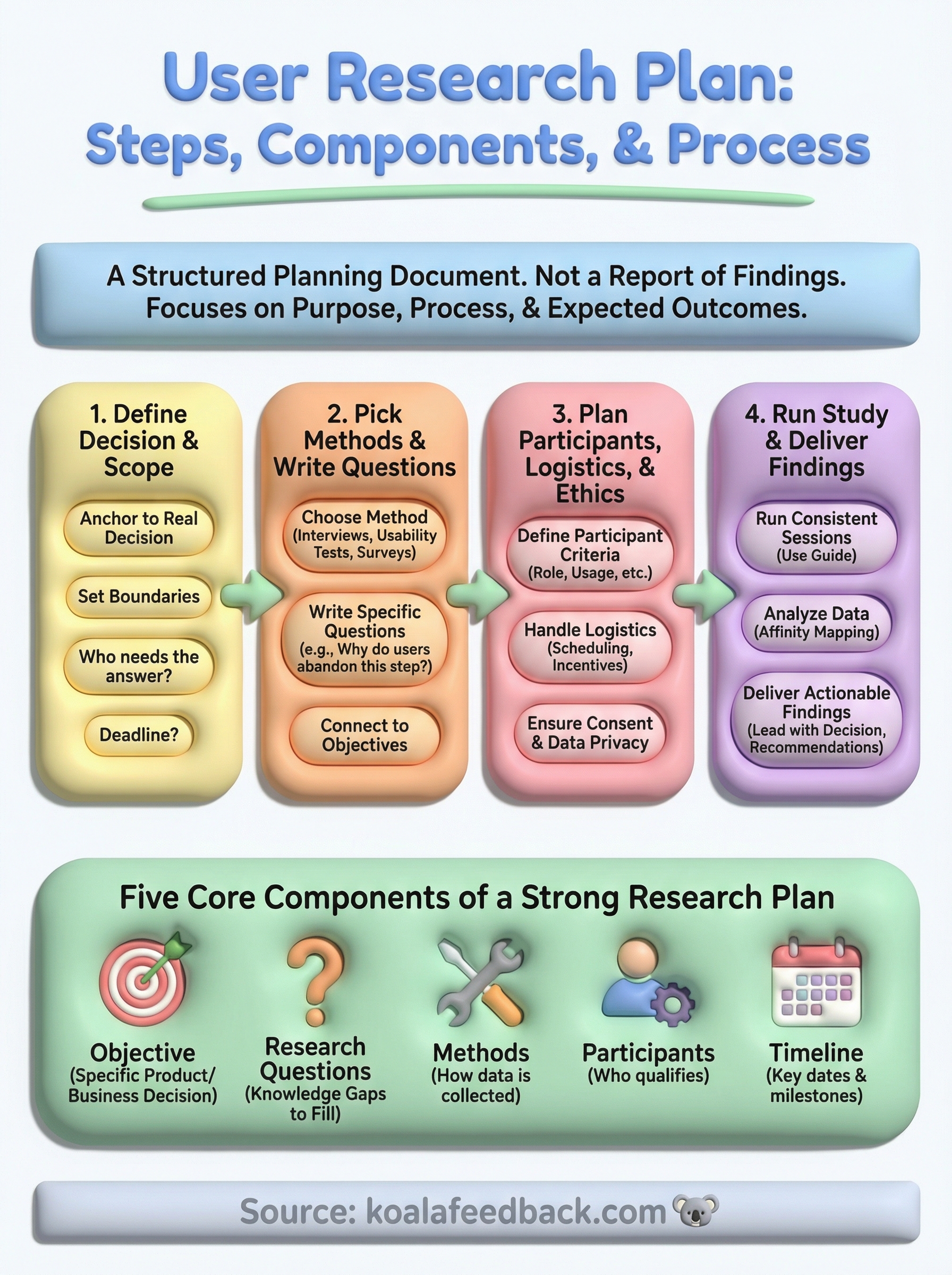

A user research plan is a structured document that defines the purpose, process, and expected outcomes of a research study before you start collecting any data. Think of it as the blueprint for your research project. It spells out what you're studying, how you'll study it, and what you'll do with the results. Without it, even well-intentioned research can drift, producing data that's interesting but doesn't actually drive decisions.

A research plan is not a report of findings. It's a planning document you create before the work begins, covering everything from the research questions you want answered to the participants you need and the methods you'll use to reach them. At its core, it's a commitment between you and your stakeholders: this is what we're going to learn, this is how we're going to learn it, and this is why it matters to the product.

Most research plans cover six core components: the research objective, the research questions, the methods, the participant criteria, the timeline, and the deliverables, which spell out how findings will be shared and acted on. The exact format depends on your team's size and the scope of the study, but the underlying goal is always the same: give your research direction before you spend time and money running it.

When teams skip the planning phase, they often end up running research that answers the wrong questions. You might conduct ten user interviews only to realize halfway through that you were focused on the wrong workflow. Or you might gather a pile of survey responses with no clear analysis path because the questions weren't designed with a specific decision in mind.

A research plan doesn't slow you down. It prevents you from running fast in the wrong direction.

The cost of unplanned research isn't just time. It's the erosion of stakeholder trust in your research process. When decision-makers see studies that don't produce actionable insights, they stop funding them. A clear plan signals that your research is purposeful, rigorous, and worth the investment before a single interview is scheduled.

Not every conversation with a user requires a formal research plan. A quick five-minute call to clarify a support ticket doesn't need a planning document. But if you're trying to answer a question that will influence a significant product or business decision, a formal plan is worth the effort.

Common situations where a user research plan is essential include:

The more consequential the decision, the more important it becomes to approach your research with structure. A strong user research plan gives your team alignment upfront, which means fewer debates after the data comes in and faster action on what you learn.

Before you run a single interview or send out a survey, you need to know what goes inside a user research plan. Every plan looks slightly different depending on the scope of the study, but six core elements show up in every effective research effort regardless of team size or product stage. Understanding these components upfront helps you write a plan that actually guides your work rather than collecting dust in a shared folder.

The research objective is the single most important element in your plan. It answers the question: why are you doing this study at all? A strong objective is tied to a specific product or business decision, not a general desire to "understand users better." For example, "Determine whether new users can complete onboarding without support assistance" is a focused objective. "Learn about the user experience" is not.

Your objective should be specific enough that anyone on your team could read it and immediately understand what decision this research will inform.

Research questions are not the same as the interview or survey questions you will ask participants. They are the knowledge gaps you need to fill to meet your objective. A plan to evaluate onboarding might include research questions like: Where do users get stuck? What information do they expect to see first? Keeping your list to three to five questions keeps the study focused and prevents scope creep before it starts.

The remaining four elements work together to define how the research will run in practice. Your chosen method, whether that is interviews, usability tests, or a survey, should match the type of questions you are asking. Your participant criteria should specify exactly who qualifies, including things like job role, product usage level, or time since sign-up. The table below shows how these elements connect:

| Element | What it defines |
|---|---|
| Method | How you will collect data |
| Participants | Who qualifies and how many |
| Timeline | Key dates from recruiting to readout |
| Deliverables | What you will produce and who receives it |

Locking down these four practical elements before you start protects you from mid-study confusion and keeps your stakeholders clear on what to expect and when.

Every strong user research plan starts in the same place: a real decision your team needs to make. Before you think about methods or participants, you need to get crystal clear on what the research is meant to unlock. This step takes less than an hour but saves you days of misdirected effort down the line.

The most common mistake teams make at this stage is framing their research around a topic instead of a decision. "We want to understand how users feel about onboarding" is a topic. "We need to decide whether to redesign the onboarding flow before our Q3 launch" is a decision. The difference matters because a decision has a deadline, a stakeholder who needs the answer, and a clear action that follows from the findings.

A good research objective should make the "so what" obvious before you run a single session.

Start by writing one sentence that completes this prompt: "We are running this research to help us decide whether to..." If you cannot finish that sentence concisely, your scope is too broad. Use the template below to lock it in:

| Field | Example |
|---|---|
| Decision to be made | Redesign the onboarding flow or keep the current version |
| Who needs the answer | Head of Product, Engineering Lead |
| Deadline for the decision | Before sprint planning on May 12 |
| What happens if we don't research | We guess and risk a 6-week build that misses the mark |

Once you have a decision, define what is inside and outside the scope of this study. Scope boundaries prevent your research from expanding mid-study to cover every question your team has ever had about users. If your decision is about onboarding, your scope should cover the sign-up flow through the first successful action. Billing workflows, advanced settings, and feature discovery belong in a separate study.

Write two short lists before you move forward: what this study will cover, and what it will not. Share both with your stakeholders. Getting alignment on what you are not researching is just as valuable as agreeing on what you are, because it removes the temptation to widen scope the moment someone asks "can we also figure out why users churn?"

Once you have a defined decision and clear scope, the next step in your user research plan is choosing how you will collect data and what you will specifically ask. These two choices shape everything else: your timeline, your recruiting needs, and the quality of insights you walk away with. Getting both right before you start recruiting saves you from redesigning your study halfway through.

Your method should match the type of question you are trying to answer. Exploratory questions like "why do users abandon this step?" call for qualitative methods such as moderated interviews or diary studies. Evaluative questions like "can users complete this task without errors?" point toward usability testing. When you need to measure something at scale, surveys give you the breadth that interviews cannot.

Pick the method that fits your question, not the method your team is most comfortable running.

Use this table to match your question type to the right approach:

| Question type | Best method | What you get |
|---|---|---|
| Why do users behave a certain way? | Moderated interviews | Deep context and reasoning |
| Can users complete a task successfully? | Usability testing | Task completion rates, friction points |
| How widespread is a behavior or opinion? | Survey | Quantitative patterns across a large sample |
| How do users interact with a product over time? | Diary study | Longitudinal behavioral data |

Remember that research questions describe the knowledge gaps your study needs to close; they are not the questions you will put to participants in an interview or survey. Each one should connect directly back to the decision you defined in Step 1.

Strong research questions are specific, answerable, and tied to a single topic. Use this template to write yours:

Aim for three to five research questions per study. If your list grows beyond five, split the study into two separate efforts. A tightly scoped set of questions produces cleaner data and gives you findings your stakeholders can act on immediately.
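If your team keeps research plans alongside other project files, the rules of thumb above can be turned into a quick pre-kickoff check. The Python below is a minimal sketch, not a standard tool: `ResearchPlan` and `plan_warnings` are hypothetical names used only for illustration, and the thresholds (three to five research questions, a defined decision, an out-of-scope list) come straight from this guide.

```python
from dataclasses import dataclass, field


@dataclass
class ResearchPlan:
    # Hypothetical fields mirroring the plan elements described in this guide.
    decision: str                    # "We are running this research to help us decide whether to..."
    research_questions: list[str]    # knowledge gaps, not interview questions
    method: str
    participant_criteria: list[str]
    timeline: str
    deliverables: str
    out_of_scope: list[str] = field(default_factory=list)


def plan_warnings(plan: ResearchPlan) -> list[str]:
    """Return rule-of-thumb warnings; an empty list means nothing was flagged."""
    warnings = []
    if not plan.decision.strip():
        warnings.append("No decision defined.")
    count = len(plan.research_questions)
    if count < 3:
        warnings.append(f"Only {count} research question(s); aim for three to five.")
    elif count > 5:
        warnings.append(f"{count} research questions; consider splitting into two studies.")
    if not plan.out_of_scope:
        warnings.append("No out-of-scope list; agree on what this study will not cover.")
    return warnings


onboarding = ResearchPlan(
    decision="Redesign the onboarding flow or keep the current version",
    research_questions=[
        "Where do users get stuck?",
        "What information do they expect to see first?",
    ],
    method="Usability testing",
    participant_criteria=["Signed up within the last 30 days"],
    timeline="Readout before sprint planning on May 12",
    deliverables="Findings summary for the Head of Product and Engineering Lead",
    out_of_scope=["Billing workflows", "Advanced settings", "Feature discovery"],
)

for warning in plan_warnings(onboarding):
    print(warning)  # prints: Only 2 research question(s); aim for three to five.
```

The check only flags gaps; deciding whether a flagged plan is still good enough remains a judgment call for you and your stakeholders.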

With your methods and research questions locked in, the next part of building a solid user research plan is deciding who you need to talk to and how you will actually run the study. Skipping this step leads to recruiting the wrong people, missing sessions, and collecting data you cannot use because you forgot to get consent.

Your participant criteria should describe the specific person who can meaningfully answer your research questions. Vague criteria like "our users" produce unreliable data because different users have wildly different contexts. If your study is about onboarding, you want people who signed up within the last 30 days, not power users who have been on the platform for two years.

The tighter your participant criteria, the more trustworthy your findings will be.

Write a short screener that filters candidates before you schedule them. Use this template as a starting point:

| Screener field | Example answer that qualifies |
|---|---|
| Job title or role | Product manager, founder, or operations lead |
| Product usage | Signed up within the last 30 days |
| Company size | 10 to 200 employees |
| Prior experience | Has not used a direct competitor product |
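If you recruit from a sign-up export or a CRM list, the screener can be applied mechanically before anyone is scheduled. The Python sketch below assumes a hypothetical `Candidate` record whose fields mirror the example table; the role list, 30-day window, and 10 to 200 employee range are the example answers above, not recommendations for every study.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Example screener values from the table above; adjust them for your own study.
QUALIFYING_ROLES = {"product manager", "founder", "operations lead"}
MAX_DAYS_SINCE_SIGNUP = 30
COMPANY_SIZE_RANGE = (10, 200)


@dataclass
class Candidate:
    # Hypothetical record; real field names depend on where your candidate list comes from.
    name: str
    role: str
    signup_date: date
    company_size: int
    used_competitor: bool


def qualifies(c: Candidate, today: date) -> bool:
    """True only if the candidate passes every screener field."""
    low, high = COMPANY_SIZE_RANGE
    days_since_signup = today - c.signup_date
    return (
        c.role.strip().lower() in QUALIFYING_ROLES
        and timedelta(0) <= days_since_signup <= timedelta(days=MAX_DAYS_SINCE_SIGNUP)
        and low <= c.company_size <= high
        and not c.used_competitor
    )


today = date(2024, 5, 1)
candidates = [
    Candidate("A", "Product Manager", date(2024, 4, 20), 45, False),
    Candidate("B", "Engineer", date(2024, 4, 25), 80, False),   # role does not qualify
    Candidate("C", "Founder", date(2023, 11, 2), 12, False),    # signed up too long ago
]
shortlist = [c.name for c in candidates if qualifies(c, today)]
print(shortlist)  # ['A']
```

Keeping every criterion in one function makes it easy to see why someone was screened out, which helps when stakeholders ask why a particular customer was not invited.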

Aim for five to eight participants for qualitative studies like interviews or usability tests. Usability research, most notably Jakob Nielsen's work, suggests that a group this size surfaces most of the recurring issues, while each additional participant beyond that point adds progressively less new information.
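The five-to-eight range is usually traced to the problem-discovery model published by Jakob Nielsen and Thomas Landauer, which estimates the share of usability problems found by $n$ participants when each participant reveals any given problem with probability $L$. Nielsen reported an average $L$ of about 0.31 across the projects he studied; treat that value as an assumption, since your product and tasks will have their own rate. A worked example under that assumption:

$$
\text{Found}(n) = 1 - (1 - L)^{n}
$$

$$
L = 0.31:\qquad \text{Found}(5) = 1 - 0.69^{5} \approx 0.84, \qquad \text{Found}(8) = 1 - 0.69^{8} \approx 0.95
$$

Going from five to eight participants adds roughly ten percentage points, which is the diminishing return the range is meant to balance against your time.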

Logistics and ethics are not afterthoughts. They are the infrastructure that keeps your study running on schedule and keeps your participants protected. Before you send a single recruiting message, work through this checklist:

Handling consent and data storage correctly also protects your company. If you collect recordings, be transparent about how they will be used and give participants the right to withdraw at any time.

The final step in your user research plan is where the preparation pays off. Running sessions well and translating raw data into clear, actionable findings is what separates research that shapes decisions from research that lives in a folder nobody opens.

Before your first session starts, prepare a discussion guide that lists your research questions as prompts, not scripts. A discussion guide keeps you on track without making the conversation feel mechanical. Start each session the same way: introduce yourself, explain the purpose of the study, confirm consent, and remind participants that you are testing the product, not them. This framing reduces anxiety and produces more honest responses.

Consistency across sessions is what makes your findings comparable. If you change your approach mid-study, you cannot trust that differences in responses reflect real user behavior rather than how you ran the session.

Use this structure for each session:

| Phase | What to do | Time |
|---|---|---|
| Opening | Introduce the study, confirm consent | 5 min |
| Warm-up | Ask background questions | 5 min |
| Core tasks | Work through your research questions | 30-40 min |
| Debrief | Ask for overall impressions | 5 min |

After your sessions wrap up, synthesize your notes before the details fade. Set aside time within 48 hours of your last session to review recordings or notes and tag recurring themes. Affinity mapping works well here: write each observation on a separate note, group similar observations together, and label each cluster. This process turns scattered data into patterns your team can act on.
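Affinity mapping itself is a hands-on grouping exercise, but once observations are tagged you can tally the clusters to see which themes came up across the most participants. The Python sketch below assumes each observation has already been reduced to a participant ID, a theme tag, and a short note; the tags and notes are hypothetical. It ranks themes by distinct participants rather than raw note count, so one talkative participant cannot inflate a theme.

```python
from collections import defaultdict

# Each observation: (participant_id, theme_tag, note). All values are hypothetical.
observations = [
    ("P1", "unclear-next-step", "Paused on an empty dashboard"),
    ("P2", "unclear-next-step", "Asked what to do after sign-up"),
    ("P2", "jargon", "Did not understand the term 'workspace'"),
    ("P3", "unclear-next-step", "Clicked around looking for a tutorial"),
    ("P3", "jargon", "Unsure what 'board' meant"),
    ("P4", "slow-import", "Waited a long time on CSV import"),
]


def cluster_by_theme(obs):
    """Group notes under each theme tag and record which participants raised it."""
    clusters = defaultdict(lambda: {"participants": set(), "notes": []})
    for participant, tag, note in obs:
        clusters[tag]["participants"].add(participant)
        clusters[tag]["notes"].append(note)
    # Rank themes by the number of distinct participants, highest first.
    return sorted(
        clusters.items(),
        key=lambda item: len(item[1]["participants"]),
        reverse=True,
    )


for tag, data in cluster_by_theme(observations):
    print(f"{tag}: {len(data['participants'])} participants, {len(data['notes'])} notes")
```

The ranking is a starting point for discussion, not a verdict: a theme raised by one participant can still matter if it blocks a critical task.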

Your final deliverable should match what your stakeholders actually need. Most teams produce one of three formats:

Whichever format you choose, lead with the decision rather than the methodology. State what you learned, what it means for the product roadmap, and what you recommend your team do next.

You now have everything you need to build and run a user research plan that produces findings your team can actually act on. Start small if this is your first structured study: pick one decision that is coming up in the next sprint, write a focused objective, and run five interviews. One focused study will teach you more about your users than six months of guessing.

The research process does not end when your study wraps up. The best product teams pair research with ongoing feedback collection so insights from studies reinforce what users are telling you day to day. When you connect both streams, patterns become impossible to ignore and prioritization gets easier.

If you want a central place to collect, organize, and act on user feedback between studies, start a free trial with Koala Feedback and see how teams use it to keep users at the center of every product decision.
